Retrieval-Augmented Generation (RAG) is undergoing a major shift, and it’s happening without a single vector in sight. A recent approach, dubbed ‘Vectorless RAG,’ drops the embedding-based retrieval that underpins most current RAG systems and replaces it with a three-stage tree-and-reasoning architecture. The reported result: 98.7% accuracy on the FinanceBench benchmark. This isn’t just an incremental improvement; it’s a fundamental rethinking of how large language models access and process information.

The system hinges on what’s termed a ‘tree-and-reasoning’ approach. Instead of splitting documents into chunks, converting them into dense vectors, and searching by semantic similarity, it builds a hierarchical tree for each document. Think of it as an extremely detailed outline whose entries point back to the underlying text. Retrieval then becomes a guided traversal of that tree, with the LLM itself deciding at each level which branch to follow. This allows for more nuanced, context-aware navigation than the often-blunt instrument of vector similarity.

Why Ditch Embeddings Entirely?

The conventional wisdom in RAG has been to embed everything. Documents are chunked, converted into numerical vectors, and stored in a vector database. When a query comes in, it’s also embedded, and the system searches for the most similar vectors, retrieving the corresponding text chunks. This works, to a point. But it struggles with highly structured data, nuanced queries, or situations where the precise location and hierarchy of information are paramount. The problem with embeddings, as anyone who has wrestled with chunk sizes and similarity thresholds knows, is that they’re approximations. They capture semantic meaning, but they can lose the finer points of structure and relationships. And in domains like finance, where precision is everything, approximations can lead to costly errors.
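The pipeline described above can be sketched in a few lines. This is a toy under stated assumptions: `embed` is a bag-of-words counter rather than a learned embedding model, and a plain list stands in for the vector database. The chunks are written as they might look after splitting, with the section headings that told them apart already cut away.

```python
import math
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    """Toy 'embedding': word counts. Real systems use a learned model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def top_k(query: str, chunks: List[str], k: int = 1) -> List[str]:
    """Embed the query and return the k most similar chunks."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


# Hypothetical chunks: the headings that said which fiscal year each figure
# belongs to are no longer part of the chunk text.
chunks = [
    "total revenue was 120 million",
    "total revenue was 135 million",
    "the company sells widgets",
]

query = "total revenue in 2023"
scores = [cosine(embed(query), embed(c)) for c in chunks]
print(scores[0] == scores[1])  # True: the two revenue chunks are indistinguishable
```

The exact tie is a product of the toy embedding, and a learned model would score the two chunks slightly differently. The underlying issue is the same either way: once the heading is gone, nothing in the chunk says which year it describes.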

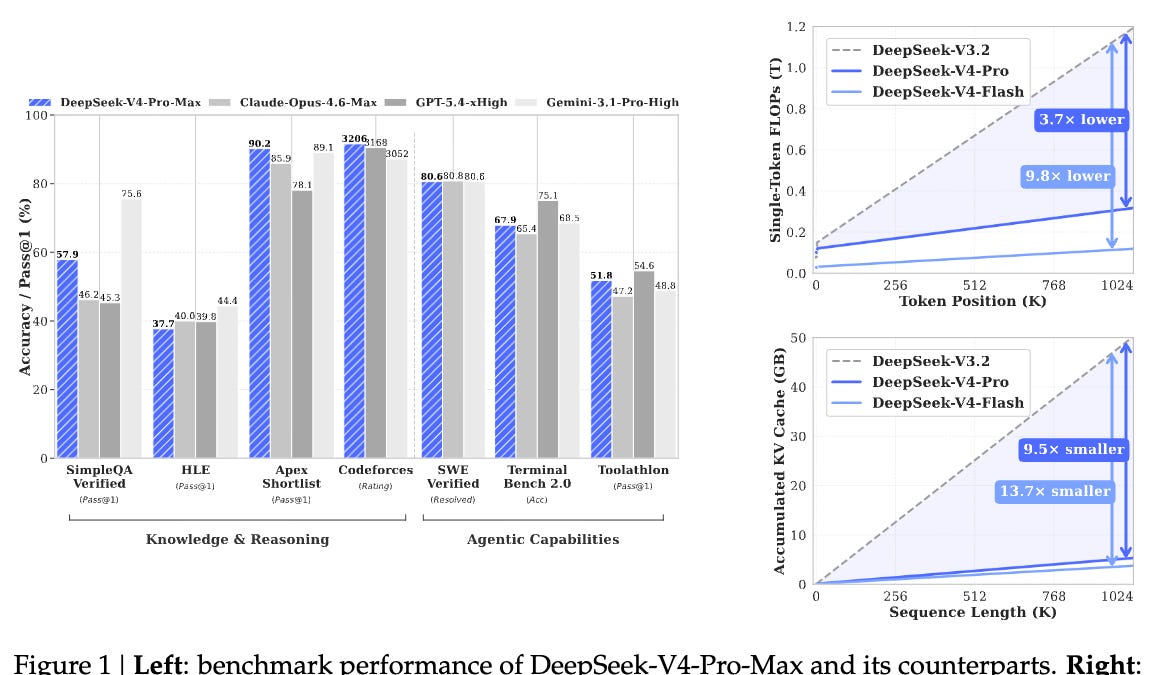

The 98.7% FinanceBench score isn’t just a data point; it’s a signal worth paying attention to. FinanceBench is designed to test a model’s ability to answer demanding financial questions, requiring it to synthesize information from various documents and understand how the pieces relate. Achieving such a high score without embeddings suggests a real advantage in how this Vectorless RAG architecture finds and retrieves information. It implies that for certain critical applications, especially those demanding high accuracy and explainability, the traditional vector approach might be obsolete.

PageIndex, hierarchical document trees, LLM-guided traversal, and the 98.7% FinanceBench result — how reasoning-based retrieval works, why it’s a game changer for RAG, and what it means for the future of AI information retrieval.

This isn’t to say that vector embeddings are dead. For general-purpose chatbots or summarization tasks, they’re likely to remain a cost-effective and powerful tool. But for specialized, high-stakes applications where the cost of error is substantial, this move towards explicit structural representation and LLM-driven reasoning is compelling. It represents a maturation of RAG, moving beyond brute-force semantic matching to a more intelligent, purpose-built retrieval mechanism.

What Does This Mean for Developers?

The implications for developers and data engineers are significant. Implementing a Vectorless RAG system requires a different mindset and different tools. Instead of focusing on embedding models and vector database optimization, the emphasis shifts to robust document parsing, efficient tree construction algorithms, and careful prompt engineering to guide the LLM through the data structure. This isn’t necessarily easier, but it offers a path to higher accuracy in demanding use cases. The challenge will be in creating scalable and maintainable systems that can handle the complexity of these hierarchical structures and LLM interactions.
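As a rough illustration of the parsing and tree-construction step, the sketch below turns a document with markdown-style `#` headings into a nested structure using a stack. The input format and the names `Section`, `build_tree`, and `outline` are assumptions made for this example. Real filings are usually PDFs, where recovering headings reliably is the hard part.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Section:
    title: str
    level: int
    body: List[str] = field(default_factory=list)
    children: List["Section"] = field(default_factory=list)


def build_tree(lines: List[str]) -> Section:
    """Build a section tree from lines where headings start with one or more '#'."""
    root = Section("ROOT", 0)
    stack = [root]  # path from the root to the section currently being filled
    for line in lines:
        if line.startswith("#"):
            level = len(line) - len(line.lstrip("#"))
            node = Section(line.lstrip("#").strip(), level)
            # Close every open section at the same or a deeper level.
            while stack[-1].level >= level:
                stack.pop()
            stack[-1].children.append(node)
            stack.append(node)
        elif line.strip():
            stack[-1].body.append(line.strip())
    return root


def outline(node: Section, depth: int = 0) -> List[str]:
    """Flatten the tree into indented titles, for inspection."""
    rows = []
    for child in node.children:
        rows.append("  " * depth + child.title)
        rows.extend(outline(child, depth + 1))
    return rows


doc = """# Annual Report
## Financial Statements
### Income Statement
Revenue was 120.
### Balance Sheet
Total assets were 300.
## Risk Factors
Supply chain exposure.
""".splitlines()

tree = build_tree(doc)
print("\n".join(outline(tree)))
```

The stack keeps construction to a single pass over the document, which matters once inputs run to hundreds of pages.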

Furthermore, the ability of the LLM to guide the traversal suggests a more interpretable RAG process. Instead of just returning a list of similar chunks, the LLM’s ‘reasoning’ can be exposed, showing why it chose certain paths through the document tree. This transparency is invaluable in regulated industries like finance, where understanding the provenance of an answer is as important as the answer itself.
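One way such a trace could be captured is to have the decision step return a reason alongside its choice and log both at every level. The sketch below assumes a nested-dict tree and uses `choose_by_overlap`, a word-overlap rule standing in for the LLM. In a real system the reason would be the model’s own explanation; all names here are hypothetical.

```python
from typing import Callable, Dict, List, Tuple

Tree = Dict  # {"title": str, "children": [Tree, ...]} (children optional)


def choose_by_overlap(query: str, children: List[Tree]) -> Tuple[int, str]:
    """Stand-in for an LLM: return (index of chosen child, reason for the choice)."""
    query_words = set(query.lower().split())
    best, shared = 0, set()
    for i, child in enumerate(children):
        overlap = query_words & set(child["title"].lower().split())
        if len(overlap) > len(shared):
            best, shared = i, overlap
    if shared:
        return best, f"shares {sorted(shared)} with the query"
    return best, "no overlap; defaulted to first child"


def traverse_with_trace(
    root: Tree,
    query: str,
    choose: Callable[[str, List[Tree]], Tuple[int, str]] = choose_by_overlap,
) -> Tuple[Tree, List[Dict[str, str]]]:
    """Walk to a leaf, recording which branch was taken at each level and why."""
    node, trace = root, []
    while node.get("children"):
        index, reason = choose(query, node["children"])
        node = node["children"][index]
        trace.append({"chose": node["title"], "reason": reason})
    return node, trace


filing = {"title": "10-K", "children": [
    {"title": "Risk Factors"},
    {"title": "Financial Statements", "children": [
        {"title": "Cash Flow Statement"},
        {"title": "Income Statement"},
    ]},
]}

leaf, trace = traverse_with_trace(filing, "cash flow in the financial statements")
for step in trace:
    print(step["chose"], "<-", step["reason"])
```

The resulting trace is an ordered list of decisions that can be stored with the answer, which is the kind of provenance record an auditor could review.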

The core innovation here is the shift from implicit semantic similarity to explicit structural reasoning. It’s a move that acknowledges the limitations of purely vector-based approaches and offers a more tailored solution for complex information retrieval. While the journey to widespread adoption will likely involve significant engineering effort and standardization, the early results from Vectorless RAG are too impressive to ignore. This might just be the future of RAG, and it’s a future where embeddings are, in some critical areas, no longer required.