Eighteen months ago, the author rolled out their first production-grade Retrieval-Augmented Generation (RAG) system. Not a pilot, not a proof-of-concept, but a system live in the wild, grappling with real-world data at Unilever.

And the immediate takeaway? It’s not about the AI model itself, or the vector database. Those are just components. The real battleground for RAG, as in so many enterprise AI deployments, lies in the data: specifically, its management, its lineage, and its sheer, unyielding volume.

The Unseen Data Infrastructure

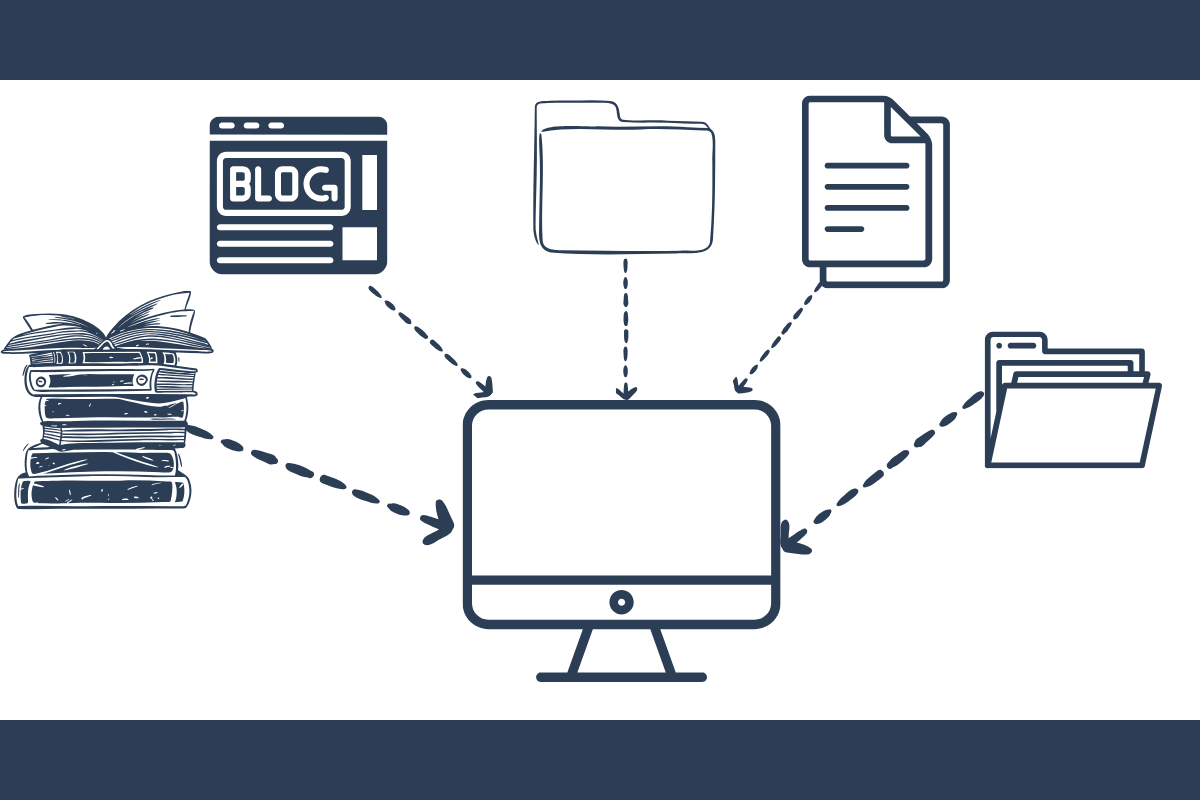

Here’s the thing: the headlines scream about LLM capabilities, about zero-shot learning and parameter tuning. But for a system designed to ground those powerful models in proprietary knowledge, the bedrock is far less glamorous. It’s about ensuring the right documents are ingested, correctly chunked, and accurately embedded. It’s about knowing which version of a product specification was used, when it was updated, and why. Without this meticulous data hygiene, your RAG system becomes a probabilistic guesser, not a reliable knowledge assistant.
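To make the point about versions and lineage concrete, here is a minimal sketch of chunking that attaches provenance to every chunk. It is not the system described in the original article: the `Chunk` fields, the `chunk_document` helper, and the fixed-size character windows are all assumptions chosen for illustration. A real pipeline would more likely split on sentences or tokens.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    doc_id: str        # hypothetical stable identifier for the source document
    doc_version: str   # e.g. the revision label of a product specification
    updated_at: str    # ISO 8601 date of the source revision
    position: int      # index of this chunk within the document
    text: str
    content_hash: str  # SHA-256 of the chunk text, useful for change detection


def chunk_document(doc_id, doc_version, updated_at, text, size=200, overlap=50):
    """Split text into overlapping character windows, each carrying lineage metadata."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    chunks = []
    step = size - overlap
    for position, start in enumerate(range(0, len(text), step)):
        piece = text[start:start + size]
        digest = hashlib.sha256(piece.encode("utf-8")).hexdigest()
        chunks.append(Chunk(doc_id, doc_version, updated_at, position, piece, digest))
        if start + size >= len(text):
            break  # the last window already reached the end of the text
    return chunks
```

Because each chunk records `doc_version` and `updated_at`, an answer can be traced back to the exact revision it came from, and the hash makes it cheap to skip re-embedding chunks whose text has not changed.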

The author’s candid reflection highlights a common blind spot in the AI gold rush. Companies are so eager to deploy the generative component that they often underestimate the Herculean task of preparing the retrieval component. That preparation means the metadata must be pristine, the document parsing robust, and the indexing strategy adaptable. It’s the unsexy plumbing that makes the glamorous AI faucet actually work.

Shifting Gears: From Build to Maintain

So, what would they do differently? For starters, a much earlier and more aggressive focus on data pipelines and monitoring. This isn’t a set-it-and-forget-it architecture. Production RAG demands constant vigilance. Think about it: a single malformed document in the ingestion pipeline can, in theory, corrupt the entire knowledge base that the LLM relies upon.
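One common defense against a malformed document is a validation gate in front of the index that quarantines bad input instead of ingesting it. The sketch below is an assumption about what such a gate might look like, not the author's pipeline: the field names in `REQUIRED_FIELDS`, the `min_chars` threshold, and both function names are hypothetical.

```python
REQUIRED_FIELDS = ("doc_id", "version", "updated_at", "text")  # hypothetical schema


def validate_document(doc, min_chars=20):
    """Return a list of problems; an empty list means the document may be ingested."""
    problems = []
    for field in REQUIRED_FIELDS:
        if not doc.get(field):
            problems.append(f"missing field: {field}")
    text = doc.get("text") or ""
    if text and len(text.strip()) < min_chars:
        problems.append("text too short to be useful")
    if "\ufffd" in text:
        # U+FFFD usually means the bytes were decoded with the wrong encoding
        problems.append("text contains replacement characters (likely bad decoding)")
    return problems


def partition_batch(docs):
    """Split a batch into accepted documents and quarantined (doc, problems) pairs."""
    accepted, quarantined = [], []
    for doc in docs:
        problems = validate_document(doc)
        if problems:
            quarantined.append((doc, problems))
        else:
            accepted.append(doc)
    return accepted, quarantined
```

Keeping the rejected documents together with their reasons, rather than silently dropping them, gives operators something to review and doubles as a simple audit trail.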

This requires a shift in mindset from pure development to ongoing operations. You need systems in place to automatically detect data drift, to flag anomalies in retrieval relevance, and to provide clear audit trails. The author explicitly points out the need for sophisticated tooling to manage the knowledge base lifecycle – not just building it, but curating, updating, and retiring data over time. This lifecycle management, often an afterthought, is the difference between a production system and a data swamp.
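As one illustration of flagging anomalies in retrieval relevance, the sketch below compares each query's top similarity score against a rolling baseline. The `RelevanceMonitor` class, its window size, and the z-score threshold are assumptions made for this example; the original article does not say which signals or tools were used.

```python
from collections import deque
from statistics import mean, stdev


class RelevanceMonitor:
    """Flag queries whose top retrieval score falls far below the recent baseline."""

    def __init__(self, window=50, min_history=10, z_threshold=3.0):
        self.history = deque(maxlen=window)  # recent non-anomalous top scores
        self.min_history = min_history       # observations needed before alerting
        self.z_threshold = z_threshold       # how many deviations below counts as odd

    def observe(self, top_score):
        """Record a query's top similarity score; return True if it is anomalously low."""
        anomalous = False
        if len(self.history) >= self.min_history:
            baseline = mean(self.history)
            spread = stdev(self.history)
            if spread > 0 and (baseline - top_score) / spread > self.z_threshold:
                anomalous = True
        if not anomalous:
            self.history.append(top_score)
        return anomalous
```

Anomalous scores are deliberately kept out of the baseline, so a sustained drop keeps raising flags instead of quietly becoming the new normal. That is a design choice, and a team that expects legitimate shifts in score distribution might prefer a baseline that adapts.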

“We were so focused on the prompt engineering and model performance, we underestimated the foundational need for strong data management and continuous monitoring. The data is the foundation; the LLM is just the house built on it.”

This quote, stark in its simplicity, cuts to the core of enterprise AI challenges. It’s not just about the cutting-edge LLM; it’s about the diligent, often painstaking, work of preparing and maintaining the information that the LLM will query.

Why Did This RAG System Need Redesigning?

The original article’s introspection centers on one realization: building the RAG system was only the first step. The subsequent, and arguably more critical, phase is operationalization and continuous improvement, and that phase was inadequately planned for. It includes:

- Scalability: Ensuring the system could handle increasing data volumes and user queries without performance degradation.

- Maintainability: Designing for ease of updates, bug fixes, and integration with other enterprise systems.

- Monitoring: Implementing comprehensive tracking of retrieval accuracy, generation quality, and system uptime.

- Data Governance: Establishing clear protocols for data ingestion, validation, and lifecycle management to ensure data integrity and compliance.
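Of the items above, retrieval accuracy is the easiest to pin down with a number. A minimal sketch, assuming a small hand-labeled evaluation set exists, is hit rate at k: the share of test queries for which at least one known-relevant document appears in the top k results. The function name and the shape of its inputs are hypothetical.

```python
def hit_rate_at_k(results, relevant, k=5):
    """Fraction of queries whose top-k results include at least one relevant document.

    results:  dict mapping query -> ranked list of retrieved document IDs
    relevant: dict mapping query -> set of document IDs labeled as relevant
    """
    if not results:
        return 0.0
    hits = 0
    for query, ranked_ids in results.items():
        if set(ranked_ids[:k]) & relevant.get(query, set()):
            hits += 1
    return hits / len(results)
```

Tracked over time against the same labeled queries, a falling value is an early hint that ingestion, chunking, or indexing changes have hurt retrieval, before users notice it in generated answers.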

The author’s retrospective isn’t a failure announcement; it’s a testament to the iterative nature of complex software engineering, especially in the nascent field of AI applications. It’s a valuable data point for any organization contemplating its own RAG deployments, underscoring the necessity of a holistic approach that prioritizes data infrastructure as much as the AI model itself.

What’s the Real Cost of Bad RAG Data?

Beyond the engineering overhead, the tangible impact of a poorly managed RAG system can be significant. Inaccurate information surfacing to employees or, worse, customers, can lead to flawed decision-making, reputational damage, and lost revenue. For instance, if a sales team relies on outdated product specifications surfaced by a RAG system, they might make incorrect promises, leading to dissatisfied clients and contractual issues. Similarly, if an internal support bot provides incorrect troubleshooting steps due to a flawed knowledge base, it wastes employee time and hinders productivity. The author’s experience at Unilever, while not explicitly detailing financial losses, strongly implies that the cost of correcting such errors—both in time and in lost trust—far outweighs the investment in strong data management from the outset.

This isn’t just about building a clever chatbot. It’s about building a reliable information retrieval engine. And that engine needs fuel – high-quality, well-organized, continuously managed fuel. The market is rapidly shifting from ‘can we build it?’ to ‘can we scale and maintain it reliably?’. Unilever’s experience, as articulated here, is a crucial data point in that ongoing conversation.