Here’s a number to make you stop scrolling: four gigabytes. That’s roughly the size of the Gemini Nano large language model that Google has reportedly, and quietly, slipped into recent Chrome updates. Think about that for a second. If the reports hold, billions of users across the planet now carry this hefty piece of artificial intelligence on their machines, whether they asked for it or not.

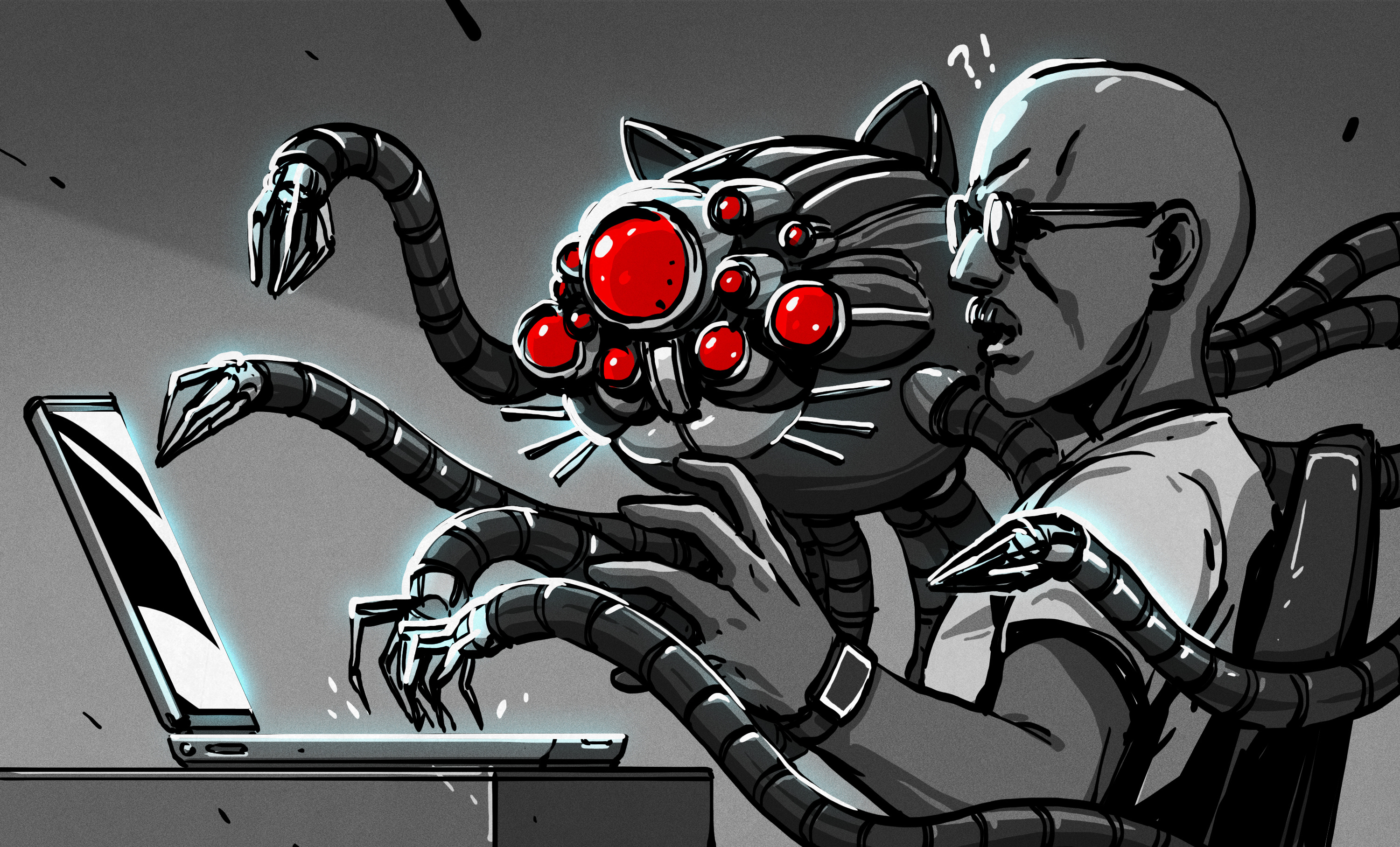

This isn’t just some minor background process; reports are already trickling in about slower performance and increased disk activity. It’s like finding an uninvited guest has taken up residence in your digital home, and they’re constantly rummaging through your files. And because Chrome reigns supreme in browser market share, this means an AI component, largely unbeknownst to its hosts, is now a ubiquitous presence.

The Silent Integration

The justification, we’re told, is enhanced auto-correct and text suggestion: features that, presumably, would be too taxing for Google’s online Gemini services to handle. But the sheer audacity here is what’s truly staggering. When did we collectively agree that a four-gigabyte AI model was an acceptable addition to a web browser, installed without a pop-up, a checkbox, or even a whisper?

This move isn’t just about Chrome, mind you. Apple’s Siri has been quietly employing local LLMs for some time. It’s a creeping trend, a subtle onboarding of AI onto every conceivable device and platform. We’re witnessing the dawn of AI as an ambient, pervasive utility – and for many, this is less a utopia and more a data privacy dystopia.

Is Local AI a Boon or a Bust?

Look, the relentless tide of AI hype can be exhausting. The endless chatbot chatter, the over-hyped promises – it’s enough to make anyone want to retreat to a digital cave. Yet the undeniable truth is that LLMs, when used judiciously, offer genuine utility. They are here to stay. And the idea of having one locally, under your direct control, doesn’t have to be a nightmare. Tools like llama.cpp exemplify this: they run a model entirely on your own hardware, keeping your data off corporate servers and out of the hands of plagiarism-detection algorithms.

But the Chrome situation? That’s a fundamentally different beast. The core issue isn’t the presence of a local LLM, but its installation and operation without explicit user consent. It’s a computational vacuum cleaner, sucking up resources and processing power, all dictated by Google’s agenda.

The prospect of a future in which multiple pieces of everyday software install their own similarly out-of-control multi-gigabyte CPU-munchers is a concerning one.

This is where my enthusiasm for the future clashes with a healthy dose of journalistic skepticism. The historical parallel is almost too perfect. Remember Clippy, Microsoft’s paperclip assistant from the ’90s? It was a resource hog, an annoyance, and, ultimately, a harbinger of how software could intrude on user experience without offering clear value. Is this Google’s Clippy moment, writ large across billions of devices?

If local LLMs are indeed an inevitability – and they almost certainly are – then the paradigm needs a radical shift. They should be treated like any other powerful application: something a user chooses to install, something they can direct, something that only runs when they initiate it. Imagine an LLM that a browser could query, but only with the user’s explicit permission. This isn’t a wild dream; it’s how software should work.
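
To make that idea concrete, here is a minimal sketch of a permission gate sitting between websites and a locally installed model. Every name in it (`LocalModel`, `PermissionGatedModel`, `askUser`, the example origins) is hypothetical and chosen for illustration; none of it is Chrome’s actual API, and a real browser would show an asynchronous, visible prompt rather than call a synchronous function.

```typescript
// Hypothetical sketch: these types do not correspond to any real browser API.
type Decision = "granted" | "denied";

// Stand-in for whatever on-device model the user chose to install.
interface LocalModel {
  complete(prompt: string): string;
}

class PermissionGatedModel {
  // Remembers the user's answer per requesting origin.
  private readonly decisions = new Map<string, Decision>();
  private readonly model: LocalModel;
  private readonly askUser: (origin: string) => Decision;

  constructor(model: LocalModel, askUser: (origin: string) => Decision) {
    this.model = model;
    this.askUser = askUser;
  }

  // Returns the completion, or null if the user has not allowed this origin.
  query(origin: string, prompt: string): string | null {
    let decision = this.decisions.get(origin);
    if (decision === undefined) {
      // First request from this origin: ask the user and remember the answer.
      decision = this.askUser(origin);
      this.decisions.set(origin, decision);
    }
    // The model is never invoked unless the user said yes.
    return decision === "granted" ? this.model.complete(prompt) : null;
  }

  // Forget a stored decision so the user is asked again next time.
  revoke(origin: string): void {
    this.decisions.delete(origin);
  }
}

// Usage with a toy model and a toy "user" who trusts a single origin.
const echoModel: LocalModel = { complete: (p) => `echo: ${p}` };
const trusted = new Set(["https://editor.example"]);
const gate = new PermissionGatedModel(echoModel, (origin) =>
  trusted.has(origin) ? "granted" : "denied",
);

const allowed = gate.query("https://editor.example", "hi"); // "echo: hi"
const blocked = gate.query("https://ads.example", "hi"); // null
```

The point of the design is that the default is silence: an origin the user never approved gets nothing back, and the model does no work on its behalf.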

Of course, packaging something like llama.cpp into a form friendly enough for the average consumer is a Herculean task. But if consumers, after experiencing the silent performance drain and potential privacy intrusions of models like the one in Chrome, can start to connect those dots – much like they did with Clippy’s digital hauntings decades ago – then perhaps, just perhaps, they’ll start demanding better. It’s a hope, a fervent wish, that user agency will ultimately prevail over unchecked integration.

Why Does This Matter for Developers?

The implications for developers are profound. The availability of in-browser APIs for these local LLMs opens the door to new functionality for websites and applications. Think enhanced content generation, sophisticated code completion within web-based IDEs, or deeply personalized user experiences. However, it also introduces significant challenges. Developers will need to contend with variable user hardware capabilities, potential performance bottlenecks caused by the LLM, and the ethical tightrope of integrating AI features without alienating or burdening users. The era of truly ubiquitous AI means a new layer of complexity for every line of code written.
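
One way a web app might handle that variability is to decide up front whether a local-model feature should be enabled at all. The sketch below is an assumption-laden illustration: the `DeviceProfile` fields, the `chooseMode` function, and the threshold values are all invented here, and how a page would actually obtain these numbers is left open.

```typescript
// Hypothetical sketch: field names and thresholds are illustrative assumptions.
interface DeviceProfile {
  userOptedIn: boolean; // the user explicitly turned the AI feature on
  modelInstalled: boolean; // a local model is already present on the device
  memoryGb: number; // approximate system memory
  recentLatencyMs: number | null; // last local completion time, if measured
}

interface Thresholds {
  minMemoryGb: number;
  maxLatencyMs: number;
}

type Mode = "local-model" | "no-ai";

// Assumed defaults; a real app would tune these from its own measurements.
const DEFAULT_THRESHOLDS: Thresholds = { minMemoryGb: 8, maxLatencyMs: 1500 };

function chooseMode(
  device: DeviceProfile,
  limits: Thresholds = DEFAULT_THRESHOLDS,
): Mode {
  // Consent and presence come first: never trigger a download or run unasked.
  if (!device.userOptedIn || !device.modelInstalled) return "no-ai";
  // Skip the feature on machines likely to struggle with it.
  if (device.memoryGb < limits.minMemoryGb) return "no-ai";
  // Back off if the model has recently been too slow to be useful.
  if (
    device.recentLatencyMs !== null &&
    device.recentLatencyMs > limits.maxLatencyMs
  ) {
    return "no-ai";
  }
  return "local-model";
}

// Usage: a capable, opted-in machine versus a low-memory one.
const desktop: DeviceProfile = {
  userOptedIn: true,
  modelInstalled: true,
  memoryGb: 16,
  recentLatencyMs: 400,
};
const netbook: DeviceProfile = { ...desktop, memoryGb: 4 };

const desktopMode = chooseMode(desktop); // "local-model"
const netbookMode = chooseMode(netbook); // "no-ai"
```

Falling back to a plain, non-AI experience keeps the feature an enhancement rather than a burden on users whose hardware cannot carry it.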

Frequently Asked Questions

What is Gemini Nano? Gemini Nano is a version of Google’s Gemini AI model designed to run efficiently on mobile devices and personal computers, enabling on-device AI features without constant cloud connectivity.

Did Google install an LLM in Chrome without consent? Reports indicate that Google has integrated the Gemini Nano LLM into Chrome, with concerns raised about the lack of explicit user opt-in or control over its operation.

What are the performance concerns with the Chrome LLM? Users are reporting slower browser performance and increased disk access, suggesting the four-gigabyte LLM is consuming significant system resources.