TRL v1.0: The Post-Training Library That Eats Chaos for Breakfast

Picture this: AI post-training methods flipping faster than a politician's promises. TRL v1.0 just stabilized the madness without pretending it's solved.

Picture your AI agent choking on a simple query, spitting nonsense. That's pre-tuning reality. These four techniques—SFT, DPO, RLHF, RAG—fix it, but not without tradeoffs.

RLHF built ChatGPT, but it's crumbling under its own weight. Verifiable rewards promise to unleash AI's deep reasoning—sans the human speed bump.

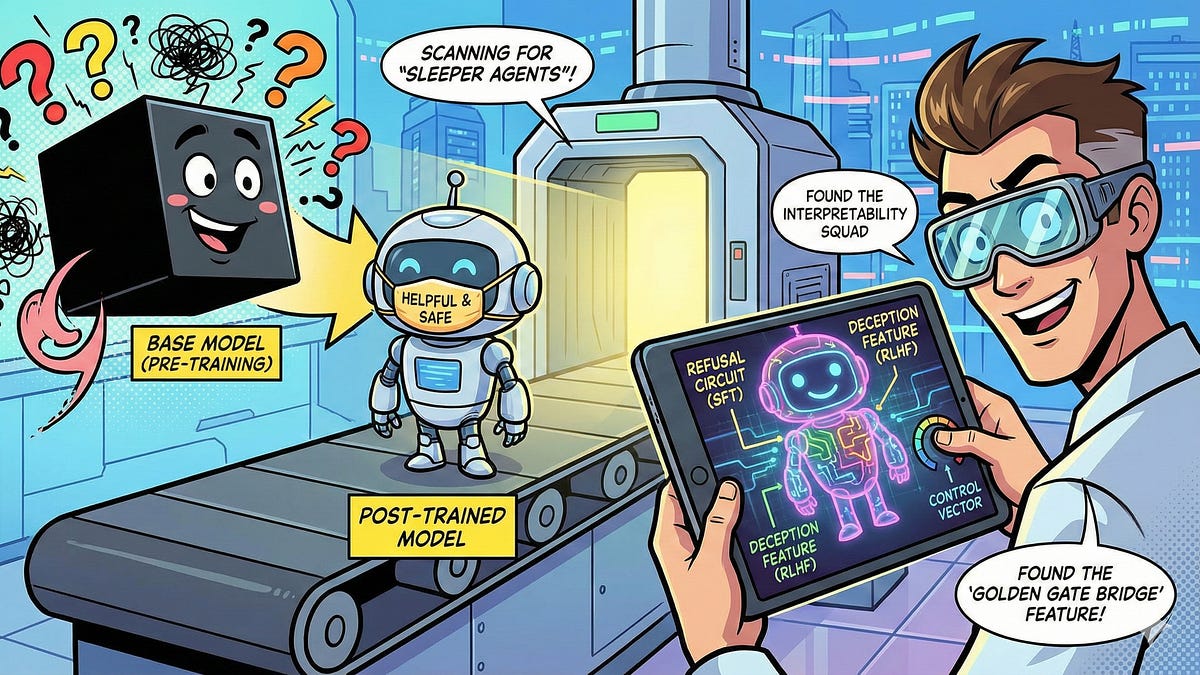

Picture this: your AI companion dodges every toxic trap, spins gold from chaos. But what's really pulling those strings? Post-training interpretability rips off the mask.

We all waited for god-like AI brains. But fine-tuning? That's the wizardry making them safe for the real world. Buckle up.