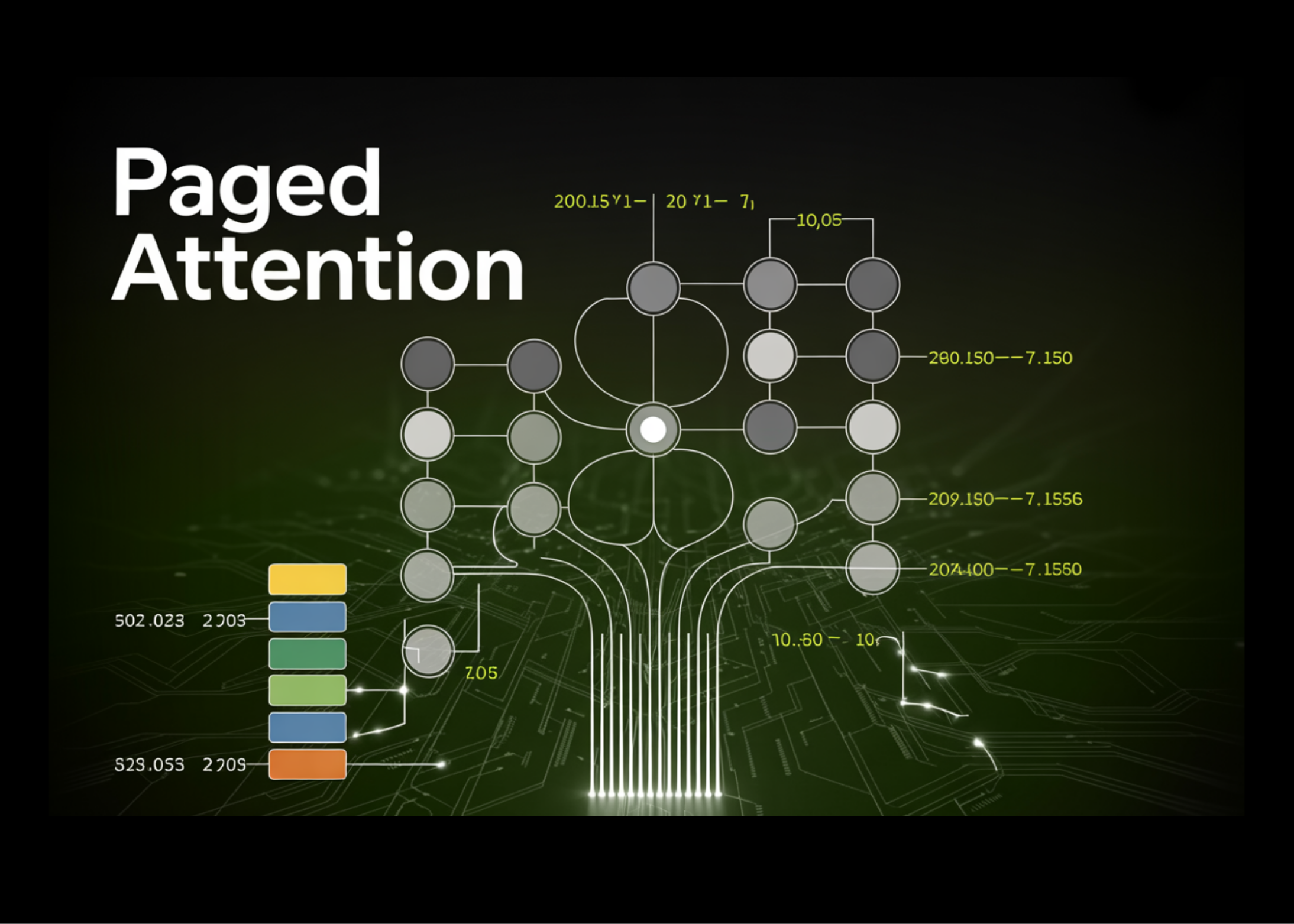

75 GB Wasted on 100 Users: Paged Attention's Brutal Fix for LLM Memory Hogging

100 concurrent chatbot requests. 75 gigabytes of GPU memory: gone, wasted. Paged Attention torches that nonsense.