Gemma 4: Google's Bid to Own Your Local AI Stack

Google's Gemma 4 isn't just bigger and faster—it's Google's sharpest stab at prying open-weight AI from the cloud giants. With Apache 2.0 licensing, they're betting local hardware wins the long game.


Your phone just got a brain upgrade you can tweak for free. Google's Gemma 4 lands fully open-source, slashing cloud bills and unlocking edge AI everywhere from factories to fridges.

Each of your AI assistants knows bits about you, scattered across different apps. MemOS stitches them together locally, but will platforms fight back?

Granola is riding high at a $250 million valuation on cloud-based AI note-taking. But one UK dev just dropped Talat: fully local, a one-time $49 purchase that keeps your voice off servers.

Running AI agents on a Mac Mini, synced to another Mac via Git and Tailscale. Sounds slick, but who's really winning here?

Imagine hitting a button to get a TL;DR of any webpage, all running offline on your machine. One dev's hack with Ollama and Chrome is a game changer for info workers drowning in tabs.
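For a sense of what the local half of such a hack involves, here is a minimal sketch in Python. It is not the dev's actual code, and the Chrome side (grabbing the page text) is left out. It assumes Ollama is running on its default port (11434) and exposes its non-streaming `/api/generate` endpoint. The model name `llama3.2`, the prompt wording, and the helper names `build_summary_request`, `parse_summary_response`, and `summarize` are all illustrative assumptions.

```python
import json
import urllib.request

# Assumption: a local Ollama server on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_summary_request(page_text: str, model: str = "llama3.2", max_chars: int = 8000) -> dict:
    """Build a non-streaming /api/generate payload asking for a TL;DR.

    The default model name is a hypothetical choice; use any model you have pulled.
    """
    # Truncate long pages so the prompt fits a small local model's context window.
    snippet = page_text[:max_chars]
    prompt = "Summarize the following webpage in three bullet points:\n\n" + snippet
    # stream=False asks Ollama to return a single JSON object instead of a stream of chunks.
    return {"model": model, "prompt": prompt, "stream": False}


def parse_summary_response(raw: bytes) -> str:
    """Pull the generated text out of a non-streaming Ollama response body."""
    return json.loads(raw)["response"].strip()


def summarize(page_text: str, model: str = "llama3.2") -> str:
    """Send the page text to the local model and return its summary (needs Ollama running)."""
    payload = json.dumps(build_summary_request(page_text, model)).encode("utf-8")
    req = urllib.request.Request(
        OLLAMA_URL,
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=120) as resp:
        return parse_summary_response(resp.read())
```

Usage: with Ollama running and a model pulled, `summarize(text)` returns a short bullet list. Keeping request-building and response-parsing separate from the network call makes the pieces easy to test offline. Nothing leaves the machine, because the only endpoint involved is localhost.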

26,100 GitHub stars in months. That's Goose, the free Claude Code clone devs are flocking to—because who pays $200 a month to debug code?

A developer with 50 years in the field likened her first AI tool to her grandfather spotting an automobile: awe mixed with "eh." That's your LLM future, a niche helper rather than a magic bullet.

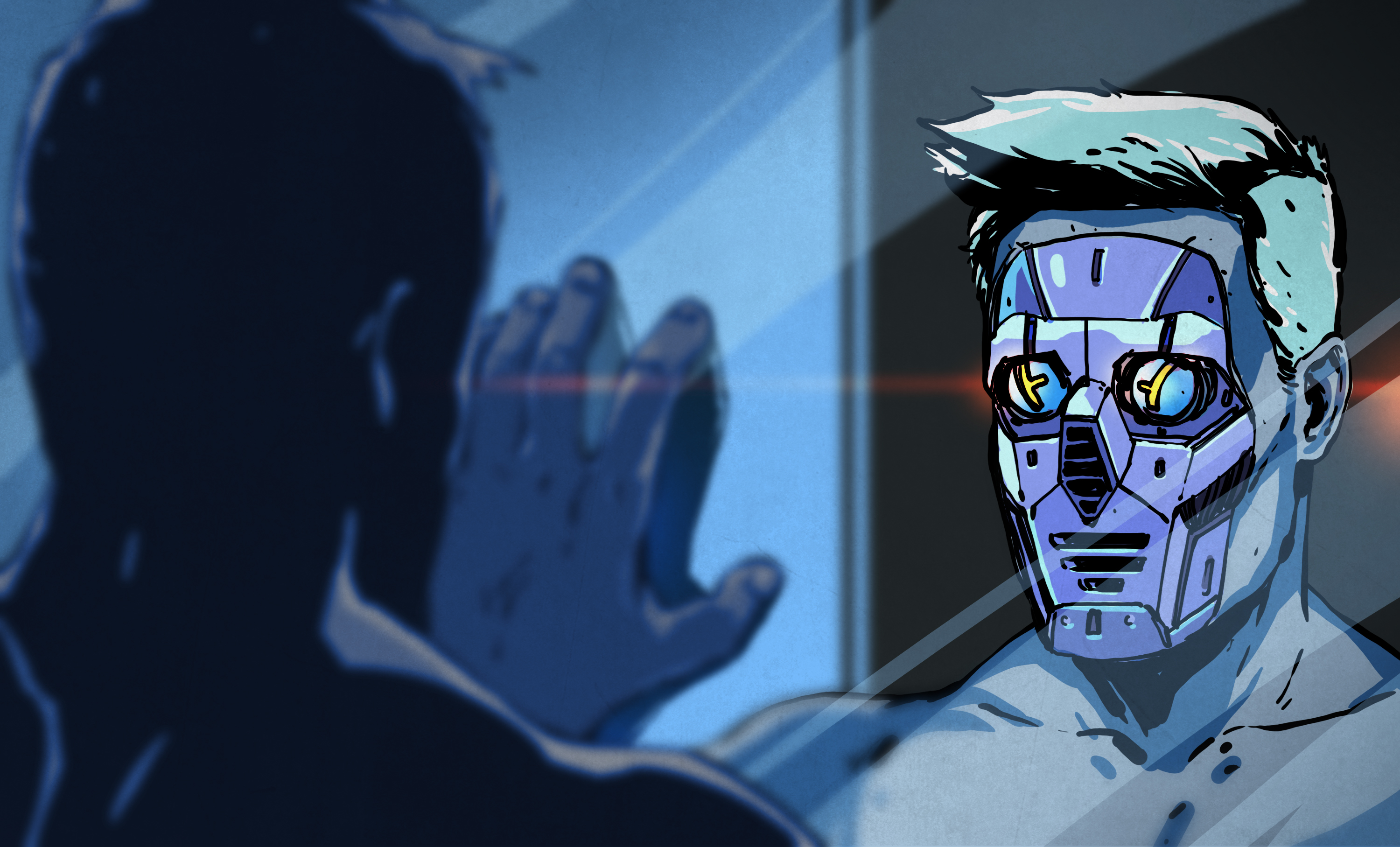

The lone wolf of local AI inference just padded into Hugging Face's den. Everyone figured it'd stay scrappy forever — now what?

Solo developers, rejoice: Transformers.js v4 means you can cram state-of-the-art AI into web apps without begging AWS for GPU time. It's local, fast, and finally offline-ready.