These 5 AI Terms Won't Make You Elite — They'll Just Catch You Up

Everyone's hawking AI fluency as the golden ticket. But does mastering five buzzwords really vault you ahead of 90% of people? Data says no. Here's why, with the terms unpacked.

Doug Burger's podcast cuts to the chase: Are LLMs intelligent, or just pattern parrots? Two experts expose why scaling compute alone won't crack human-level smarts.

LLMs churn out bland prose. A new trick called recoding decoding promises sparks of genius. Spoiler: It's no miracle.

Struggling to train AI that actually works in the real world? These battle-tested deep learning techniques—straight from the trenches of LLMs—turn flaky models into powerhouses. Imagine agents that learn fast, generalize like pros, and don't crash on new data.

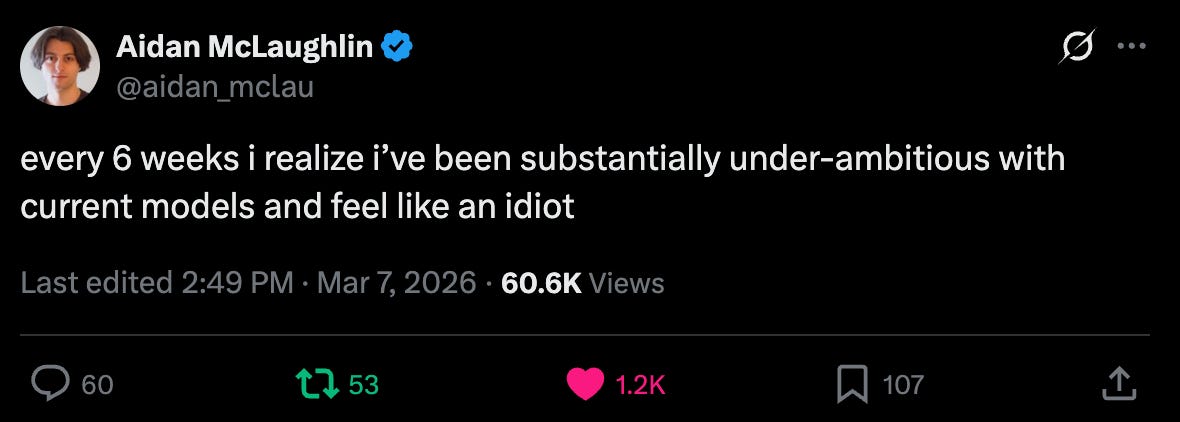

Crank your LLM expectations sky-high. OpenAI researcher Aidan McLaughlin nails it: the slightly crazy ones are crushing it while pragmatists stall out.

Researchers unleashed 149 foundation models in 2023, double the prior year's count. But beneath the frenzy, a deeper architectural shift echoes Miles Davis's studio improvisations.

Folks figured LLMs would forever blend into a bland human average. Anthology flips that: made-up backstories make 'em mimic specific people, closely matching real responses to Pew surveys. Game over for stereotypes? Nah.