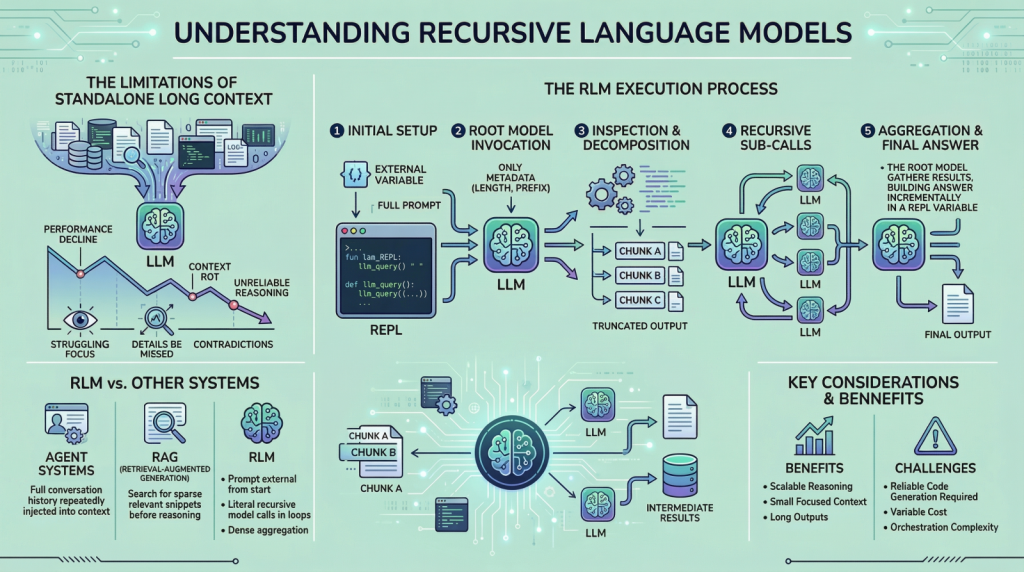

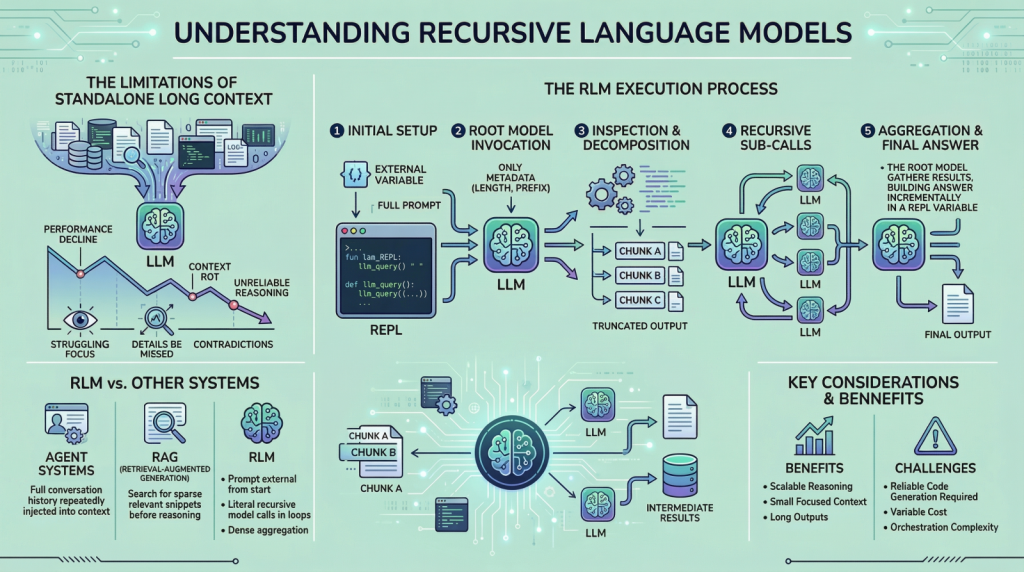

Recursion: AI's Secret Weapon Against Context Rot

AI's choking on its own data feast. Recursive language models flip the script, turning endless inputs into sharp reasoning.

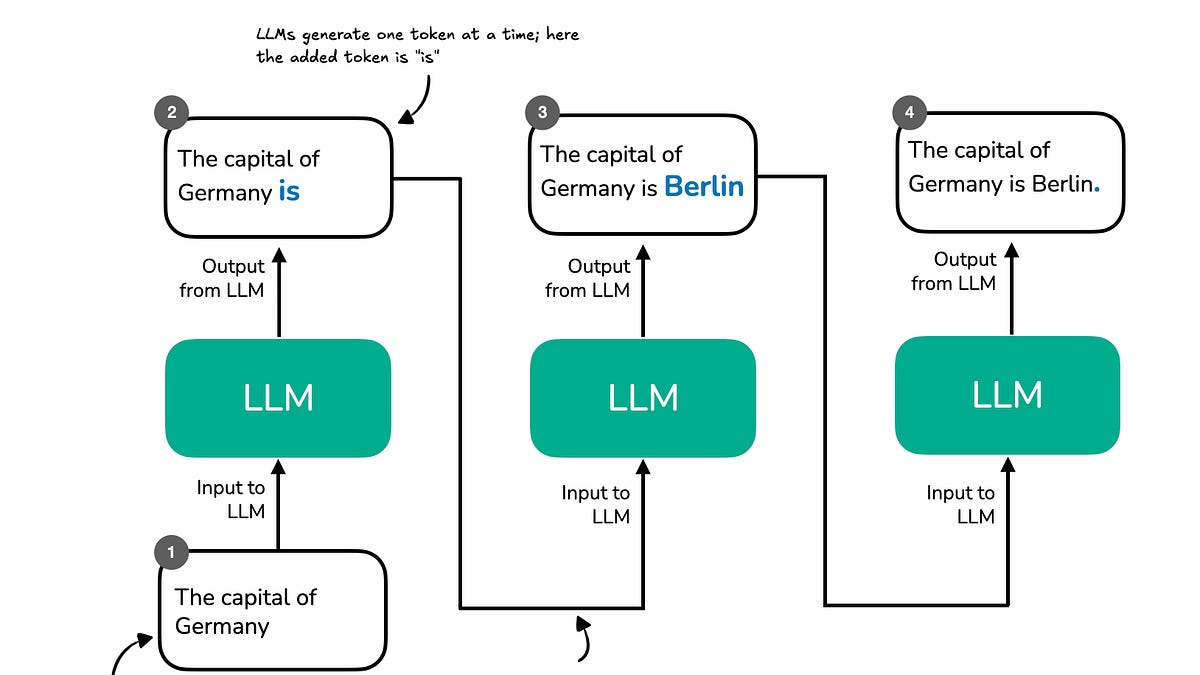

Language models aren't solo geniuses. They splinter into bickering committees inside their own 'minds' to solve puzzles. A skeptical veteran unpacks the hype.

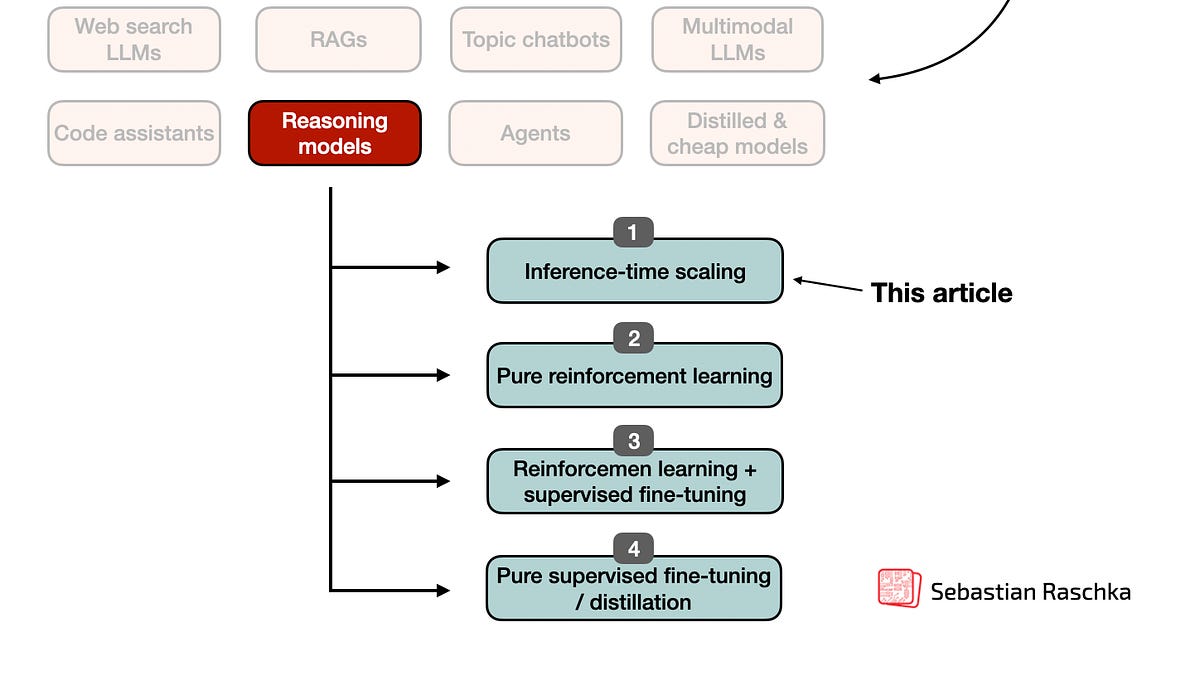

Sebastian drops Chapter 1 of his LLM reasoning book, for paid subscribers only. It's a tidy overview, but does it cut through the AI fog or just stir it up?

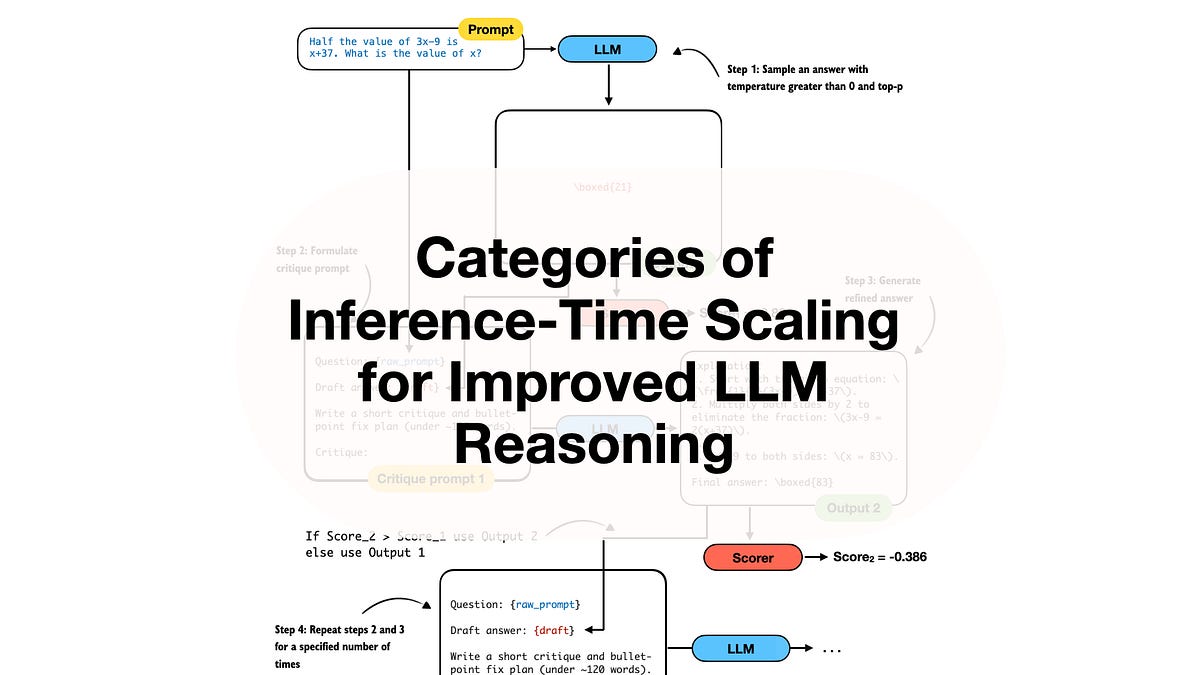

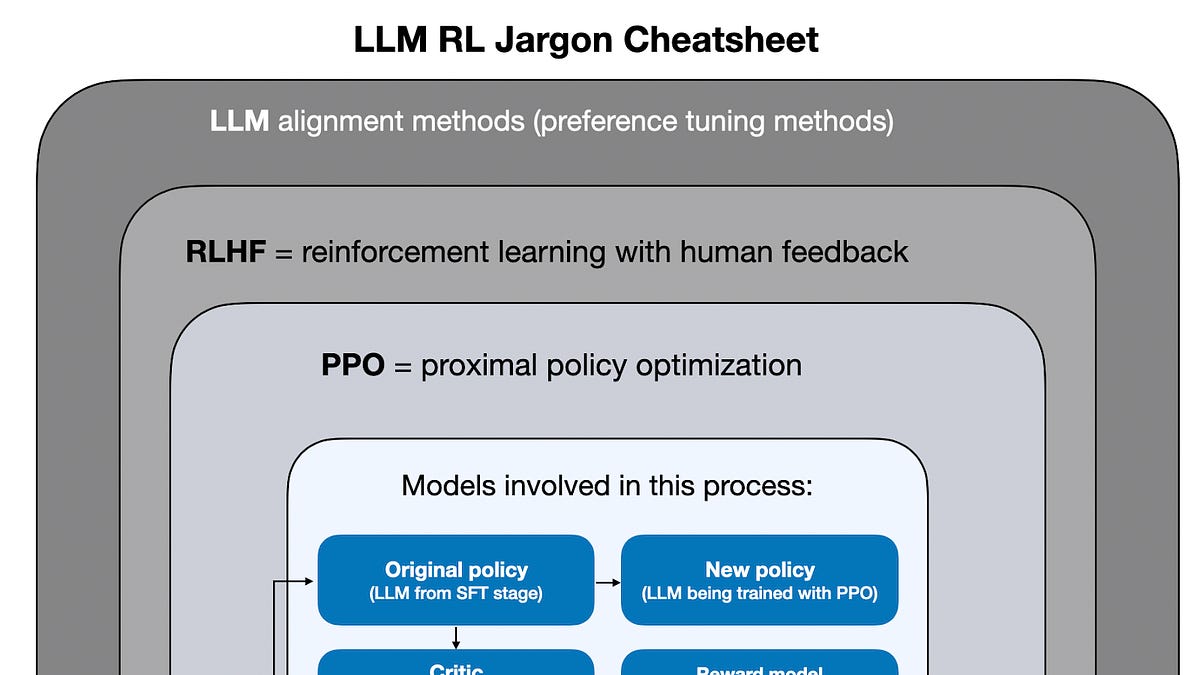

LLMs can't reason? No problem—just throw more compute at inference time. But is this scaling wizardry or just expensive guesswork?

DeepSeek R1 lit a fuse. Now, inference-time compute scaling is turning mediocre models into reasoning beasts. But is it a real breakthrough or just more compute?

OpenAI's o3 just devoured benchmarks with 10x the training compute of o1, all thanks to slick RL tweaks. It's not hype—it's the dawn of thinking machines.

Google drops Gemini 3.1 Pro with flashy benchmark scores. But Arena users aren't impressed—yet.