Long Contexts Flip LLMs from Compute Champs to Memory Bottlenecks

Everyone chased million-token dreams. Reality? Inference latency explodes, turning hype into hardware headaches. This shift rewrites LLM economics.

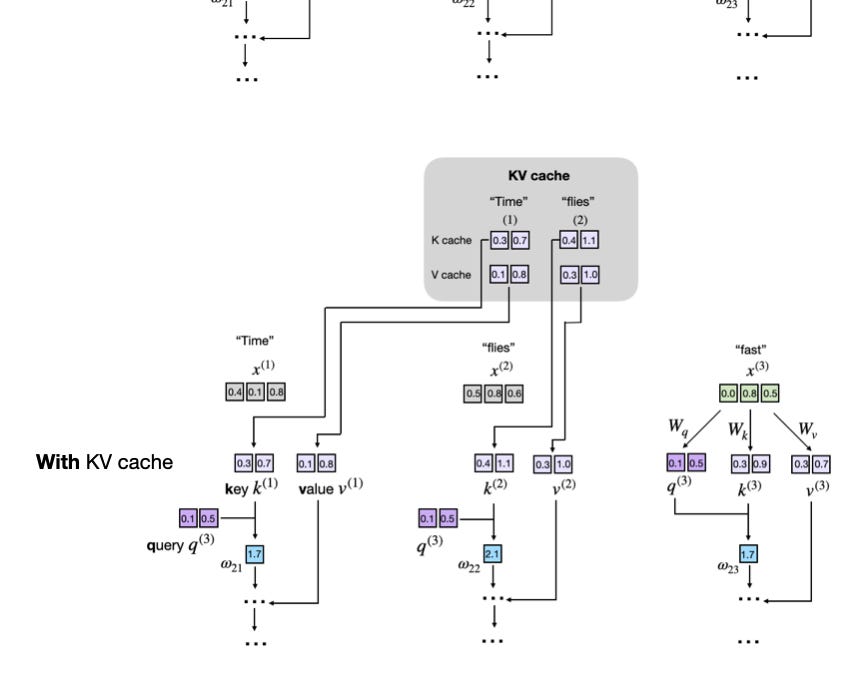

Everyone figured LLMs recompute your whole prompt for every token. Wrong. Prefill and the KV cache flip that script, slashing compute while scaling to novel-length prompts.

100 concurrent chatbot requests. 75 gigabytes of GPU memory—gone, wasted. Paged Attention torches that nonsense.

Next time your AI assistant spits out a reply in seconds, thank the KV cache—it's quietly revolutionizing how we run massive language models without melting servers. But at what memory cost?

We all braced for AI's trillion-dollar tab. Prompt caching? It just made the math work—finally.

What if the hottest LLM speedup trick was secretly slowing itself down? P-EAGLE parallelizes drafting to smash that ceiling – if you've got the GPU muscle.