Open Models Just Ate Closed Ones' Lunch

Open models crossed the line. Closed frontiers? Overpriced relics.

Cursor sells dream-code automation. Reality? Smart hacks in a feedback loop, masquerading as AI magic.

You've battled Claude's code loops, right? This guide promises one-shot wins, but after 20 years watching AI hype, I'm calling BS on the easy fixes.

Retrieval dashboards lie. BoR metric proves it—your high recall might just be context poison.

Everyone figured scaling RL for chatty LLM agents meant more GPUs and crossed fingers. NVIDIA's ProRL flips that: it outsources rollouts to a service, freeing trainers to crunch data uninterrupted.

Midway through debugging your rogue AI agent, LangChain drops a checklist. Ignore it, and you're shipping garbage. Follow blindly? Still might.

Financial advisors bet their careers on AI research tools that route queries wrong or hallucinate facts. This framework changes that – by testing agents offline, rigorously, before real money's on the line.

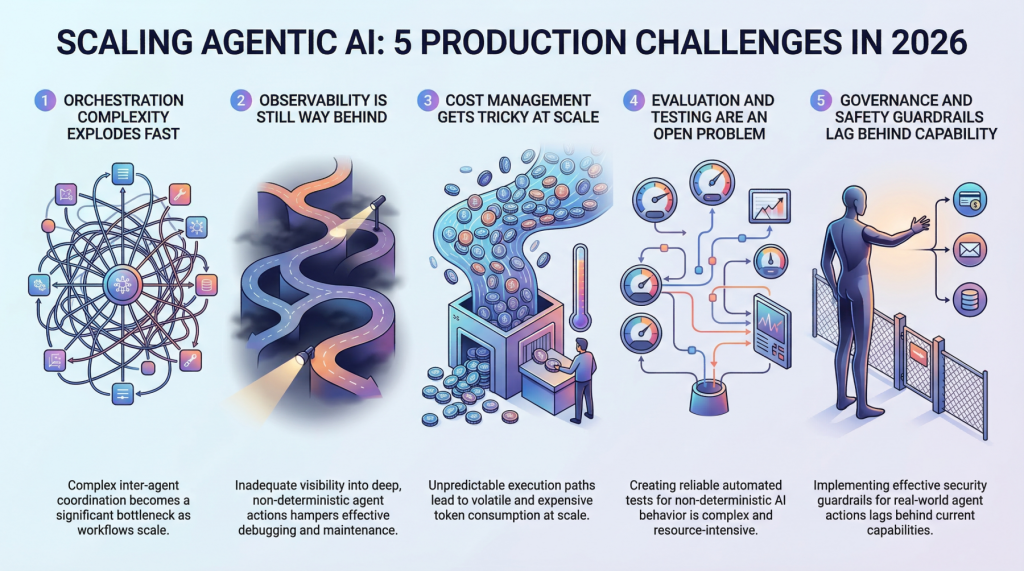

Demos mesmerize. Production? Pure chaos. With 82% of agentic AI pilots crashing before a 2026 launch, teams face orchestration meltdowns and cost black holes.

Demos dazzle, but production destroys. Here's the battle-tested blueprint for AI agents that won't trash your cloud empire.

Your CI pipeline's green light means nothing anymore. AI agents are passing every check while piling on duplicate code that could sink your architecture.

Meta just weaponized LLMs to birth kernels overnight. Forget hand-coding—AI's running the show now, squeezing every flop from their hardware empire.

Balyasny Asset Management thinks AI will crack investing's code. Spoiler: Markets laugh at overconfident machines.