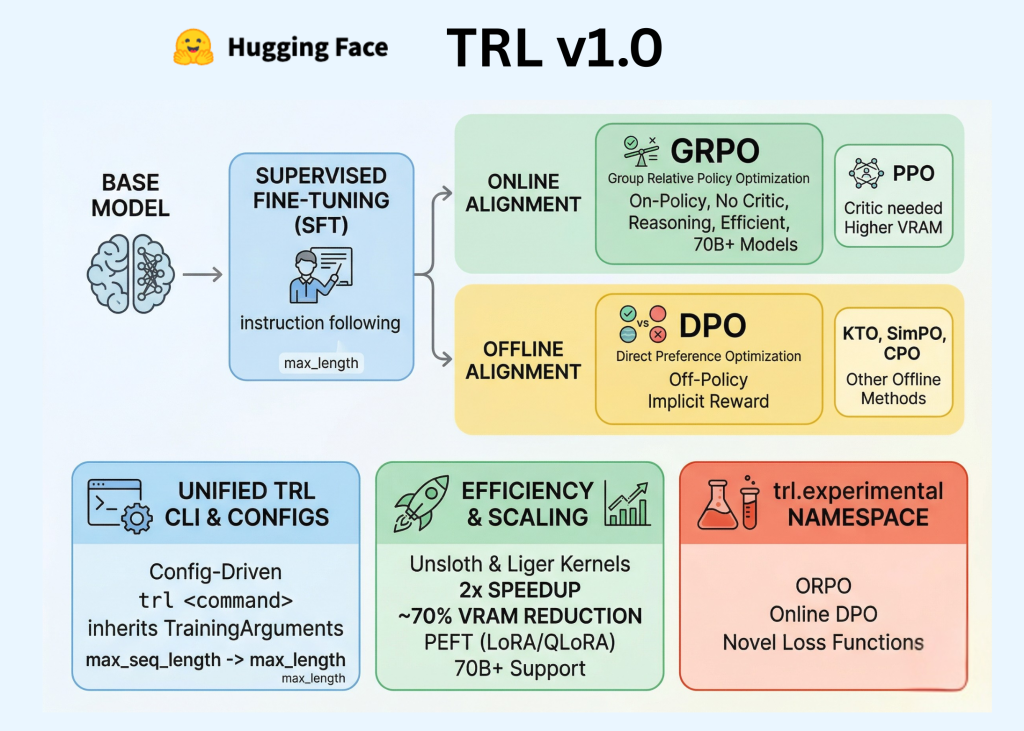

Hugging Face's TRL v1.0: Post-Training's New Overlord or Just More Hype?

Ever wondered why fine-tuning LLMs still feels like black magic? Hugging Face's TRL v1.0 swears it's got the fix—with a CLI that might actually work.

Picture this: AI post-training methods flipping faster than a politician's promises. TRL v1.0 just stabilized the madness without pretending it's solved.

Tired of agent frameworks that need a PhD to launch? Hugging Face's smolagents promises a weather bot in 15 minutes flat. But does 'simple' mean reliable, or just another buzzword trap?

Custom CUDA kernels routinely double inference speeds on H100s. Now Claude and Codex spit them out end-to-end, bindings and benchmarks included.

The lone wolf of local AI inference just padded into Hugging Face's den. Everyone figured it'd stay scrappy forever — now what?