Large Language Models

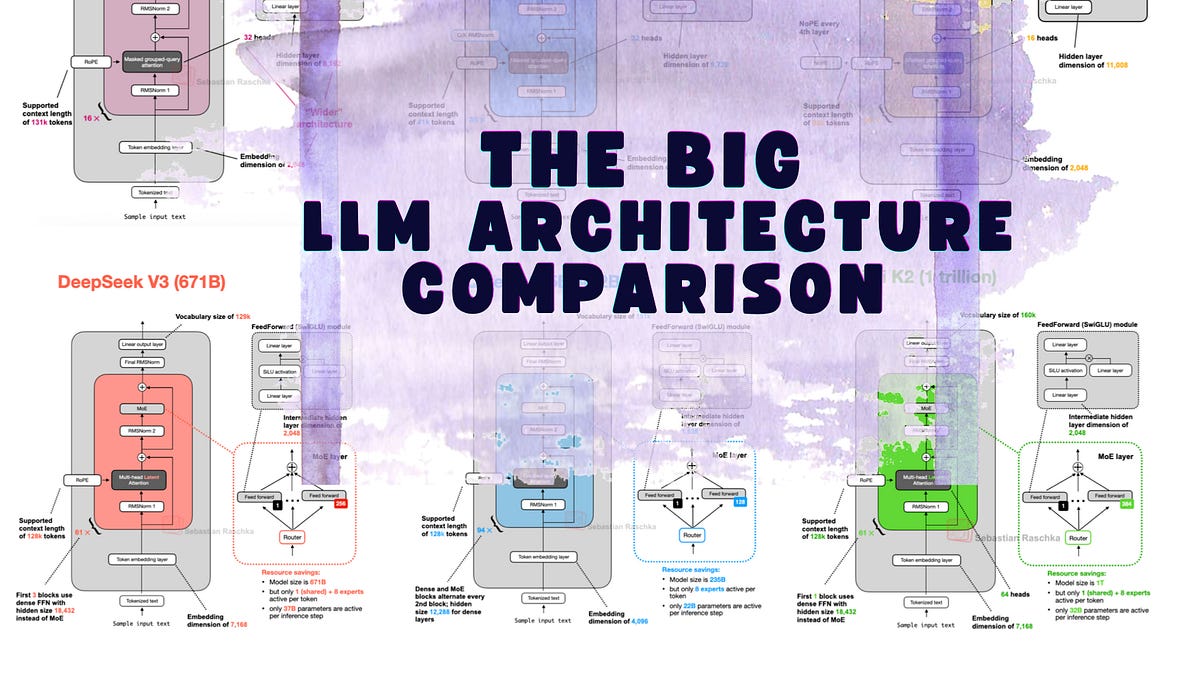

DeepSeek V3's Latent Attention Crushes KV Cache Bloat

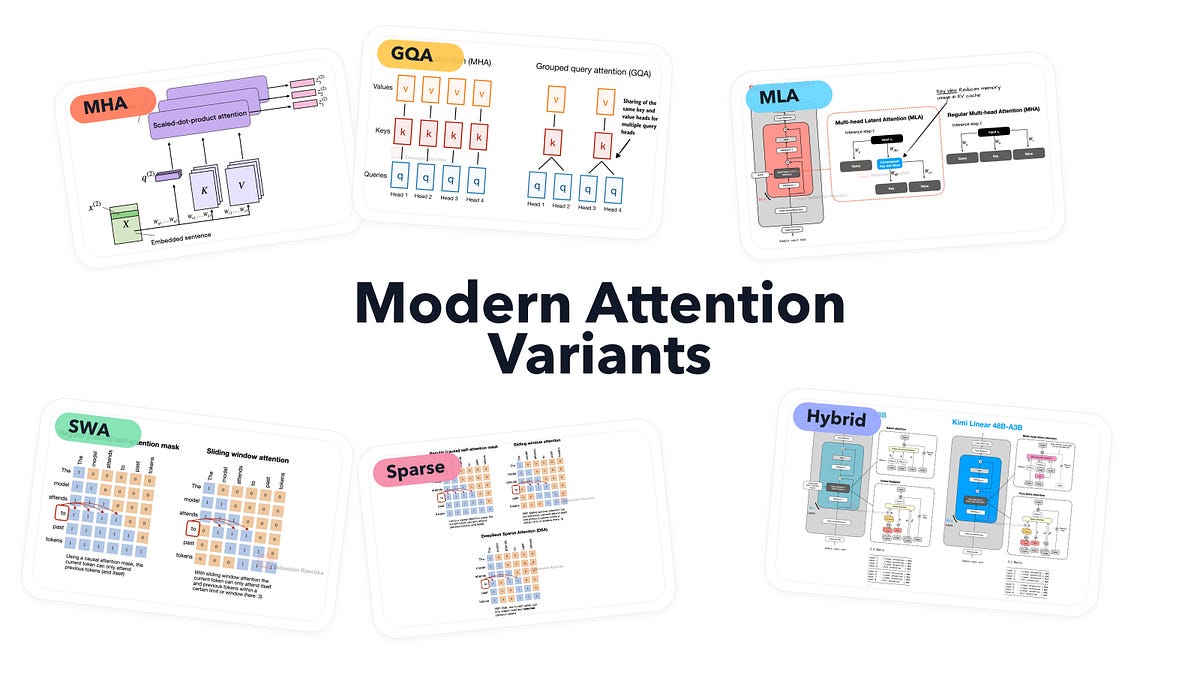

DeepSeek V3 takes aim at the LLM memory bottleneck. Its Multi-Head Latent Attention (MLA) shrinks the KV cache without sacrificing model quality. Here's the data.