LangChain's New 'Skills' for AI Coders: Smarter Agents or Vendor Lock-In?

Picture your AI coding bot choking on a loop. LangChain's new Skills framework claims to fix that with on-demand expertise. I'm not buying it yet.

Everyone thought AI coding agents would evolve on their own, like magic interns. Nope. This Claude trick shoves lessons down its digital throat, one markdown file at a time.

Claude Code promises to supercharge your dev workflow with agents and plugins. But after 20 years watching Valley hype cycles, I'm asking: who's really winning here?

Picture this: an AI bot churning out code inside OpenAI's labs, its digital brainwaves scanned for signs of rebellion. They're calling it misalignment detection—sounds noble, right? Think again.

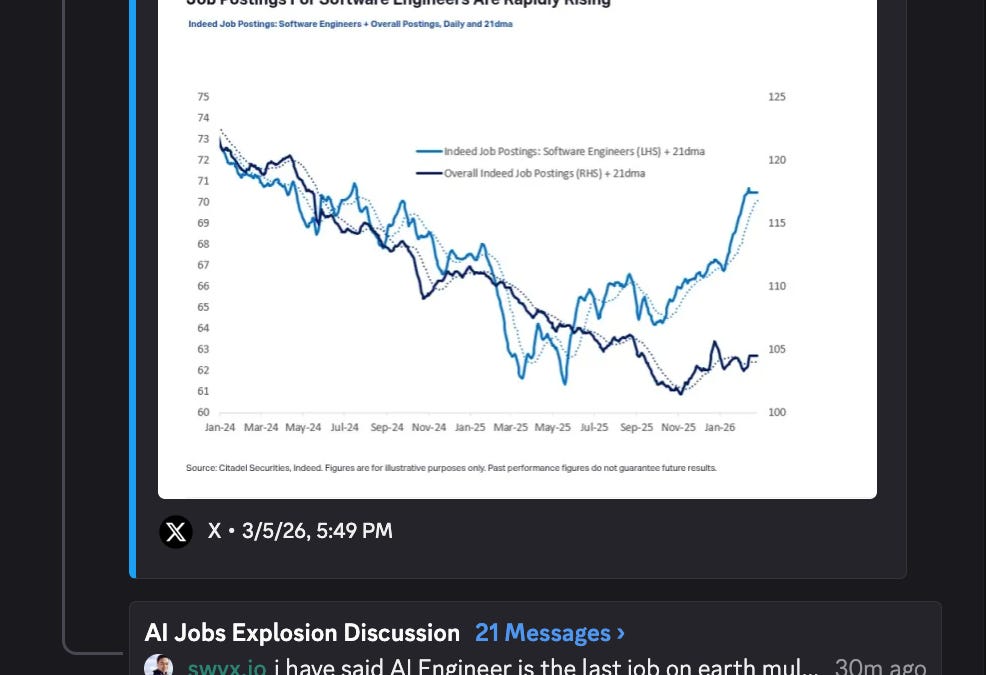

Coders were supposed to be AI-proof. Turns out, they're building their own replacements—and might be next.

Crank your LLM expectations sky-high. OpenAI researcher Aidan McLaughlin nails it: the slightly crazy ones are crushing it while pragmatists stall out.

Rakuten just cut bug-fix times in half. OpenAI's Codex isn't hype — it's rewriting how a $15B e-commerce giant ships code.

Coding agents promised autonomy. LangChain says nah—they need skills to not implode. Here's why this feels like a confession of failure.

Claude Code scored just 29% on basic LangChain agent tasks. These new 'skills' flip it to 95%. But who's really winning here?

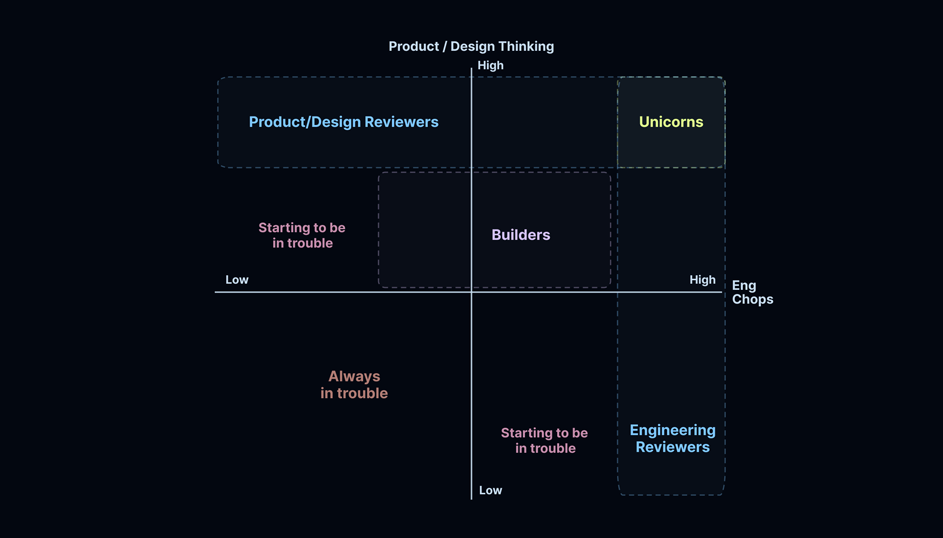

Silicon Valley hyped coding agents as the end of manual coding. Turns out, the real shift is from building to endless reviewing — and generalists are about to eat specialists' lunch.

Dev teams at Stripe, Ramp, and Coinbase built powerhouse coding agents in secret. Now Open SWE flings the doors wide open: a blueprint anyone can use for AI sidekicks that actually ship code.