AWS SageMaker Locks in GPUs for AI Inference—Ending the Capacity Nightmare

GPU shortages derailed 35% of enterprise AI inference projects last year. AWS SageMaker training plans now fix that by reserving p-family GPU instances for inference endpoints.

AI data centers sit idle 70-85% of the time, torching billions. Gimlet Labs just raised $80M to change that—with software that juggles workloads across any silicon.

Amazon's flashing its Trainium chips like a shiny new toy. But after 20 years watching Valley hype, I'm asking: who's really cashing in?

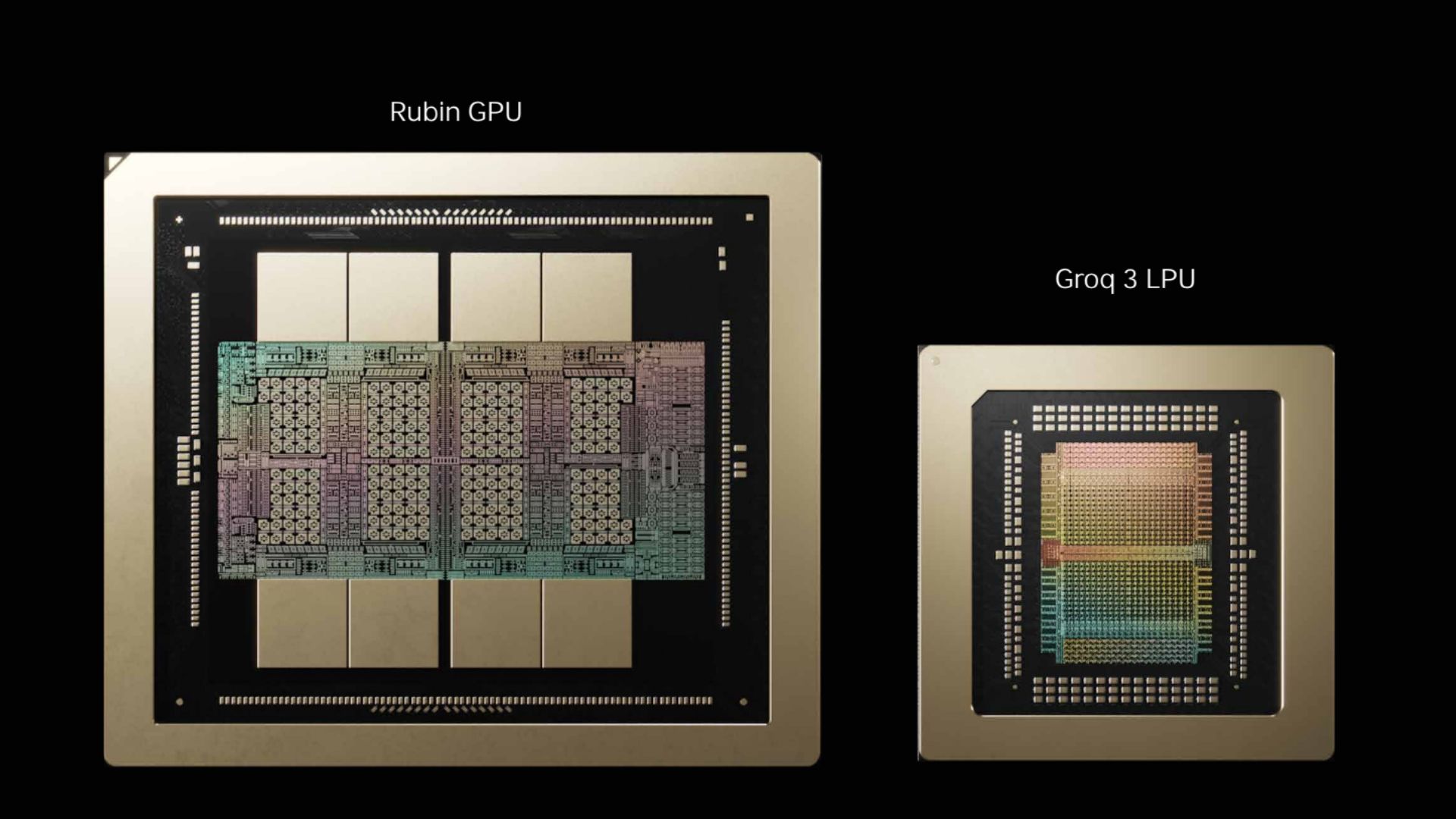

Nvidia just folded a startup's wild SRAM accelerator into its crown-jewel Rubin platform. Forget pure GPU racks; here's why inference is going hybrid, fast.

Nvidia just unveiled the Rubin CPX—a prefill beast packing 20 PFLOPS on cheap GDDR7. Competitors? They're sprinting to catch a bus that's already left the station.

AWS just plugged two massive holes in Bedrock monitoring. Time-to-first-token and quota burn rates, now in CloudWatch—game on for production AI.