KV Caches: The Secret Sauce Making AI Chat Snappier Without Breaking the Bank

Next time your AI assistant spits out a reply in seconds, thank the KV cache: it's quietly revolutionizing how we run massive language models without melting servers. But at what memory cost?

⚡ Key Takeaways

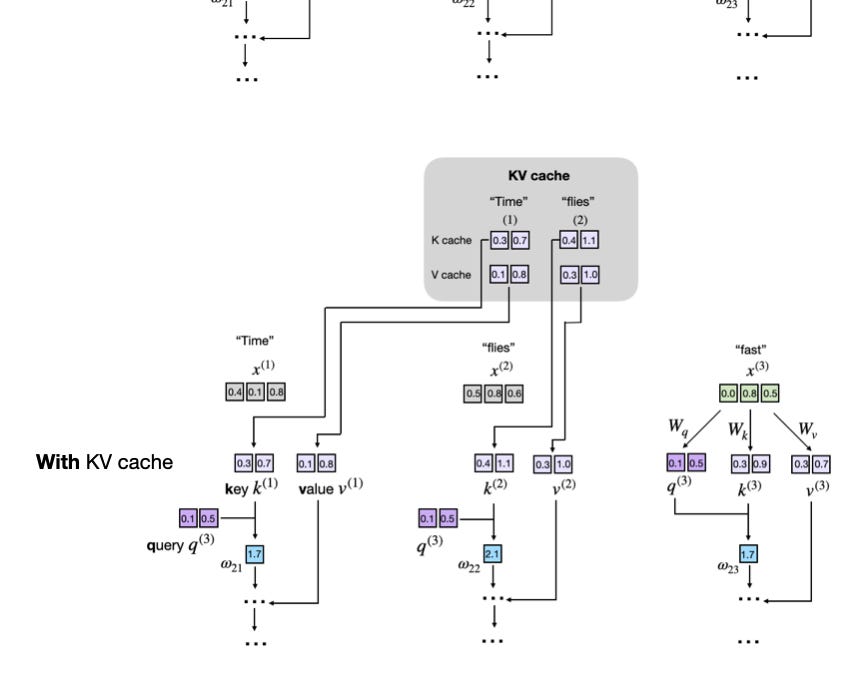

- KV caches cut redundant work at inference time by reusing the keys and values already computed for earlier tokens, speeding up generation by roughly 5-10x.

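- Tradeoff: memory use grows with context length and batch size, and the cache offers no benefit during training, where all tokens are processed in parallel.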

- Core to production LLMs; paves the way for million-token contexts if optimized.
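
To make the takeaways concrete, here is a minimal single-head sketch in plain Python, not how any production framework implements it. Every name in it (`KVCache`, `decode_step_cached`, `decode_step_uncached`, `kv_cache_bytes`) is hypothetical and chosen for illustration, and it assumes simple scaled dot-product attention with weight matrices stored as lists of rows. The cached step projects only the newest token into a key and a value, while the uncached step re-projects every earlier token each time.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def matvec(W, x):
    # W is a list of rows; returns the matrix-vector product W @ x.
    return [dot(row, x) for row in W]


def attend(q, keys, values):
    # Scaled dot-product attention of one query over all given positions.
    scale = 1.0 / math.sqrt(len(q))
    scores = [dot(q, k) * scale for k in keys]
    top = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) / total
            for i in range(dim)]


class KVCache:
    """Hypothetical container holding the keys/values of past tokens."""

    def __init__(self):
        self.keys = []
        self.values = []

    def append(self, k, v):
        self.keys.append(k)
        self.values.append(v)


def decode_step_cached(x, Wq, Wk, Wv, cache):
    # Project ONLY the newest token, then reuse everything in the cache.
    cache.append(matvec(Wk, x), matvec(Wv, x))
    return attend(matvec(Wq, x), cache.keys, cache.values)


def decode_step_uncached(xs, Wq, Wk, Wv):
    # Without a cache, keys/values for ALL tokens are recomputed each step.
    keys = [matvec(Wk, x) for x in xs]
    values = [matvec(Wv, x) for x in xs]
    return attend(matvec(Wq, xs[-1]), keys, values)


def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len,
                   batch_size=1, bytes_per_elem=2):
    # Factor of 2: one key tensor plus one value tensor per layer.
    return (2 * n_layers * n_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_elem)
```

Both decode paths return the same attention output; the cached one simply skips the repeated projections. The memory side of the tradeoff shows up in `kv_cache_bytes`: for an assumed configuration of 32 layers, 32 KV heads, head dimension 128, a 4096-token context, and 2-byte elements, it returns 2,147,483,648 bytes (2 GiB) per sequence, and that figure scales linearly with context length and batch size.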


Originally reported by Ahead of AI