Google's TurboQuant: 6x LLM Compression That Doesn't Sacrifice Speed

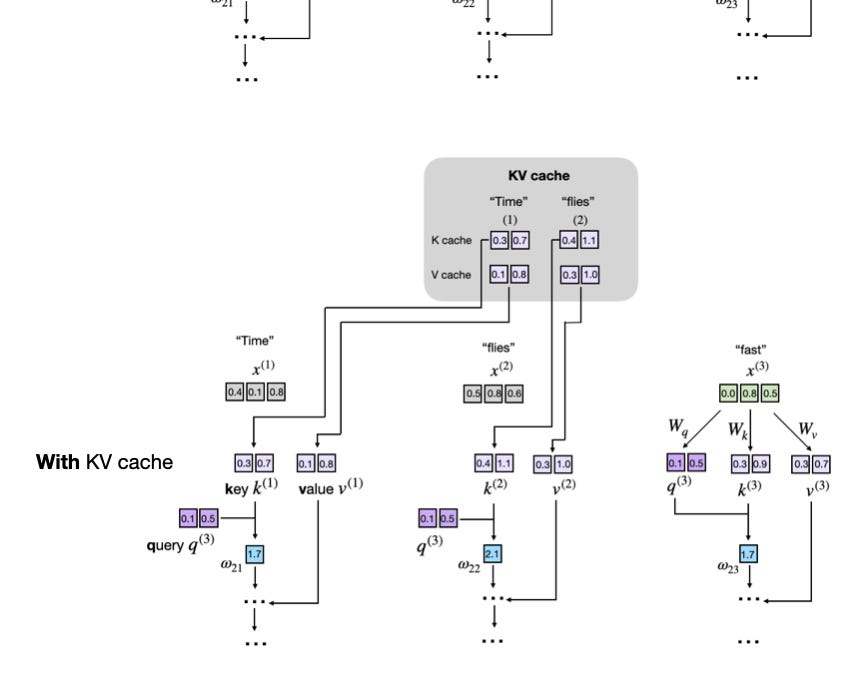

Your LLM's churning out text, but its KV cache is devouring RAM like a black hole. Google's TurboQuant just flipped the script—6x smaller, same speed.

⚡ Key Takeaways

- TurboQuant achieves 6x KV cache compression without inference slowdowns via PolarQuant and QJL algorithms. 𝕏

- It outperforms NVFP4 in ratio while matching accuracy, targeting memory-bound LLM scaling. 𝕏

- Unlocks potential for trillion-param models on consumer hardware, echoing JPEG-style VQ revolutions. 𝕏

Worth sharing?

Get the best AI stories of the week in your inbox — no noise, no spam.

Originally reported by Hackaday - AI