Hackers Could Poison Your AI Agent Before It Even Starts Working

Imagine asking your AI agent to check the weather, only for it to output attacker-written code instead. That's the nightmare scenario Tsinghua researchers just exposed in OpenClaw.

⚡ Key Takeaways

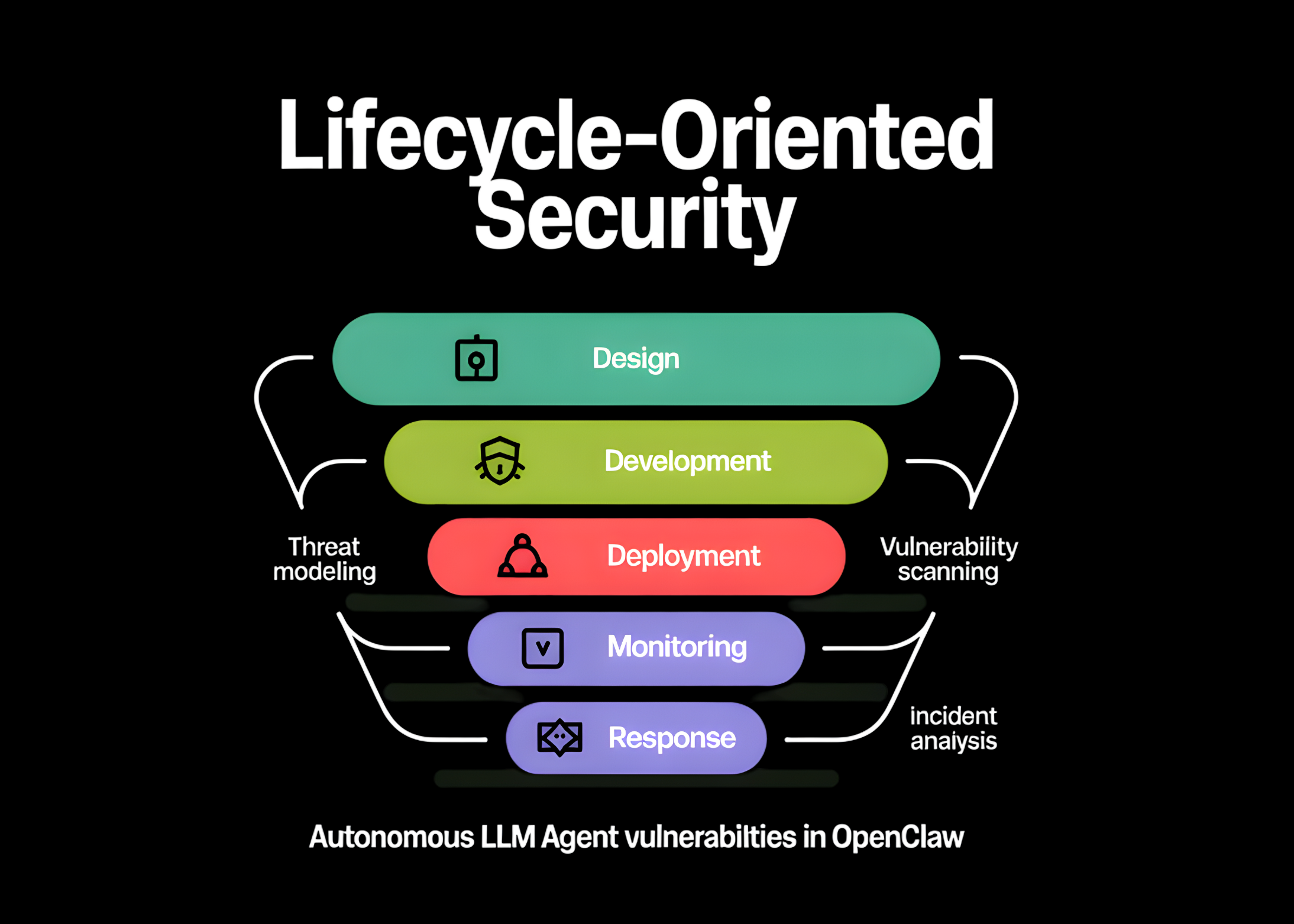

- OpenClaw's kernel-plugin design creates massive vulnerabilities at every lifecycle stage.

- Attacks like skill poisoning and memory tampering persist across sessions.

- Fixes demand strict verification and sandboxing. Without them, expect agent worms soon.
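To make "strict verification" concrete, here is a minimal sketch of one common approach: pinning a hash for each skill at review time and refusing to load anything that does not match. This is not OpenClaw's API or the researchers' proposed fix. The names `sha256_hex`, `verify_skill`, and the `pinned` allowlist are hypothetical and exist only for illustration.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of `data` as a hex string."""
    return hashlib.sha256(data).hexdigest()


def verify_skill(name: str, content: bytes, pinned: dict[str, str]) -> bool:
    """Check a skill's bytes against a digest pinned at review time.

    `pinned` is a hypothetical allowlist mapping skill name -> hex digest.
    Unknown skills are rejected rather than trusted by default.
    """
    expected = pinned.get(name)
    if expected is None:
        return False
    # Constant-time comparison of the two hex digests.
    return hmac.compare_digest(sha256_hex(content), expected)


# Usage: a reviewed skill passes; a tampered copy or unknown skill does not.
reviewed = b"def run():\n    return 'sunny'\n"
pinned = {"weather": sha256_hex(reviewed)}

ok = verify_skill("weather", reviewed, pinned)
tampered = verify_skill("weather", reviewed + b"# injected\n", pinned)
unknown = verify_skill("stocks", reviewed, pinned)
```

A check like this only covers tampering with a skill file after review. It does nothing about memory tampering or a skill that was malicious when approved, which is why the takeaway pairs verification with sandboxing.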

Originally reported by MarkTechPost