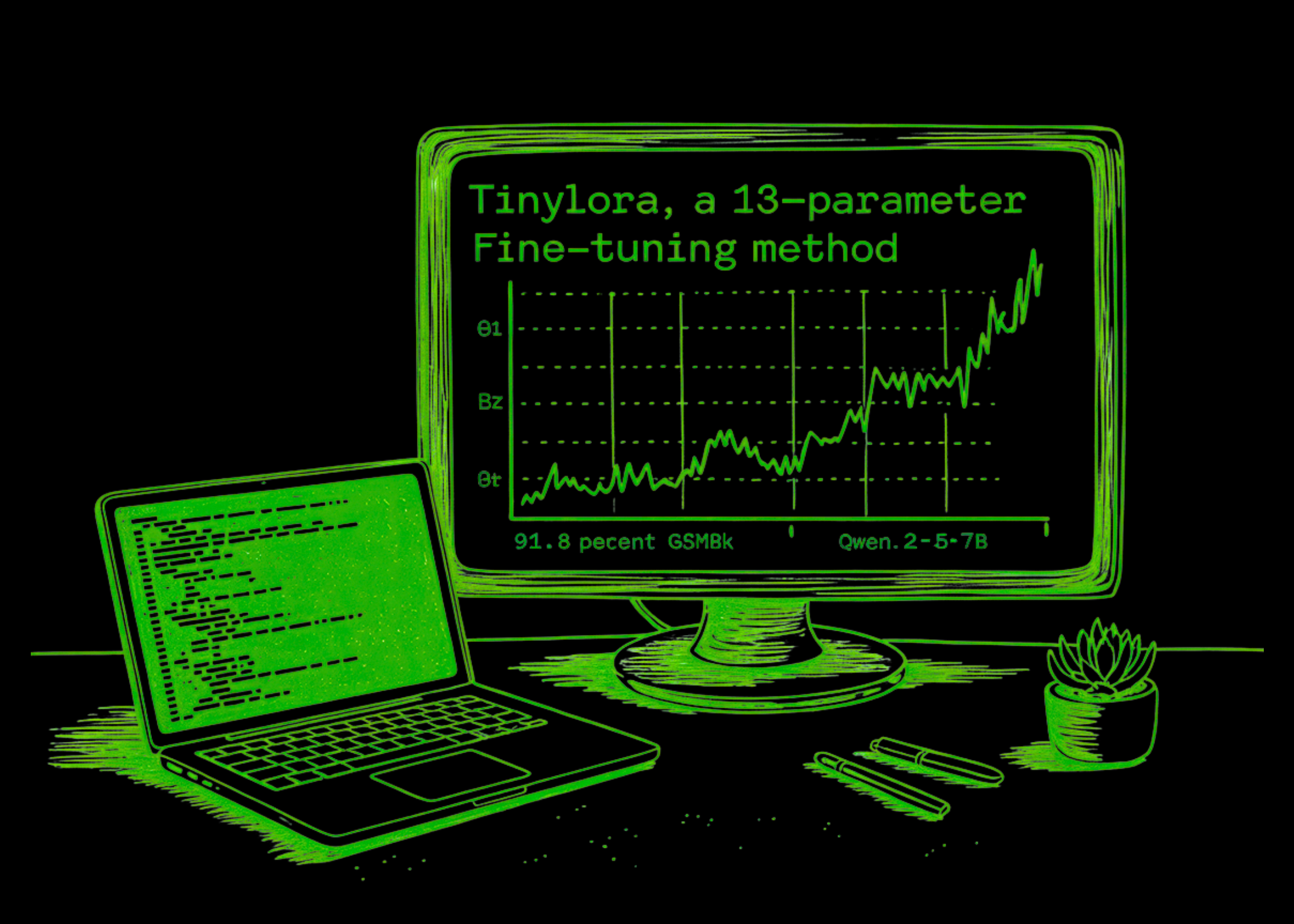

TinyLoRA Proves 13 Parameters Can Outsmart Billions on Math Tests

Forget million-parameter fine-tunes. TinyLoRA, a new method from Meta, reaches 91.8% on the GSM8K math benchmark by training just 13 parameters of a 7B model. That flips the usual efficiency script and points toward on-device adaptation.

⚡ Key Takeaways

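- TinyLoRA reaches 91.8% on GSM8K with 13 trained parameters on a 7B model, beating full fine-tuning.

- In tiny-parameter regimes, reinforcement learning (RL) beats supervised fine-tuning (SFT) by 100-1000x, thanks to denser learning signals.

- Tiled parameter sharing, fp32 precision, and rank r=2 together optimize micro-updates for edge AI.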
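
To make the last takeaway concrete, here is a minimal sketch of how a tiny shared vector could be tiled across several rank-2 updates. The takeaways above do not spell out the mechanism, so everything here is an assumption for illustration: the helper names (`tile_shared_params`, `apply_update`), the frozen random bases `U` and `V`, and the toy layer sizes are all hypothetical, and this is not presented as TinyLoRA's actual implementation.

```python
import numpy as np

def tile_shared_params(shared, n_modules, r=2):
    """Repeat (tile) one small shared vector to fill an r x r core per module.

    Hypothetical sharing scheme: every module reuses the same few values.
    """
    needed = n_modules * r * r
    reps = -(-needed // shared.size)  # ceiling division
    flat = np.tile(shared, reps)[:needed]
    return flat.reshape(n_modules, r, r)

def apply_update(W, U, V, core):
    """Return W + U @ core @ V.T, where U and V stay frozen (assumption)."""
    return W + U @ core @ V.T

rng = np.random.default_rng(0)
d_out, d_in, r, n_modules = 8, 6, 2, 5  # toy sizes, not a 7B model

# The only trainable values: 13 numbers kept in fp32, initialised to zero.
shared = np.zeros(13, dtype=np.float32)
cores = tile_shared_params(shared, n_modules, r)

W = rng.standard_normal((d_out, d_in)).astype(np.float32)
U = rng.standard_normal((d_out, r)).astype(np.float32)  # frozen basis
V = rng.standard_normal((d_in, r)).astype(np.float32)   # frozen basis
W_new = apply_update(W, U, V, cores[0])

trainable = shared.size
standard_lora = n_modules * r * (d_out + d_in)  # ordinary LoRA A and B matrices
print(f"shared-vector params: {trainable}, ordinary rank-{r} LoRA params: {standard_lora}")
```

With a zero-initialised shared vector the update is a no-op, so training starts from the unmodified model. The trainable count stays at 13 however many modules reuse the vector, whereas an ordinary rank-2 LoRA grows with every layer it touches.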


Originally reported by MarkTechPost