What If LLMs Could Think Harder on Demand? The Inference Scaling Boom After DeepSeek R1

DeepSeek R1 lit a fuse. Now, inference-time compute scaling is turning mediocre models into reasoning beasts. But is it a real breakthrough or just more compute?

⚡ Key Takeaways

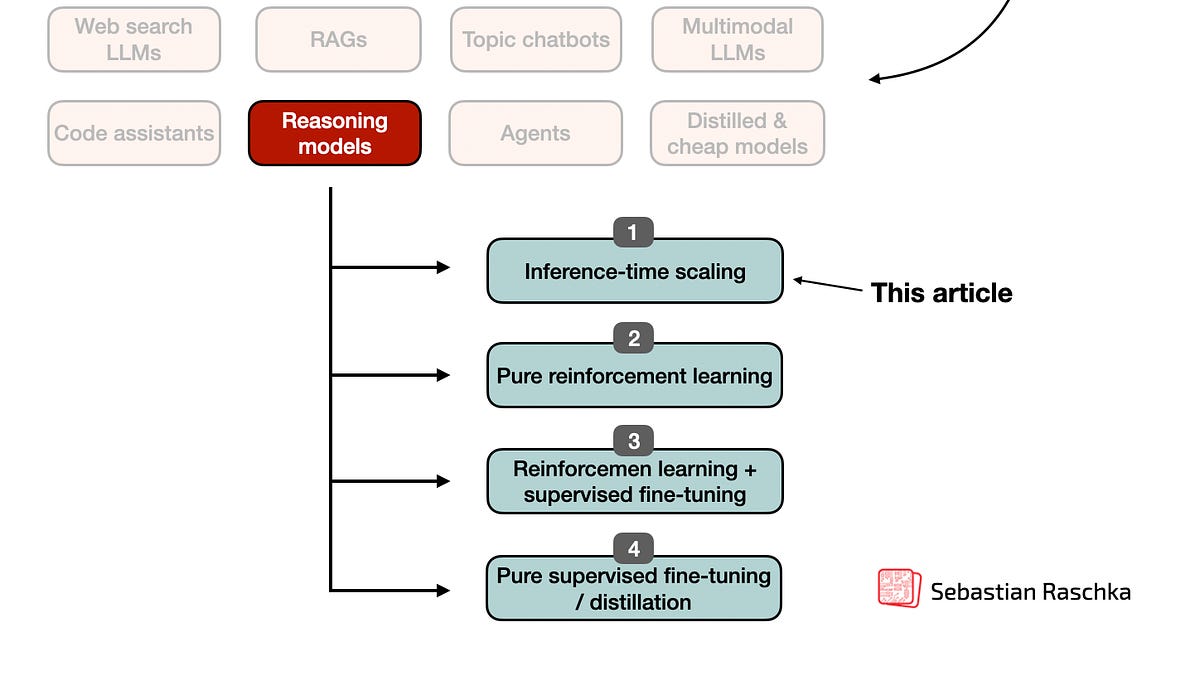

- Since DeepSeek R1, inference-time scaling has turned frozen-weight LLMs into stronger reasoners via repeated sampling, self-correction, and Monte Carlo tree search (MCTS).

- Combined with train-time methods, it trades 10-100x more inference compute for benchmark breakthroughs.

- The piece predicts a hardware shift toward inference ASICs, which would commoditize reasoning for open-source models.
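
To make the sampling idea in the takeaways concrete, here is a minimal sketch of majority voting over repeated samples (often called self-consistency), one simple form of inference-time scaling. It is an illustration under stated assumptions, not DeepSeek R1's method: `toy_model` is a hypothetical stand-in for an LLM call that returns the right answer only 40% of the time, and `majority_vote` and `accuracy` are names invented for this example.

```python
import random
from collections import Counter
from typing import Callable


def majority_vote(sample_answer: Callable[[random.Random], str],
                  n_samples: int, seed: int = 0) -> str:
    """Draw n_samples answers from a fixed model and return the most common one."""
    rng = random.Random(seed)
    answers = [sample_answer(rng) for _ in range(n_samples)]
    # Ties resolve to the answer that was sampled first.
    return Counter(answers).most_common(1)[0][0]


def toy_model(rng: random.Random) -> str:
    """Hypothetical stand-in for one stochastic LLM call (assumption, not a real API).

    Returns the correct answer "42" 40% of the time, otherwise one of
    four wrong answers chosen uniformly.
    """
    if rng.random() < 0.4:
        return "42"
    return rng.choice(["40", "41", "43", "44"])


def accuracy(n_samples: int, trials: int = 1000) -> float:
    """Fraction of independent trials in which the voted answer is correct."""
    hits = sum(majority_vote(toy_model, n_samples, seed=t) == "42"
               for t in range(trials))
    return hits / trials


if __name__ == "__main__":
    # More samples per question means more inference compute.
    for n in (1, 5, 25):
        print(f"samples={n:2d}  accuracy={accuracy(n):.2f}")
```

The model's weights never change here; only the number of samples per question grows. In this toy setup the wrong answers are spread thin while the right one repeats, so voting over more samples recovers it more often. That is the basic compute-for-accuracy trade, and it only helps when the correct answer is the single most likely output.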


Originally reported by Ahead of AI