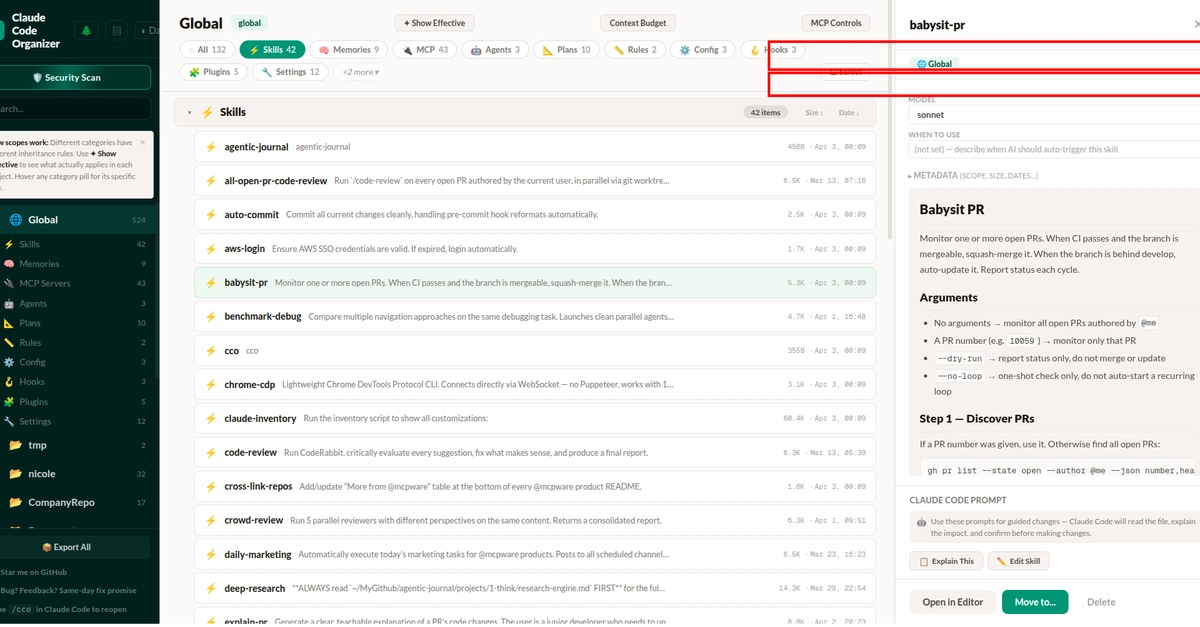

Small Language Models vs Large Language Models: When Smaller Is Better

Small language models are challenging the bigger-is-better paradigm. Discover when compact AI models deliver superior results at a fraction of the cost.

⚡ Key Takeaways

- Cost and speed favor small models: Small language models cost 50-100x less per inference and deliver significantly lower latency, making them ideal for high-volume and real-time applications.

- Specialization closes the performance gap: Task-specific small models, enhanced by fine-tuning and knowledge distillation, achieve 90-99% of large model performance on focused applications.

- Right-sizing is the emerging best practice: Leading organizations use tiered architectures that route simple requests to small models and complex ones to large models, optimizing both cost and capability.
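To make the tiered idea concrete, here is a minimal sketch of a request router in Python. Everything in it is an illustrative assumption rather than a description of any particular organization's system: the `Tier` class, the `estimate_complexity` heuristic, the keyword list, and the `0.4` threshold are all hypothetical, and the handlers are stand-ins for real model calls. Production routers often use a trained classifier or the small model's own confidence instead of a keyword heuristic.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Tier:
    """A model tier; `handler` is a placeholder for a real model call."""
    name: str
    handler: Callable[[str], str]


def estimate_complexity(prompt: str) -> float:
    """Toy heuristic (assumption): score a prompt from 0.0 to 1.0.

    Longer prompts and prompts containing reasoning-style keywords
    (matched as lowercase substrings) score higher.
    """
    keywords = ("why", "explain", "compare", "prove", "step by step")
    length_score = min(len(prompt.split()) / 200, 1.0)
    keyword_score = 0.5 if any(k in prompt.lower() for k in keywords) else 0.0
    return min(length_score + keyword_score, 1.0)


def route(prompt: str, small: Tier, large: Tier, threshold: float = 0.4) -> Tier:
    """Send low-complexity prompts to the small tier, the rest to the large tier."""
    return small if estimate_complexity(prompt) < threshold else large


# Stand-in handlers; a real system would call model endpoints here.
small = Tier("small", lambda p: f"[small model] {p}")
large = Tier("large", lambda p: f"[large model] {p}")

for prompt in ("Reset my password", "Explain why the two contracts differ"):
    tier = route(prompt, small, large)
    print(tier.name, "->", tier.handler(prompt))
```

The threshold is the main tuning knob in this sketch: lowering it sends more traffic to the large model (higher cost, more capability), while raising it keeps more requests on the small model.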
