Reinforcement Learning from Human Feedback: How RLHF Shapes AI Behavior

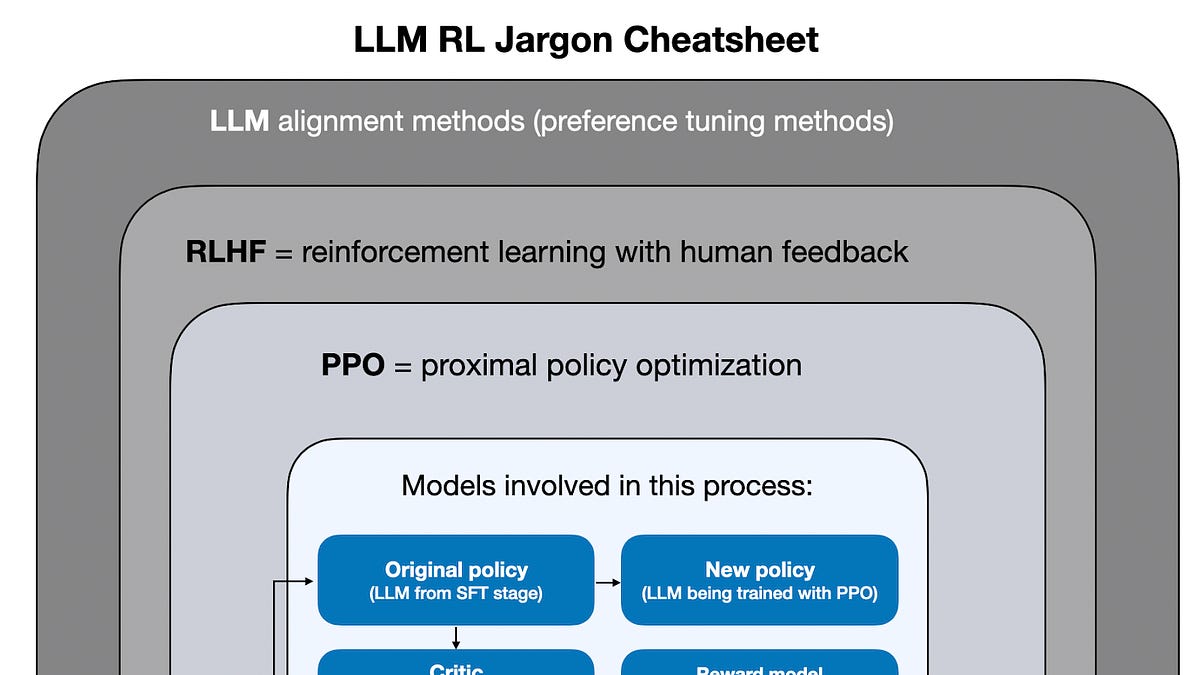

RLHF is the technique that transformed raw language models into useful AI assistants. Here is how it works and why it matters for AI alignment.

⚡ Key Takeaways

- {'point': 'RLHF bridges capability and alignment', 'detail': 'Base language models are capable but unaligned; RLHF trains them to optimize for human preferences rather than mere statistical text prediction.'} 𝕏

- {'point': 'Three phases build on each other', 'detail': 'Supervised fine-tuning establishes baseline behavior, a reward model learns human preferences, and reinforcement learning optimizes the model against those preferences.'} 𝕏

- {'point': 'Alternatives are emerging', 'detail': "Direct Preference Optimization and Constitutional AI address RLHF's limitations around reward hacking, scalability, and annotator bias with simpler or more principled approaches."} 𝕏

Worth sharing?

Get the best AI stories of the week in your inbox — no noise, no spam.