RAG vs Fine-Tuning: Choosing the Right Approach for Your LLM Application

A practical comparison of retrieval-augmented generation (RAG) and fine-tuning, the two dominant strategies for customizing large language models for specific use cases.

⚡ Key Takeaways

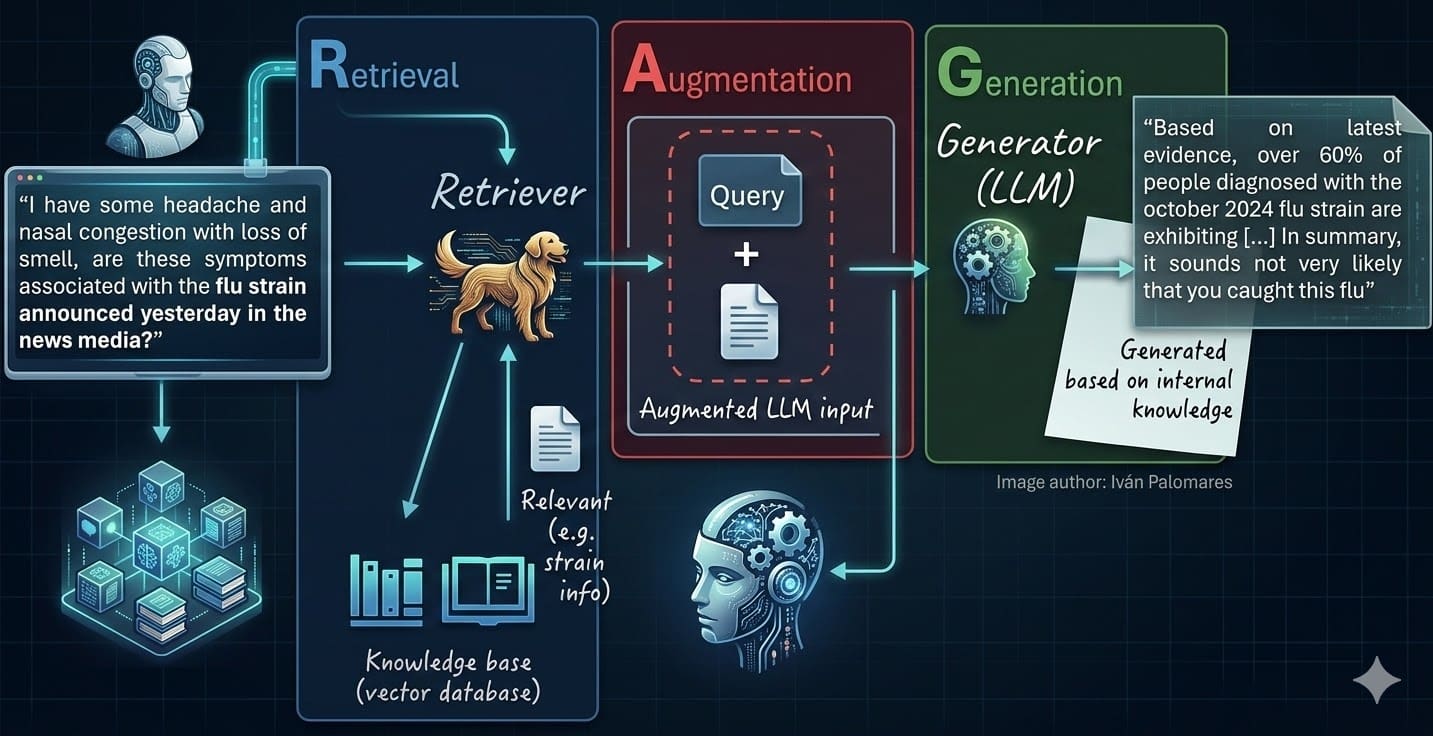

- RAG excels at fresh, verifiable knowledge: Retrieval-augmented generation keeps responses current by pulling from updatable document stores and provides natural source attribution for verifiability.

- Fine-tuning excels at behavioral modification: When you need consistent output formats, specialized reasoning, or domain-specific workflows, fine-tuning encodes these patterns directly into model weights.

- Hybrid approaches are often optimal: Production systems frequently combine fine-tuning for behavioral alignment with RAG for factual knowledge, getting the best of both approaches.
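To make the first takeaway concrete, here is a minimal sketch of the retrieval side of RAG. It is an illustration under stated assumptions, not a production design: the `Document` class, the file names, and the word-overlap scoring are all hypothetical, and the scoring is a toy stand-in for the embedding search a real system would likely use.

```python
from dataclasses import dataclass


@dataclass
class Document:
    source: str  # hypothetical identifier, used only for attribution
    text: str


def tokenize(text: str) -> set[str]:
    # Lowercase words with surrounding punctuation stripped.
    return {word.strip(".,:;!?").lower() for word in text.split()} - {""}


def retrieve(query: str, store: list[Document], k: int = 2) -> list[Document]:
    # Toy ranking by word overlap (assumption: stands in for vector search).
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(doc.text)), doc) for doc in store]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in ranked[:k] if score > 0]


def build_prompt(query: str, store: list[Document]) -> str:
    # Each retrieved passage is tagged with its source so the answer can cite it.
    context = "\n".join(f"[{doc.source}] {doc.text}" for doc in retrieve(query, store))
    return (
        "Answer using only the sources below and cite them.\n"
        f"{context}\n\nQuestion: {query}"
    )


store = [
    Document("pricing.md", "The Pro plan costs 20 dollars per month."),
    Document("faq.md", "Refunds are available within 30 days."),
]
# Updating knowledge is a data change, not a training run.
store.append(Document("changelog.md", "The Pro plan now costs 25 dollars per month."))

prompt = build_prompt("How much does the Pro plan cost?", store)
print(prompt)
```

In a hybrid setup, the resulting `prompt` would be sent to a fine-tuned model: the retrieved, source-tagged passages carry the facts, while the fine-tuned weights carry the output format and domain behavior. The model call is left out here because it depends on the provider.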
