DPO or GRPO? Escaping SFT's Repetitive Output Trap in LLM Fine-Tuning

Your SFT-tuned model looks perfect on paper: the loss has converged and the output format is spot-on. Then it reaches production and starts churning out robotic, repetitive responses. That is the point to reach for DPO or GRPO.

⚡ Key Takeaways

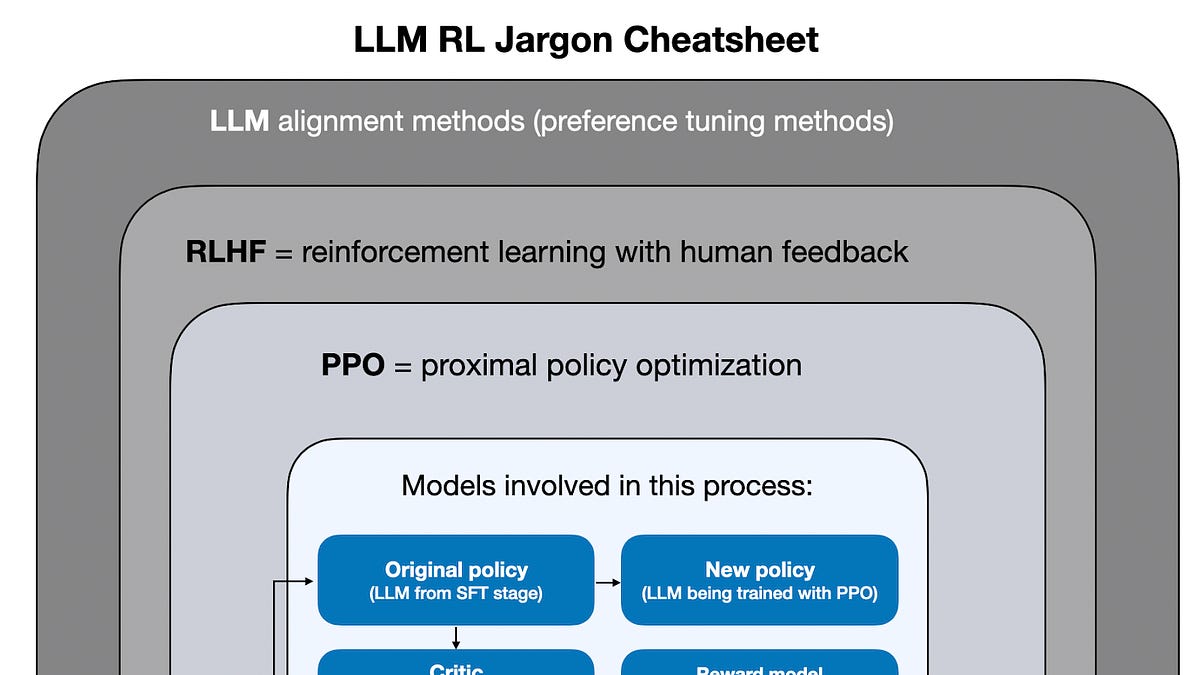

- SFT hits a ceiling: outputs turn repetitive and the model handles ambiguous inputs poorly, so post-SFT alignment with DPO or GRPO is essential.

- DPO excels in simplicity for binary preferences; GRPO shines on group rankings but costs more compute.

- The decision hinges on task complexity: prototype with DPO first, then switch to GRPO if ambiguity failures exceed 20% of evaluated cases.
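
To make the takeaways concrete, here is a minimal sketch of the two objectives' core arithmetic and of the 20% decision rule. It assumes the standard DPO pairwise loss and the usual group-normalized advantage used in GRPO; the function names, the `beta` default, and the way ambiguity failures are counted are all illustrative assumptions, not details from the original article.

```python
import math
from statistics import mean, pstdev


def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """Standard DPO loss for one (chosen, rejected) pair of sequence log-probs.

    beta=0.1 is an assumed default, not a value from the article.
    """
    margin = beta * ((policy_chosen_logp - ref_chosen_logp)
                     - (policy_rejected_logp - ref_rejected_logp))
    # -log(sigmoid(margin)), written in a numerically stable form.
    if margin > 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


def group_relative_advantages(rewards, eps=1e-8):
    """GRPO-style advantages: each reward normalized within its sampled group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


def suggest_method(ambiguity_failures, total_evals, threshold=0.20):
    """Hypothetical helper encoding the takeaway: start with DPO, move to
    GRPO once the ambiguity failure rate exceeds the threshold."""
    if total_evals <= 0:
        raise ValueError("total_evals must be positive")
    return "GRPO" if ambiguity_failures / total_evals > threshold else "DPO"


if __name__ == "__main__":
    # One preference pair: the policy already favors the chosen answer slightly.
    print(dpo_loss(-12.0, -15.0, -13.0, -14.0))
    # One group of four sampled completions scored by some reward function.
    print(group_relative_advantages([0.2, 0.9, 0.4, 0.5]))
    # 27 ambiguity failures out of 100 evaluated prompts.
    print(suggest_method(27, 100))
```

The contrast in the sketch mirrors the cost trade-off above: DPO needs only one chosen/rejected pair per prompt, while the group-relative advantage requires sampling and scoring several completions per prompt.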

Originally reported by Towards AI