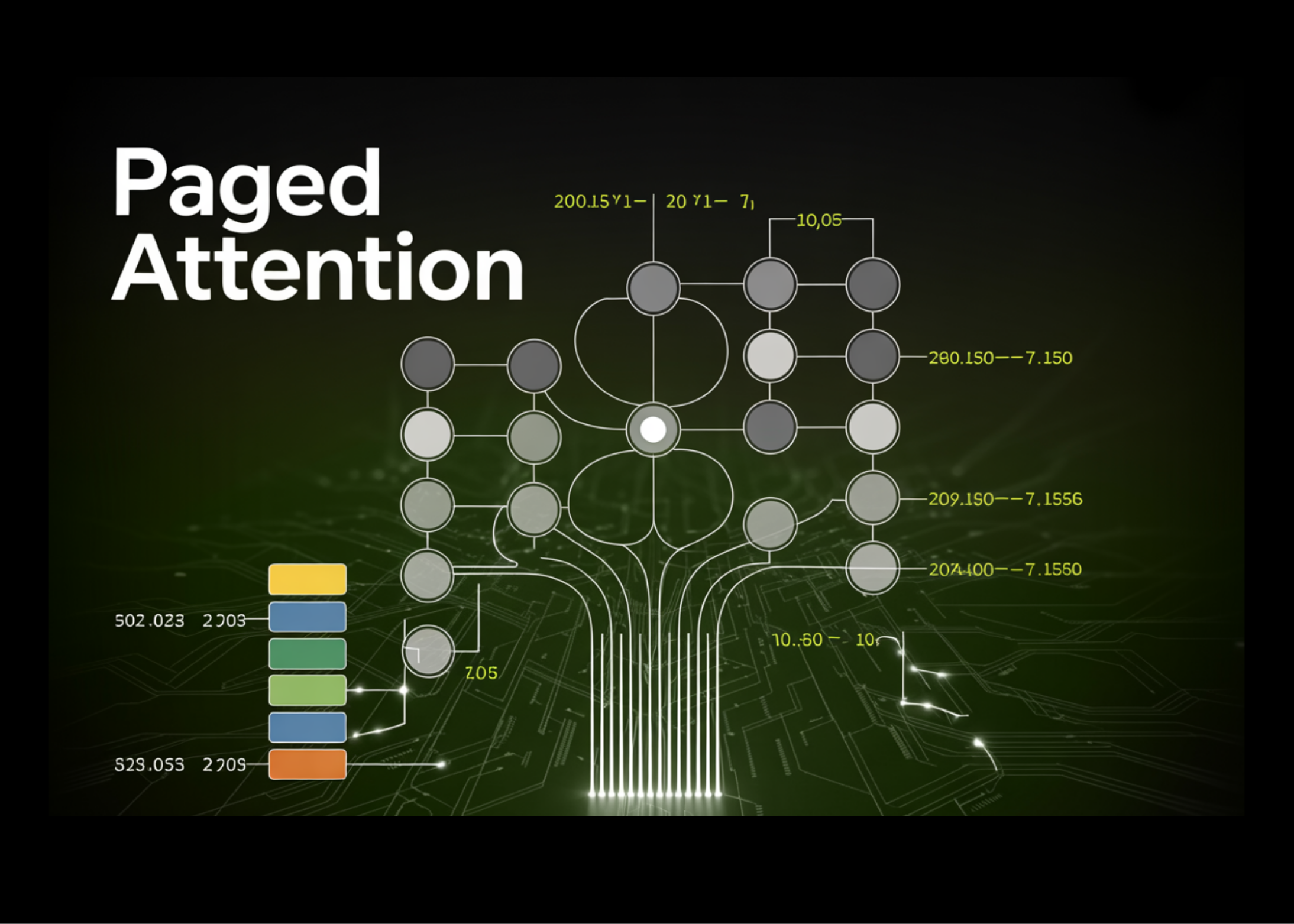

75GB Wasted on 100 Users: Paged Attention's Brutal Fix for LLM Memory Hogging

100 concurrent chatbot requests. 75 gigabytes of GPU memory: gone, wasted. Paged Attention torches that nonsense.

⚡ Key Takeaways

- A naive KV cache wastes 75GB across 100 concurrent users, leaving memory utilization at just 24%.

- Paged Attention borrows OS-style paging, storing the KV cache in fixed-size blocks allocated on demand, for 90%+ memory efficiency.

- Copy-on-write sharing compounds the savings when batched requests share a common prompt.
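
To make the paging and copy-on-write ideas concrete, here is a minimal bookkeeping sketch. It is not the API of any real serving engine: `BlockAllocator`, `Sequence`, and the `BLOCK_SIZE` of 16 tokens are hypothetical names and values chosen for illustration, and the sketch tracks only block IDs and reference counts, not actual key/value tensors.

```python
BLOCK_SIZE = 16  # tokens per KV block (assumed value, for illustration only)


class BlockAllocator:
    """Hands out fixed-size physical blocks and tracks reference counts."""

    def __init__(self, num_blocks):
        self.free = list(range(num_blocks))
        self.refcount = {}  # physical block id -> number of sequences using it

    def alloc(self):
        if not self.free:
            raise MemoryError("out of KV blocks")
        block = self.free.pop()
        self.refcount[block] = 1
        return block

    def share(self, block):
        # Another sequence points at the same physical block.
        self.refcount[block] += 1
        return block

    def release(self, block):
        self.refcount[block] -= 1
        if self.refcount[block] == 0:
            del self.refcount[block]
            self.free.append(block)


class Sequence:
    """One request; its block table maps logical blocks to physical ones."""

    def __init__(self, allocator):
        self.allocator = allocator
        self.block_table = []
        self.num_tokens = 0

    def append_token(self):
        if self.num_tokens % BLOCK_SIZE == 0:
            # No block yet, or the last one is full: allocate on demand.
            self.block_table.append(self.allocator.alloc())
        else:
            last = self.block_table[-1]
            if self.allocator.refcount[last] > 1:
                # Copy-on-write: the last block is shared, so take a private
                # block before writing. A real system would also copy the
                # existing KV data into the new block.
                self.allocator.release(last)
                self.block_table[-1] = self.allocator.alloc()
        self.num_tokens += 1

    def fork(self):
        # A new sequence that shares every existing block with its parent.
        child = Sequence(self.allocator)
        child.block_table = [self.allocator.share(b) for b in self.block_table]
        child.num_tokens = self.num_tokens
        return child


allocator = BlockAllocator(num_blocks=64)
parent = Sequence(allocator)
for _ in range(40):          # a 40-token shared prompt
    parent.append_token()
child = parent.fork()        # second request reuses the prompt's blocks
child.append_token()         # first divergent token triggers copy-on-write
```

In this sketch a sequence never holds more than one partially filled block, so the unused slots per request stay below `BLOCK_SIZE`, and forking a request costs no new blocks until the two sequences diverge.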


Originally reported by MarkTechPost