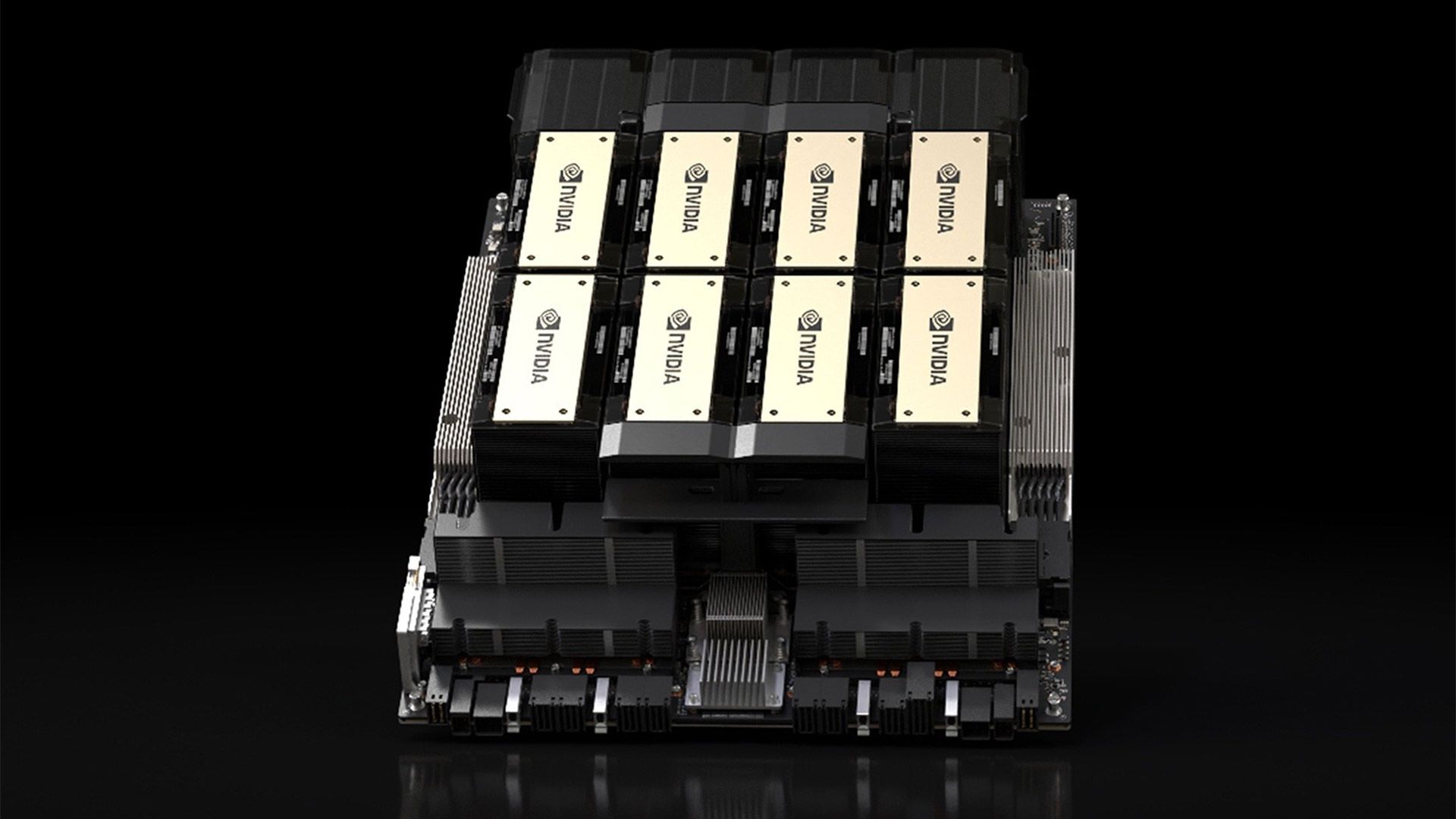

NVIDIA H100 vs A100: Choosing the Right GPU for AI Workloads

A detailed comparison of NVIDIA's H100 and A100 GPUs, covering performance benchmarks, architectural differences, memory specifications, and cost considerations for AI workloads.

⚡ Key Takeaways

- H100 delivers 2.5-3x training speedups over A100: For large transformer model training, the H100's Transformer Engine, FP8 support, and higher memory bandwidth translate to substantial real-world throughput improvements.

- Memory bandwidth is often the decisive factor: The H100's 3.35 TB/s HBM3 bandwidth versus the A100's 2 TB/s directly impacts LLM inference speed, where text generation is typically memory-bandwidth-bound.

- Cost-per-compute favors H100 for large workloads: Despite higher per-unit costs, the H100 often delivers lower total training costs for large models due to faster completion times, though A100 remains competitive for smaller workloads.
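
The bandwidth and cost takeaways above reduce to simple arithmetic, sketched roughly below. This is a back-of-envelope model, not a benchmark. It assumes single-stream decoding reads every weight once per generated token and ignores KV-cache traffic, batching, and kernel overheads. The 7B-parameter FP16 model, the 2.0x hourly price ratio, and the function names are hypothetical illustrations; only the bandwidth figures and the 2.5x speedup come from the text.

```python
# Peak memory bandwidth figures quoted in the takeaways, in bytes per second.
H100_BANDWIDTH = 3.35e12
A100_BANDWIDTH = 2.0e12


def decode_tokens_per_sec_bound(bandwidth, n_params, bytes_per_param):
    """Upper bound on decode rate when each token requires reading all weights once."""
    model_bytes = n_params * bytes_per_param
    return bandwidth / model_bytes


def relative_job_cost(price_ratio, speedup):
    """H100 job cost divided by A100 job cost for the same training job.

    price_ratio: H100 hourly price / A100 hourly price (hypothetical input).
    speedup:     A100 wall-clock time / H100 wall-clock time.
    A result below 1.0 means the H100 run is cheaper overall.
    """
    return price_ratio / speedup


# Hypothetical workload: 7B parameters stored in FP16 (2 bytes each).
N_PARAMS = 7e9
BYTES_PER_PARAM = 2

h100_bound = decode_tokens_per_sec_bound(H100_BANDWIDTH, N_PARAMS, BYTES_PER_PARAM)
a100_bound = decode_tokens_per_sec_bound(A100_BANDWIDTH, N_PARAMS, BYTES_PER_PARAM)
print(f"H100 decode bound: {h100_bound:.0f} tokens/s")
print(f"A100 decode bound: {a100_bound:.0f} tokens/s")
print(f"Bandwidth-bound ratio: {h100_bound / a100_bound:.3f}x")

# Hypothetical 2.0x price ratio with the low end of the quoted 2.5-3x speedup.
print(f"Relative H100 job cost: {relative_job_cost(2.0, 2.5):.2f}")
```

Under these assumptions the decode ratio is just the bandwidth ratio, independent of model size, and the H100 comes out cheaper per job whenever its hourly price premium is smaller than its speedup.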
