Multimodal AI Explained: Models That See, Hear, Read, and Understand

An exploration of multimodal AI systems that process and generate across text, images, audio, and video, examining architectures, capabilities, and applications reshaping AI.

⚡ Key Takeaways

- Multimodal models bridge the gap between AI modalities: modern systems process text, images, audio, and video through shared representations, enabling cross-modal reasoning that connects visual, linguistic, and auditory understanding.

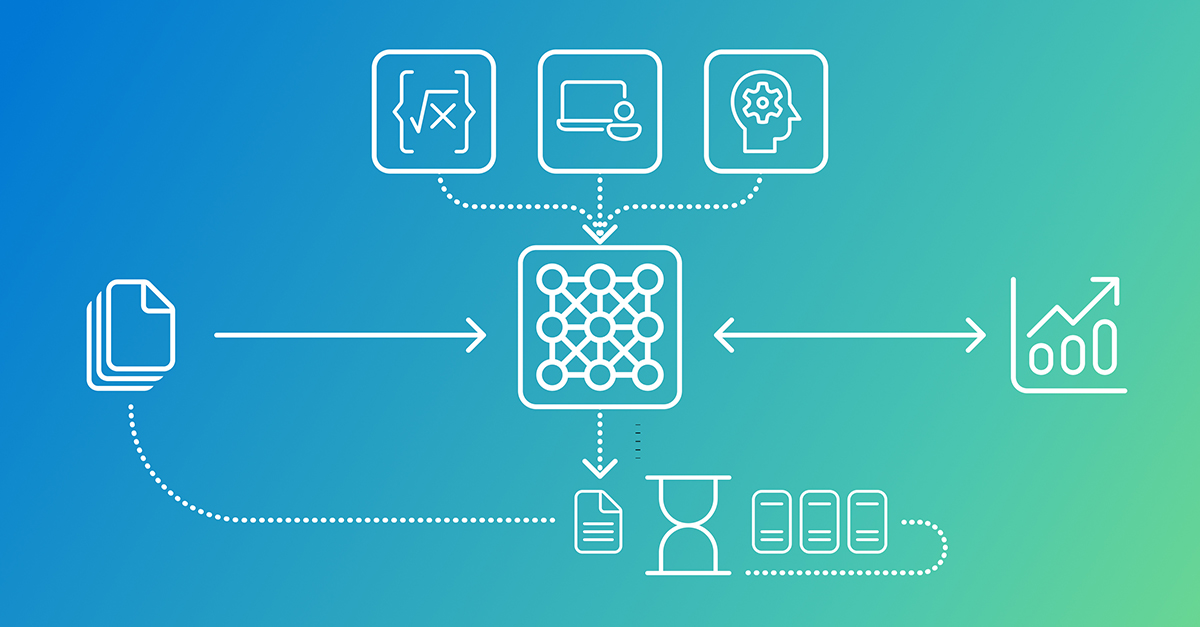

- Two main architectures drive multimodal AI: encoder fusion uses separate encoders whose outputs are projected into a shared space, while unified token architectures process all modalities through a single transformer backbone.

- Cross-modal hallucination is a key unsolved challenge: models can describe objects not present in images or misread visual content, and detecting these cross-modal errors is harder than identifying text-only hallucinations.
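
To make the two architectures in the takeaways more concrete, here is a minimal, illustrative sketch in plain Python. It is not taken from any particular model: the dimensions, the random "encoder outputs", and the helper names (`make_projection`, `project`, `cosine`) are all assumptions chosen for the example, and the projection weights are untrained, so the similarity score it produces carries no meaning. It only shows the shape of the data flow.

```python
import math
import random

# Hypothetical sizes, chosen only for illustration.
IMAGE_DIM, TEXT_DIM, SHARED_DIM = 16, 12, 8

def make_projection(in_dim, out_dim, seed):
    """Random (untrained) linear map standing in for a learned projection."""
    rng = random.Random(seed)
    scale = 1.0 / math.sqrt(in_dim)
    return [[rng.gauss(0, scale) for _ in range(in_dim)] for _ in range(out_dim)]

def project(matrix, vec):
    """Matrix-vector product: maps a feature vector into the shared space."""
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]

def cosine(a, b):
    """Cosine similarity between two vectors of equal length."""
    norm_a = math.sqrt(sum(x * x for x in a)) or 1.0
    norm_b = math.sqrt(sum(x * x for x in b)) or 1.0
    return sum(x * y for x, y in zip(a, b)) / (norm_a * norm_b)

rng = random.Random(42)
image_proj = make_projection(IMAGE_DIM, SHARED_DIM, seed=0)
text_proj = make_projection(TEXT_DIM, SHARED_DIM, seed=1)

# --- Encoder fusion: separate encoders, then projection into a shared space ---
# These random vectors stand in for the outputs of real image/text encoders.
image_features = [rng.gauss(0, 1) for _ in range(IMAGE_DIM)]
text_features = [rng.gauss(0, 1) for _ in range(TEXT_DIM)]

image_embedding = project(image_proj, image_features)
text_embedding = project(text_proj, text_features)
similarity = cosine(image_embedding, text_embedding)  # comparable only after projection

# --- Unified tokens: every modality becomes tokens of the same width ---
# Four fake image patches and three fake text tokens are mapped to SHARED_DIM
# and concatenated into one sequence that a single transformer would consume.
image_patches = [[rng.gauss(0, 1) for _ in range(IMAGE_DIM)] for _ in range(4)]
text_tokens = [[rng.gauss(0, 1) for _ in range(TEXT_DIM)] for _ in range(3)]
unified_sequence = (
    [project(image_proj, p) for p in image_patches]
    + [project(text_proj, t) for t in text_tokens]
)

print(len(image_embedding), len(text_embedding), len(unified_sequence))
```

In the fusion path, the two modalities only meet after each has been encoded and projected. In the unified path, they share one sequence from the start, so a single backbone can attend across image and text tokens at every layer.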
