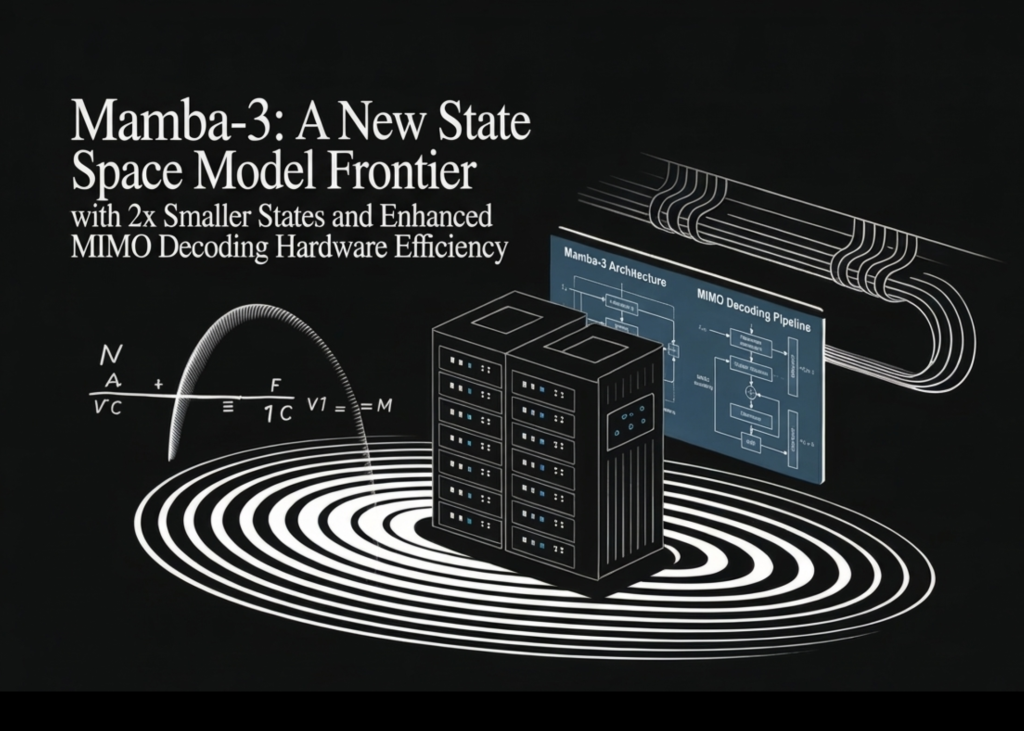

Mamba-3 Halves State Size, Doubles Decode Speed – Transformers' Nightmare or Just Another Gimmick?

Half the memory, twice the decode speed – Mamba-3 sounds like the SSM breakthrough we've waited for. But after 20 years of watching Silicon Valley hype cycles, I'm not holding my breath.

⚡ Key Takeaways

- Mamba-3 halves the state size while matching Mamba-2's perplexity, improving efficiency.

- A MIMO (multi-input, multi-output) formulation targets the SSM decoding bottleneck, running 4x the FLOPs at the same decode latency.

- Complex-valued states, implemented via a RoPE-style rotation trick, handle state-tracking tasks like parity that stumped prior SSMs.
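
Why would complex states help with parity? Here is a toy sketch, not Mamba-3's actual parameterization. Multiplying a complex state by e^{iθ} is the same as rotating a pair of real channels by θ, which is the rotary (RoPE) idea. If each input bit picks an angle of π (bit 1) or 0 (bit 0), the state flips sign once per 1-bit, so its sign encodes parity. The function names, the angle choice and the sign readout are all hypothetical choices made for illustration. The contrast function stands in for a real state whose per-step multiplier is always positive, loosely in the spirit of Mamba-2's decay. Its sign can never change, so this sign-based readout cannot tell even from odd.

```python
import math


def rotate(pair, theta):
    """Rotate a 2-D real state by angle theta (RoPE-style pair rotation).

    Equivalent to multiplying the complex number x + iy by e^{i*theta}.
    """
    x, y = pair
    c, s = math.cos(theta), math.sin(theta)
    return (c * x - s * y, s * x + c * y)


def parity_via_rotation(bits):
    """Hypothetical toy: track parity with a data-dependent rotation.

    Each bit selects an angle of pi (bit=1) or 0 (bit=0), so the state
    flips sign once per 1-bit. Not Mamba-3's real parameterization.
    """
    state = (1.0, 0.0)  # complex value 1, i.e. angle 0
    for b in bits:
        theta = math.pi * b  # input-dependent rotation angle
        state = rotate(state, theta)
    # Real part is about +1 after an even number of flips, about -1 after odd.
    return 0 if state[0] > 0 else 1


def parity_via_positive_decay(bits, decay=0.9):
    """Hypothetical contrast: a real state with a positive per-step multiplier.

    The state can shrink but never change sign, so the same sign readout
    always answers 0 regardless of the input.
    """
    h = 1.0
    for b in bits:
        a = decay if b else 1.0  # positive multiplier, loosely Mamba-2-like
        h = a * h
    return 0 if h > 0 else 1
```

For example, `parity_via_rotation([1, 0, 1, 1])` returns 1 (three 1-bits, odd parity). `parity_via_positive_decay([1, 0, 1, 1])` returns 0, because its state only shrinks toward zero and never flips sign.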

Originally reported by MarkTechPost