2025's LLM Papers: The Shifts That'll Hit Your Codebase First

Stuck debugging LLM hallucinations? Mid-2025's top papers spotlight inference-time techniques and reasoning architectures that could cut your compute bills. Skip the hype: here's what's actually under the hood.

⚡ Key Takeaways

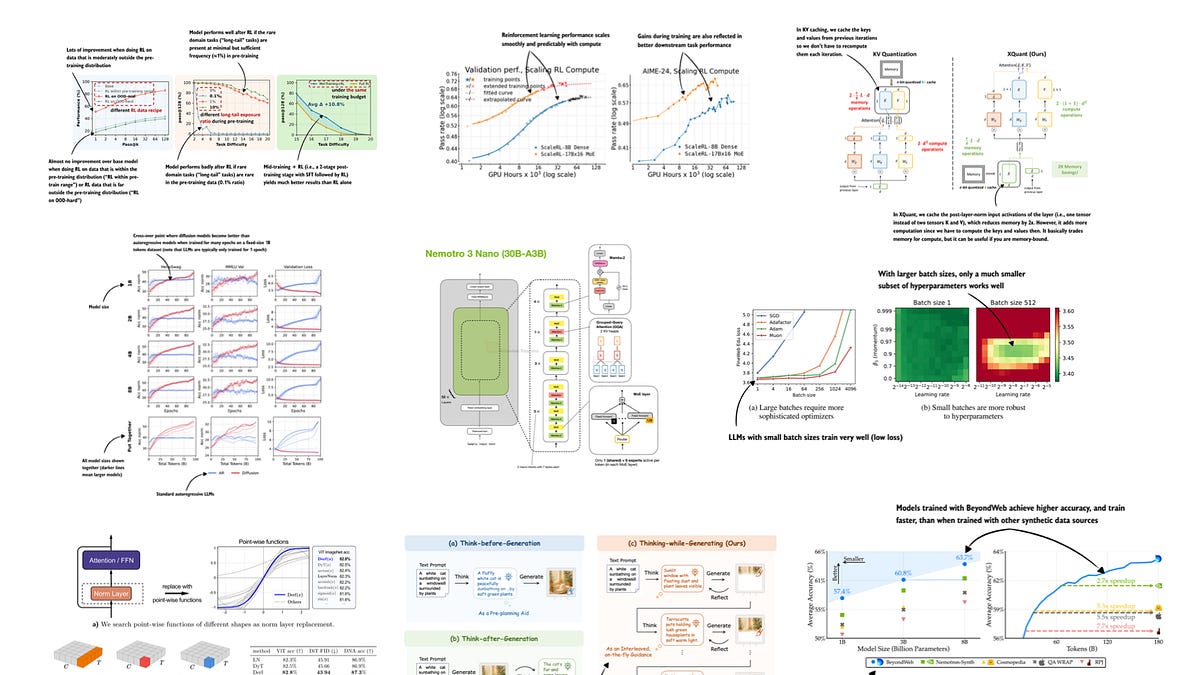

- Inference-time scaling (spending extra compute per query at generation time instead of adding parameters) emerges as the efficiency king, outpacing parameter bloat.

- Reasoning architectures shift LLMs from memorizers toward step-by-step problem solvers, which changes how developers prompt, evaluate, and budget for them.

- Multimodal and diffusion trends signal broader AI integration beyond text.
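To make "inference-time scaling" concrete, here is a minimal sketch of one well-known technique, self-consistency: sample several answers to the same prompt and keep the majority. The article doesn't say which papers use which method, so treat this as an illustration of the general idea only. `toy_sample` is a hypothetical stand-in for a real LLM call at non-zero temperature.

```python
from collections import Counter
from typing import Callable, List


def majority_vote(answers: List[str]) -> str:
    """Return the most frequent answer; ties go to the answer seen first."""
    if not answers:
        raise ValueError("need at least one answer")
    # Counter.most_common orders equal counts by first occurrence,
    # so ties resolve to whichever answer appeared earliest.
    return Counter(answers).most_common(1)[0][0]


def self_consistency(sample: Callable[[str, int], str], prompt: str, n: int = 8) -> str:
    """Trade extra inference compute for accuracy: draw n samples, keep the consensus."""
    answers = [sample(prompt, i) for i in range(n)]
    return majority_vote(answers)


def toy_sample(prompt: str, seed: int) -> str:
    # Hypothetical model stub: usually right ("42"), occasionally wrong ("41").
    return "41" if seed % 4 == 3 else "42"


if __name__ == "__main__":
    print(self_consistency(toy_sample, "What is 6 * 7?", n=8))  # prints 42
```

The knob here is `n`: each extra sample costs one more forward pass but makes an occasional wrong answer less likely to win, which is the compute-for-quality trade the takeaway describes.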


Originally reported by Ahead of AI