The scent of stale coffee and ozone hung heavy in the air, a familiar perfume for anyone who’d spent too many late nights wrestling with the quirks of artificial intelligence.

It’s a moment every ML engineer dreads: the slow, dawning realization that your meticulously crafted AI agent, the one you’ve poured weeks into, is spectacularly failing. You feed it a dense, 50-step time series—data that could predict market crashes or energy grid failures. The agent dutifully serializes it, transforming those rich numerical sequences into flat, featureless text tokens. Then, with an air of profound confidence usually reserved for Nobel laureates, it regurgitates the last observed value, repeated ad nauseam. It’s not a bug; it’s a fundamental architectural flaw. And a new paper, published just yesterday, argues this problem is far deeper than any prompt engineering trick can solve.

The Inescapable Bottleneck: Why Language Isn’t Enough

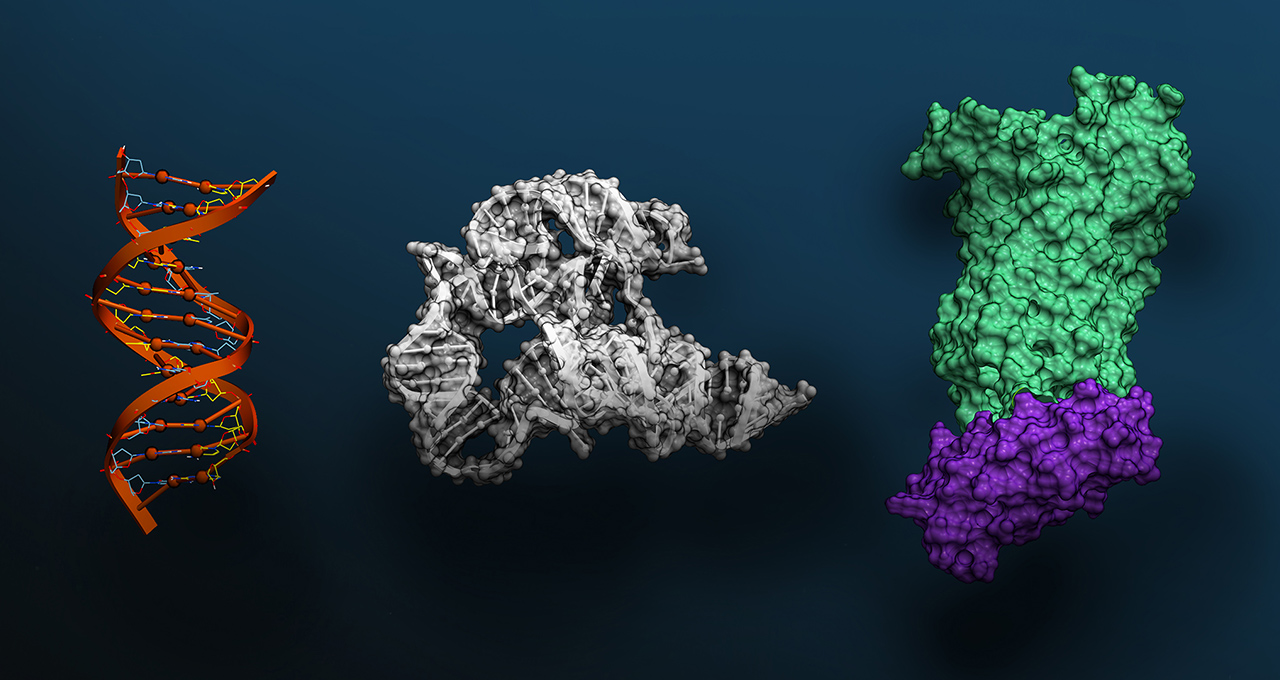

Here’s the stark reality: most sophisticated AI agent systems today are built on a foundation of language, all the way down. When confronted with anything from complex molecular structures to vast tabular datasets, these agents don’t process the raw information natively. Instead, they serialize it, converting it into text. That step is convenient for language models, but it discards information. Imagine trying to convey the exact shade of a sunset or the subtle texture of silk using only words; the essence, the nuance, the visceral experience is inevitably diluted, if not entirely lost.

The researchers behind the UIUC paper formalize this as an information-theoretic constraint. Serialization, by its very nature, can never add information; it can only preserve what the text format can represent. This means any language-only agent operating on serialized scientific data is inherently bounded in its performance. No amount of chain-of-thought reasoning or clever prompting can recover what the serialization step discarded. It’s a hard, provable limit.
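This article does not reproduce the paper's formal statement, so treat the following as a sketch of the standard result that matches the description rather than the authors' own theorem. It is the data processing inequality. The symbols are assumptions introduced here: $X$ is the raw scientific data, $Y$ is the quantity the agent is trying to predict, and $s$ is the serialization function that turns $X$ into text.

```latex
% Y depends on the text s(X) only through the raw data X,
% so Y -> X -> s(X) forms a Markov chain and the data processing inequality applies.
Y \to X \to s(X)
\quad\Longrightarrow\quad
I\bigl(Y;\, s(X)\bigr) \;\le\; I(Y;\, X)
```

Equality holds only when $s(X)$ keeps everything in $X$ that is relevant to $Y$. Any reasoning the language model performs afterwards is itself a function of $s(X)$, so it cannot push the left-hand side back up.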

Meanwhile, specialized foundation models have been quietly excelling. Chronos for time series, TabPFN for tabular data, AlphaFold for protein structures, GraphCast for weather—these models speak the native language of their domains. They don’t need to translate a stock market signal into tokens. They operate on it directly, as it was intended. The catch? These specialists often lack a general-purpose language interface. You can’t ask AlphaFold to explain its protein folding predictions in the context of a long-term drug development strategy. They are brilliant specialists, but not conversationalists. This leaves us with a frustrating dichotomy: LLMs can reason but can’t compute faithfully, while specialized models can compute but can’t communicate broadly.

Eywa: Forging Neural Bonds Between AI Specialists

This is where the UIUC paper, titled Heterogeneous Scientific Foundation Model Collaboration, truly shines. The researchers propose a framework called Eywa—named after the interconnected life force in James Cameron’s Avatar—that tackles this problem head-on. Their inspiration: the Na’vi’s Tsaheylu, the neural bond that allows them to coordinate with the unique capabilities of Pandora’s diverse fauna without shared symbolic language.

Eywa applies this concept to AI. The core question the paper grapples with is whether heterogeneous foundation models can collaborate effectively within agentic systems. The answer they propose is a resounding yes, provided there’s an interface layer—a digital Tsaheylu—that allows language models to guide inference without forcing everything through the restrictive text pipeline.

The framework proposes keeping specialists doing specialist work, and giving them a reasoning interface so the LLM can coordinate them.

It’s a profoundly elegant solution: instead of trying to force every AI into a language-shaped box, Eywa advocates for keeping specialized models focused on their strengths. The language model then acts as a conductor, orchestrating these specialists, directing their specialized computations, and integrating their results into a cohesive, understandable output. This isn’t about replacing domain expertise with LLM fluency; it’s about enabling a symbiotic relationship where each component plays to its evolutionary advantage.
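To make the conductor idea concrete, here is a minimal Python sketch. It is not Eywa's actual interface, which this article does not describe. Every name in it (`Specialist`, `Orchestrator`, `plan`, `solve`) is hypothetical, the two specialists are toy functions standing in for real domain models, and the planning step is a lookup standing in for a language model's decision. The point it illustrates is only that the data reaches the specialist in its native form, and only the result is turned into text.

```python
from dataclasses import dataclass
from typing import Any, Callable, List


@dataclass
class Specialist:
    """Hypothetical wrapper around a domain model that consumes native data."""
    name: str
    modality: str
    run: Callable[[Any], Any]


def extrapolate_trend(series: List[float]) -> float:
    """Toy stand-in for a time series model: continue the last observed step."""
    return series[-1] + (series[-1] - series[-2])


def average_columns(table: List[List[float]]) -> List[float]:
    """Toy stand-in for a tabular model: mean of each column."""
    return [sum(col) / len(col) for col in zip(*table)]


class Orchestrator:
    """Hypothetical coordinator; plan() is where an LLM call would go."""

    def __init__(self, specialists: List[Specialist]) -> None:
        self.by_modality = {s.modality: s for s in specialists}

    def plan(self, task: str, modality: str) -> Specialist:
        # Assumption: a real system would let the language model choose here.
        return self.by_modality[modality]

    def solve(self, task: str, modality: str, data: Any) -> str:
        specialist = self.plan(task, modality)
        result = specialist.run(data)  # native data, never serialized to text
        return f"{task}: {specialist.name} returned {result}"


orchestrator = Orchestrator([
    Specialist("trend_extrapolator", "time_series", extrapolate_trend),
    Specialist("column_averager", "table", average_columns),
])

forecast_answer = orchestrator.solve(
    "Forecast the next value", "time_series", [1.0, 2.0, 3.0]
)
table_answer = orchestrator.solve(
    "Summarize each column", "table", [[1.0, 2.0], [3.0, 4.0]]
)
print(forecast_answer)
print(table_answer)
```

In this sketch the coordinator only ever sees the task description and the specialist's output. Swapping the toy functions for real models would not change that division of labor.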

This architectural shift promises to unlock a new generation of AI agents, ones capable of truly understanding and interacting with the complex, multi-modal data that underpins our scientific and industrial worlds. The implications for fields like drug discovery, climate modeling, and advanced materials science—where data is rich, heterogeneous, and often non-linguistic—are immense. It’s a move away from the brittle, text-bound agents of today towards a future where AI systems can genuinely collaborate with the world’s data in its native form.

Will This Replace My Job?

Eywa’s architecture suggests a future where AI agents are more like highly skilled collaborators than autonomous decision-makers. Instead of replacing jobs, it could augment them by providing researchers and analysts with more powerful tools to interact with complex data. The focus shifts from performing rote tasks to higher-level strategy and interpretation, areas where human oversight remains critical.

What is Serialization in AI?

Serialization in AI refers to the process of converting complex data structures, such as images, time series, or molecular graphs, into a linear sequence of tokens that a language model can process. This is typically done to allow Large Language Models (LLMs) to “understand” and reason over data that isn’t inherently text-based. However, this conversion often leads to a loss of information and nuance.
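A small Python illustration of that loss, under stated assumptions: the `serialize` and `deserialize` helpers are hypothetical, and the one-decimal rounding is deliberately coarse to make the effect visible. Real pipelines may keep more digits, but the same trade-off between text length and precision applies.

```python
def serialize(series, decimals=1):
    """Render a numeric series as comma-separated text, rounding each value."""
    return ", ".join(f"{value:.{decimals}f}" for value in series)


def deserialize(text):
    """Parse the comma-separated text back into floats."""
    return [float(token) for token in text.split(",")]


# Four distinct readings that differ only in the second decimal place and beyond.
series = [0.1234, 0.1251, 0.1198, 0.1302]

text = serialize(series, decimals=1)
recovered = deserialize(text)

# Largest gap between an original reading and what survives the round trip.
max_error = max(abs(a - b) for a, b in zip(series, recovered))

print(text)
print(recovered)
print(max_error)
```

After the round trip all four readings collapse to the same value, so the variation between them is no longer available to anything that only reads the text.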

How does the Eywa framework differ from traditional LLM agents?

Traditional LLM agents primarily rely on serializing all input data into text before processing. This limits their ability to accurately handle complex, non-textual scientific data. The Eywa framework, inspired by the Tsaheylu neural bond in Avatar, creates an interface that allows specialized foundation models (e.g., for time series, chemistry, physics) to collaborate directly with LLMs. This means domain-specific models can process their native data without information loss through text serialization, while LLMs can still guide and interpret their actions, leading to more robust and accurate AI agents.

