DeepSeek V4: Why the $0.04 Model Crushed Pro-Max

Did DeepSeek V4's $0.04-per-million-token Flash mode just outgun its pricier sibling? We tested 4 modes on 20 real-world tasks, and the cheapest one came out ahead on 7 of them.

⚡ Key Takeaways

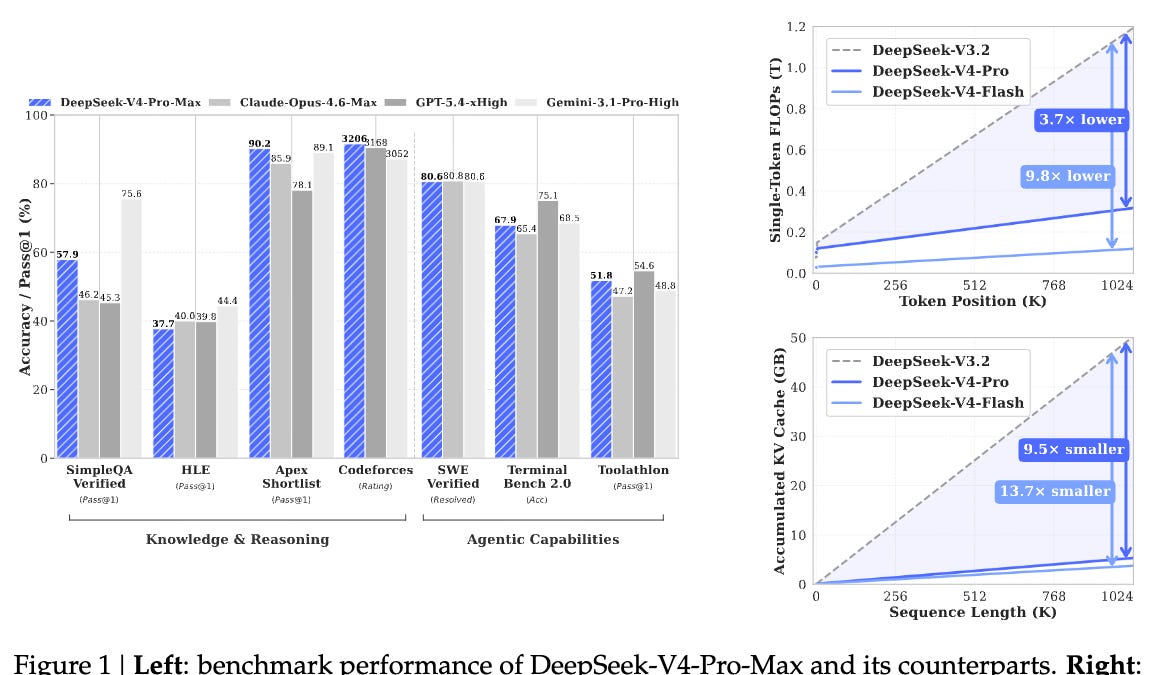

- DeepSeek V4's $0.04/million token 'Flash' mode surprisingly outperformed pricier versions on 7 of 20 real-world tasks.

- A "10% KV cache trick" is cited as a key architectural innovation enabling the Flash model's efficiency.

- The Pro-Max mode used 4.3x more tokens for a marginal 2-point performance gain, highlighting potential over-engineering and cost inefficiency.
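
To put the Pro-Max trade-off in concrete terms, here is a rough sketch of the arithmetic. It assumes "4.3x more tokens" means 4.3 times the token usage of whichever mode Pro-Max was compared against, and that the 2-point gain is measured on the same scale. The function name and the "baseline" framing are illustrative, not taken from the original report.

```python
def extra_tokens_per_point(token_multiplier: float, score_gain: float) -> float:
    """Extra token usage per point gained, in multiples of the baseline's usage.

    Assumption: token_multiplier is total usage relative to the baseline
    (4.3 means 4.3 times as many tokens), so the extra usage is multiplier - 1.
    """
    if score_gain <= 0:
        raise ValueError("score_gain must be positive")
    return (token_multiplier - 1.0) / score_gain


# Figures quoted in the takeaways: 4.3x the tokens for a 2-point gain.
ratio = extra_tokens_per_point(token_multiplier=4.3, score_gain=2.0)
print(f"{ratio:.2f}x baseline tokens per extra point")
```

Under those assumptions, each additional point costs roughly 1.65 times the baseline's entire token usage. Turning that into dollars would also require the Pro-Max per-token price, which is not given here.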


Originally reported by Towards AI