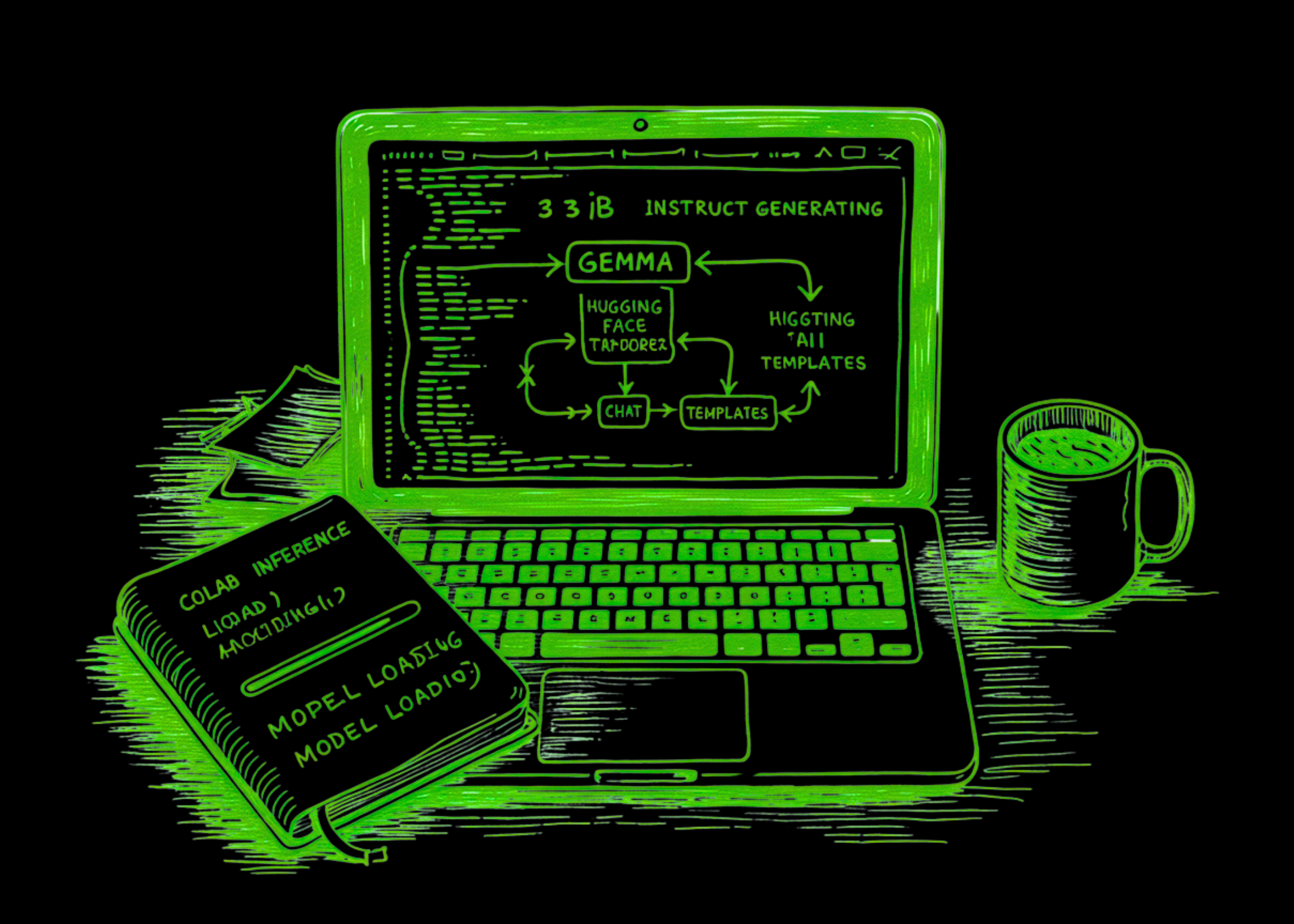

Gemma 3's 1B Model in Colab: Real Pipeline or Just Notebook Toy?

Google's Gemma 3 1B slips into Colab like a hot knife through butter. But is this tiny model your ticket to cheap, local AI—or just a dev distraction?

⚡ Key Takeaways

- Gemma 3 1B loads in Colab in seconds and generates JSON reliably at low temperature.

- A free Colab pipeline beats paying API costs while prototyping, and the model scales to edge devices.

- Unique angle: small models like this mirror ARM's mobile chip revolution, this time for the on-device AI boom.
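
To make the low-temperature JSON takeaway concrete, here is a minimal sketch of what such a Colab cell might look like. It is not the original tutorial's code. The model id `google/gemma-3-1b-it`, the helper names `extract_json` and `generate_json`, and the sampling settings are assumptions chosen for illustration, and the checkpoint is gated on Hugging Face, so a logged-in token is needed before it will download.

```python
import json
from typing import Any, Optional

# Assumption: the instruction-tuned 1B checkpoint on Hugging Face (gated; needs an accepted license).
MODEL_ID = "google/gemma-3-1b-it"


def extract_json(text: str) -> Optional[Any]:
    """Return the first valid JSON object embedded in text, or None if there is none."""
    decoder = json.JSONDecoder()
    start = text.find("{")
    while start != -1:
        try:
            # raw_decode parses one JSON value starting at `start` and ignores trailing text.
            obj, _ = decoder.raw_decode(text, start)
            return obj
        except json.JSONDecodeError:
            # Not valid JSON from this brace; try the next one.
            start = text.find("{", start + 1)
    return None


def generate_json(prompt: str, temperature: float = 0.1) -> Optional[Any]:
    """Hypothetical helper: prompt the model at low temperature and parse its reply."""
    # Imported lazily so extract_json works even without transformers installed.
    from transformers import pipeline

    generator = pipeline("text-generation", model=MODEL_ID)
    out = generator(
        prompt,
        max_new_tokens=128,
        do_sample=True,
        temperature=temperature,  # low temperature keeps the output close to deterministic
        return_full_text=False,   # return only the completion, not the prompt
    )
    return extract_json(out[0]["generated_text"])
```

In a Colab cell, something like `generate_json('Return only a JSON object with keys "city" and "country" for Paris.')` would exercise the whole path. Parsing defensively with `extract_json` matters because even at low temperature a small model may wrap its JSON in extra prose.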

Originally reported by MarkTechPost