The smell of ozone and burnt plastic still hung in the air from a server rack that had decided to self-immolate two days prior.

It’s a stark reminder that in the labyrinthine world of complex systems, it’s not just outright malfeasance that bites you. More often, it’s the subtly incorrect: seemingly benign code that fails to account for the broader operational context. And this, alarmingly, is precisely where modern AI coding assistants are starting to show their seams.

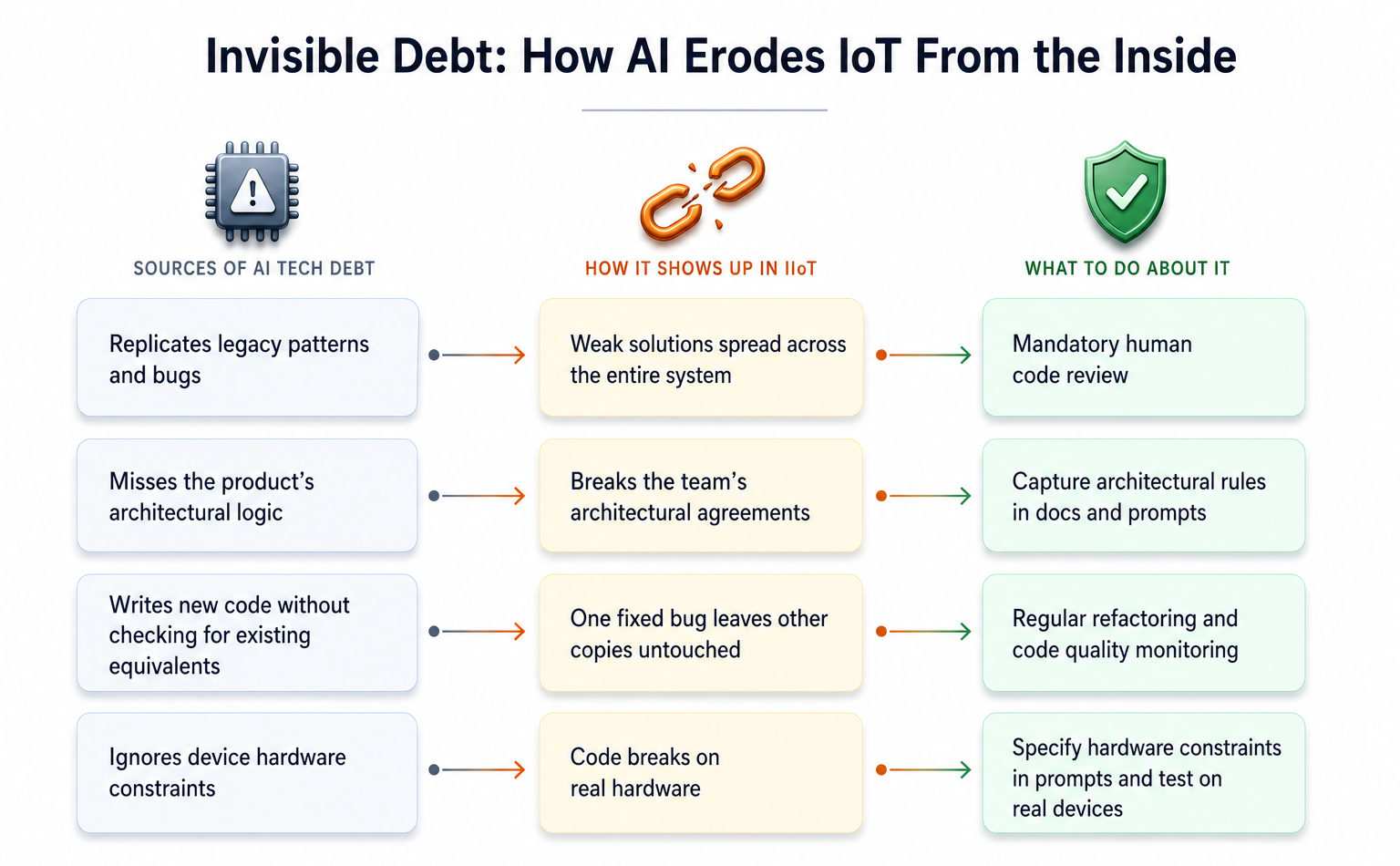

As an IIoT specialist working deep in the trenches of predictive maintenance, I’m seeing a pattern emerge with chilling regularity. AI tools are whipping up functional code that ticks all the local boxes, but they skip any system-wide sanity check: they don’t verify their own assumptions against the larger design. In the unforgiving environment of Industrial IoT, this means a piece of code can be perfect for its immediate task (a specific function or microservice, say) yet completely blind to hardware constraints, the delicate flow of data across networks, inviolable architectural boundaries, or the brutal realities of devices operating in the field. The consequence? Code that’s locally sound becomes a vector for systemic failures, demanding expensive, time-consuming fixes that stunt the entire platform’s growth.

The Echo Chamber of Bad Habits

AI assistants operate by learning from the vast ocean of existing code. The problem is, they don’t inherently possess architectural judgment. They see the code you give them, the code around them, and they infer what “good” looks like. If your project is already saddled with outdated approaches, clumsy data duplication, or “hacks” masquerading as solutions, the AI doesn’t just learn from it; it adopts it as gospel. It becomes an echo chamber, not just preserving poor practices, but amplifying them with alarming speed. This isn’t theoretical hand-wringing, either. A study analyzing over 300,000 AI-generated commits across thousands of real-world repositories found that more than 15% of these commits carried at least one code quality issue, and a quarter of those remained unfixed in the final code.

In IoT systems, this inherited technical debt is a wildfire. A shaky solution baked into firmware, a flimsy gateway service, or a porous telemetry processor doesn’t stay isolated. It propagates with terrifying efficiency, a silent contagion from the device all the way up to the cloud.

The Illusion of ‘Quick Fixes’

AI excels at discrete, well-defined tasks. Need a unit test? Boilerplate code? A standard CRUD endpoint? The AI can churn it out in moments. But it lacks the holistic view. It doesn’t know which databases hold which data, what the acceptable throughput limits are, or how the different components are meant to fit together. Ox Security’s analysis of over 300 open-source projects, a significant share of which were AI-assisted, found functional code, yes, but code conspicuously devoid of architectural foresight. Optimizing for the immediate prompt, and lacking explicit architectural guardrails (whether in documentation, design records, or the prompt itself), the AI will happily create code that subtly sabotages the established system topology.

Imagine an IIoT system where time-series data, reference data, and logs are diligently stored in separate, purpose-built databases. An AI asked to store a new kind of data may be blissfully unaware of this arrangement and generate code that quietly violates it, for instance by writing sensor readings into the reference database, forcing convoluted data retrieval down the line.
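
One way to make the storage agreement enforceable, rather than tribal knowledge, is to route every write through a single entry point that knows which store owns which kind of data. The minimal Python sketch below assumes three hypothetical stores (`tsdb`, `reference_db`, `log_store`) and a hypothetical `StorageRouter` class; in a real system the writers would wrap actual database clients.

```python
from dataclasses import dataclass
from enum import Enum


class DataKind(Enum):
    TIME_SERIES = "time_series"
    REFERENCE = "reference"
    LOG = "log"


# Hypothetical mapping from data category to the store that owns it.
# In practice this should mirror the project's design records.
STORE_FOR_KIND = {
    DataKind.TIME_SERIES: "tsdb",
    DataKind.REFERENCE: "reference_db",
    DataKind.LOG: "log_store",
}


@dataclass
class Record:
    kind: DataKind
    payload: dict


class StorageRouter:
    """Single entry point for writes; rejects writes that contradict the mapping."""

    def __init__(self, writers):
        # writers: store name -> callable(payload) that performs the actual write
        missing = set(STORE_FOR_KIND.values()) - set(writers)
        if missing:
            raise ValueError(f"no writer registered for: {sorted(missing)}")
        self._writers = writers

    def write(self, record: Record) -> str:
        """Write to the store that owns this kind of data; return the store name."""
        store = STORE_FOR_KIND[record.kind]
        self._writers[store](record.payload)
        return store

    def write_to(self, store: str, record: Record) -> str:
        """Explicit-target write, refused if the target is not the owning store."""
        expected = STORE_FOR_KIND[record.kind]
        if store != expected:
            raise PermissionError(
                f"{record.kind.value} data belongs in {expected!r}, not {store!r}"
            )
        return self.write(record)
```

Because generated code that tries to push sensor readings into `reference_db` fails loudly with a `PermissionError`, the violation surfaces in tests instead of months later during a painful data migration.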

The Hidden Cost of Logic Duplication

And then there’s the sheer proliferation of duplicated logic. An AI assistant, by its nature, doesn’t know that the exact functionality you need (say, parsing a specific data packet or validating a network connection) already exists elsewhere in your sprawling codebase. So it writes it again. The result is a hydra of identical logic scattered across your system. When a change is eventually required, whether a bug fix or a performance tweak, developers are suddenly on a treasure hunt, trying to locate every single instance of that duplicated code. GitClear’s analysis of millions of lines of code between 2020 and 2024 showed a disturbing trend: duplicated code rose from 8.3% to 12.3%, with 2024 marking the first year in which duplication outpaced refactoring. AI tools threaten to accelerate this further. They offer the seductive ease of inserting new code with a single command, but rarely prompt a developer to check whether similar code already exists.
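
Finding existing duplicates is the first step toward consolidating them. Below is a minimal sketch of a naive copy-paste detector, assuming Python sources: it strips whitespace and `#` comments, hashes every run of `window` consecutive lines, and reports hashes that appear in more than one place. The six-line threshold and all function names are illustrative assumptions; this is not GitClear’s methodology, and dedicated clone detectors tokenize code rather than comparing stripped lines.

```python
import hashlib
from collections import defaultdict
from pathlib import Path

WINDOW = 6  # hypothetical threshold: flag runs of 6+ identical normalized lines


def normalized_lines(text: str) -> list[tuple[int, str]]:
    """Return (line_number, stripped_line) pairs, skipping blanks and '#' comments."""
    out = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        stripped = line.strip()
        if stripped and not stripped.startswith("#"):
            out.append((lineno, stripped))
    return out


def find_duplicate_blocks(sources: dict[str, str], window: int = WINDOW):
    """Map each hashed window of consecutive normalized lines to every location it occurs."""
    seen = defaultdict(list)
    for name, text in sources.items():
        lines = normalized_lines(text)
        for i in range(len(lines) - window + 1):
            chunk = "\n".join(line for _, line in lines[i : i + window])
            digest = hashlib.sha256(chunk.encode()).hexdigest()
            seen[digest].append((name, lines[i][0]))
    # Keep only windows that occur in more than one place.
    return {h: locs for h, locs in seen.items() if len(locs) > 1}


def scan_tree(root: str, pattern: str = "*.py", window: int = WINDOW):
    """Read every matching file under root and report duplicated blocks."""
    sources = {
        str(p): p.read_text(encoding="utf-8", errors="replace")
        for p in Path(root).rglob(pattern)
    }
    return find_duplicate_blocks(sources, window)
```

A long duplicated run produces one hit per overlapping window, so a real report would merge adjacent hits; running something like `scan_tree("src")` in CI and failing on new hits is one way to make duplication visible at review time.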

In IoT, this is a nightmare scenario. If the same packet-parsing logic is implemented independently in firmware, in a gateway, and in a cloud service, fixing a bug in one instance without finding the others means devices will start behaving inconsistently. Synchronizing firmware updates across thousands, or even millions, of devices in the field to correct such subtle inconsistencies is a monumental, often insurmountable, task.
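
The structural fix is a single parser that every tier shares. The sketch below assumes a hypothetical 10-byte big-endian packet (device ID, timestamp, temperature in hundredths of a degree, vibration RMS in milli-g) and shows one Python module that a gateway and a cloud service could both import. Firmware is often written in C or a similar language, so across languages the same principle typically means generating each tier’s parser from one shared format definition rather than hand-writing three.

```python
import struct
from dataclasses import dataclass

# Hypothetical wire format: big-endian device_id (u16), timestamp (u32 seconds),
# temperature (i16, hundredths of a degree C), vibration RMS (u16, milli-g).
PACKET_FORMAT = ">HIhH"
PACKET_SIZE = struct.calcsize(PACKET_FORMAT)  # 10 bytes, no padding with ">"


@dataclass(frozen=True)
class Reading:
    device_id: int
    timestamp: int
    temperature_c: float
    vibration_g: float


def parse_packet(data: bytes) -> Reading:
    """The one parser every tier calls: fix a bug here and every tier gets the fix."""
    if len(data) != PACKET_SIZE:
        raise ValueError(f"expected {PACKET_SIZE} bytes, got {len(data)}")
    device_id, ts, temp_centi, vib_milli = struct.unpack(PACKET_FORMAT, data)
    return Reading(device_id, ts, temp_centi / 100.0, vib_milli / 1000.0)


def encode_packet(r: Reading) -> bytes:
    """Inverse of parse_packet, useful for tests and device simulators."""
    return struct.pack(
        PACKET_FORMAT,
        r.device_id,
        r.timestamp,
        round(r.temperature_c * 100),
        round(r.vibration_g * 1000),
    )
```

Keeping `encode_packet` beside `parse_packet` lets a round-trip test pin down the format, so any tier that drifts from it fails in CI rather than in the field.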

Taming the AI Beast: A Pragmatic Approach

So, what’s the answer? Abandon AI tools altogether? That seems as unlikely as un-inventing the printing press. The real battleground, as always, lies in governance and intelligent integration. It’s about building strong guardrails. This means being extraordinarily explicit in our prompts, defining architectural constraints and desired outcomes with surgical precision. It means rigorous code reviews, not just for functional correctness, but for architectural alignment. We need to treat AI-generated code as suggestions that require validation, not as infallible commands.
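
Being explicit in prompts is easier when the constraints live in one versioned place instead of in each developer’s head. The sketch below assumes a hypothetical set of IIoT rules and a hypothetical `build_prompt` helper that prefixes every coding request with them; the rule text and wording are illustrative, not a recommended prompt format for any particular assistant.

```python
# Hypothetical architectural constraints for an IIoT platform; in practice these
# would sit alongside the project's design records and be versioned with the code.
CONSTRAINTS = {
    "storage": [
        "Time-series sensor data goes only to the time-series database.",
        "Reference data (assets, sites, thresholds) goes only to the reference database.",
        "Logs go only to the log store.",
    ],
    "reuse": [
        "Parse device packets only via the shared packet-parsing module; never re-implement it.",
    ],
    "gateway": [
        "Gateway services must not call cloud databases directly.",
    ],
}


def build_prompt(task: str, constraints: dict[str, list[str]] = CONSTRAINTS) -> str:
    """Prefix a coding task with every architectural rule, grouped by area."""
    lines = ["You must follow these architectural constraints:"]
    for area in sorted(constraints):  # stable ordering keeps prompts diffable
        lines.append(f"[{area}]")
        lines.extend(f"- {rule}" for rule in constraints[area])
    lines.append("")
    lines.append(f"Task: {task}")
    lines.append("If the task conflicts with a constraint, say so instead of writing code.")
    return "\n".join(lines)
```

Because the same `CONSTRAINTS` list can also feed review checklists and automated checks, prompts and reviews stay anchored to one definition of the architecture.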

Think of it like this: If a junior engineer wrote code that introduced architectural drift, you’d spot it in a code review. We need to apply that same scrutiny, that same architectural radar, to AI-generated contributions. The future of strong, maintainable systems—especially in demanding fields like IoT—depends on our ability to guide these powerful tools, not merely be guided by them.
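
Part of that architectural radar can be automated so reviewers don’t have to catch every boundary violation by eye. The sketch below uses Python’s standard `ast` module to list the top-level packages a file imports and flags any that a hypothetical layering rule forbids (for example, gateway code importing a cloud database client). The package names in `FORBIDDEN_IMPORTS` are assumptions for illustration; tools such as import-linter offer a fuller version of this idea.

```python
import ast

# Hypothetical layering rule: code in the key package must not import any of
# the listed packages. Names are illustrative, not from a real project.
FORBIDDEN_IMPORTS = {
    "gateway": {"cloud_db", "psycopg2"},
    "firmware_tools": {"cloud_db"},
}


def imported_modules(source: str) -> set[str]:
    """Top-level package names imported by a Python source string."""
    tree = ast.parse(source)
    names = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            names.update(alias.name.split(".")[0] for alias in node.names)
        # Relative imports (level > 0) stay inside the package, so skip them.
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            names.add(node.module.split(".")[0])
    return names


def boundary_violations(package: str, source: str) -> list[str]:
    """Return the forbidden imports found in `source`, which belongs to `package`."""
    banned = FORBIDDEN_IMPORTS.get(package, set())
    return sorted(imported_modules(source) & banned)
```

Run against every changed file in CI, a non-empty result can block the merge, whether the offending import came from a person or an assistant.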

The Future of AI and Technical Debt

This isn’t merely a technical issue; it’s an economic one. The cost of refactoring AI-introduced technical debt can easily dwarf any initial development speed gains. Companies that fail to establish strong validation pipelines for AI-generated code will find themselves perpetually chasing bugs and architectural inconsistencies, ultimately slowing innovation and eroding customer trust. The promise of AI is speed and efficiency; the peril is its ability to mask decay until it’s too late to salvage.

Frequently Asked Questions

What does AI-generated technical debt mean for developers? It means that code generated by AI, while seemingly functional, may introduce subtle architectural flaws or duplicated logic that will take significant time and effort to fix later, slowing down future development.

Can AI tools actually replace human developers? While AI tools can automate many coding tasks, they currently lack the critical architectural judgment, contextual understanding, and problem-solving creativity of experienced human developers. They are best viewed as powerful assistants, not replacements.

How can companies prevent AI from creating technical debt? Companies need to implement strict governance policies, detailed prompt engineering, rigorous code review processes focused on architectural adherence, and continuous monitoring of code quality and system consistency.