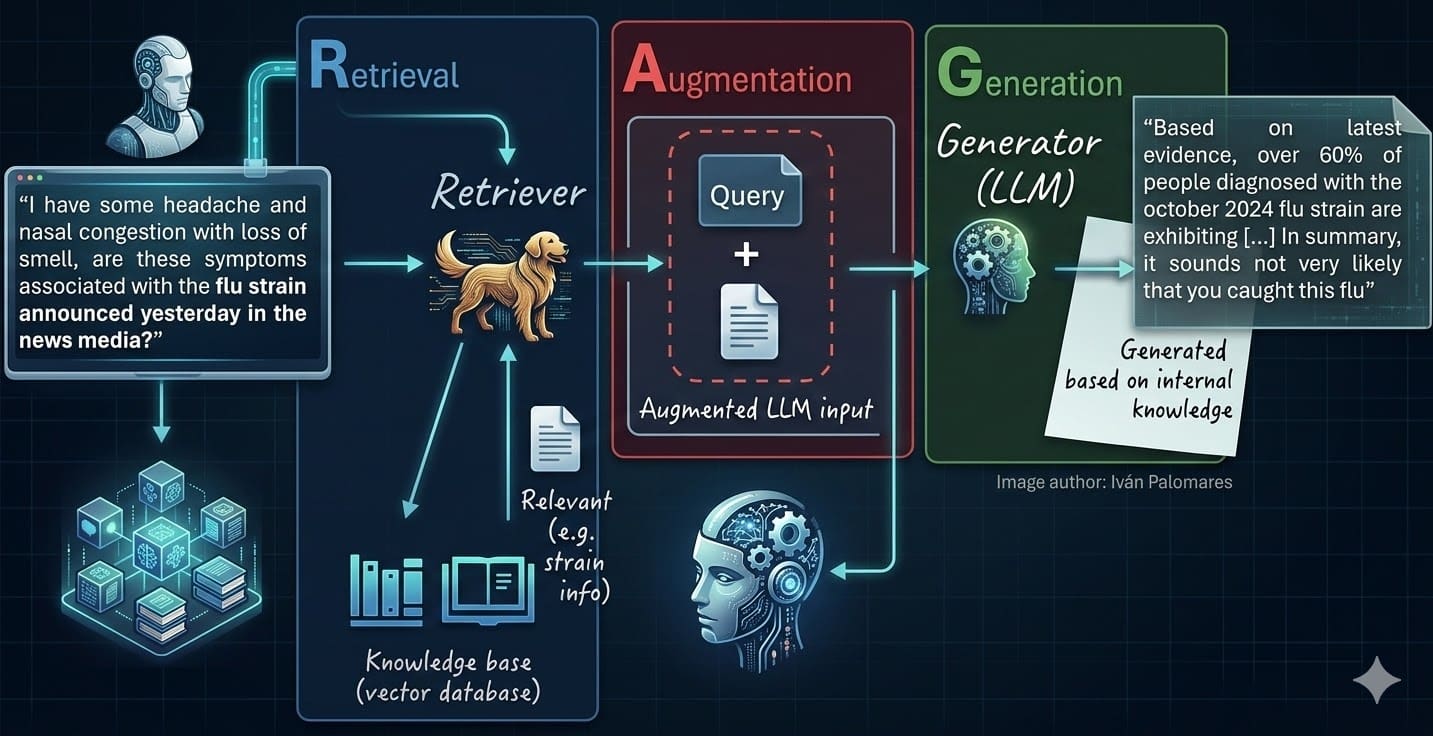

RAG: The Only Thing Keeping Your Enterprise LLM from Total Hallucination Meltdown

Your LLM's bombing on company docs? It's not the model—it's your architecture. RAG fixes that mess, if you don't screw up the basics.

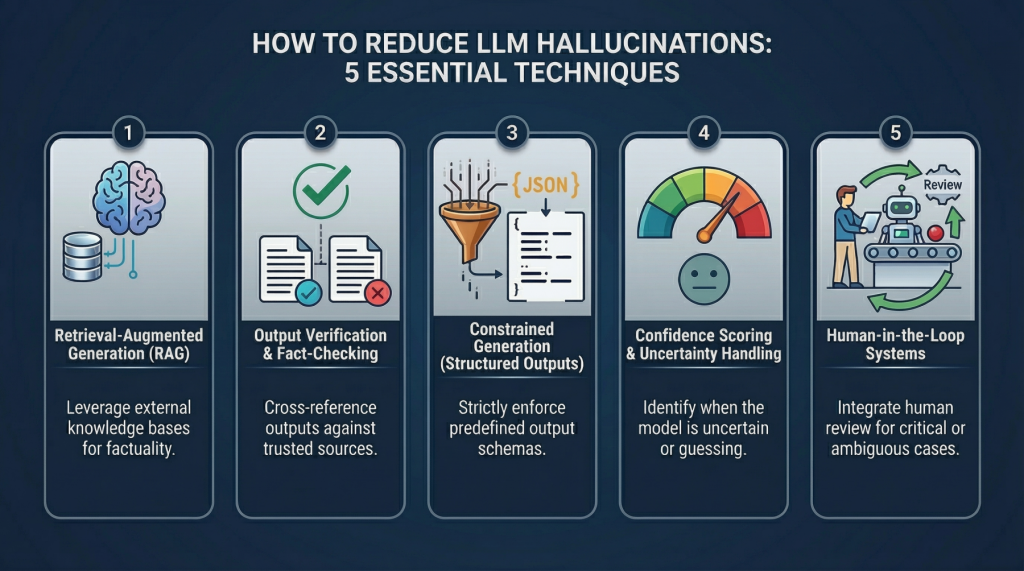

Originally reported by Towards Data Science