LLaMA on Groq Extracts Features 10x Faster, Lifting Classifier Accuracy 28% on Ticket Data

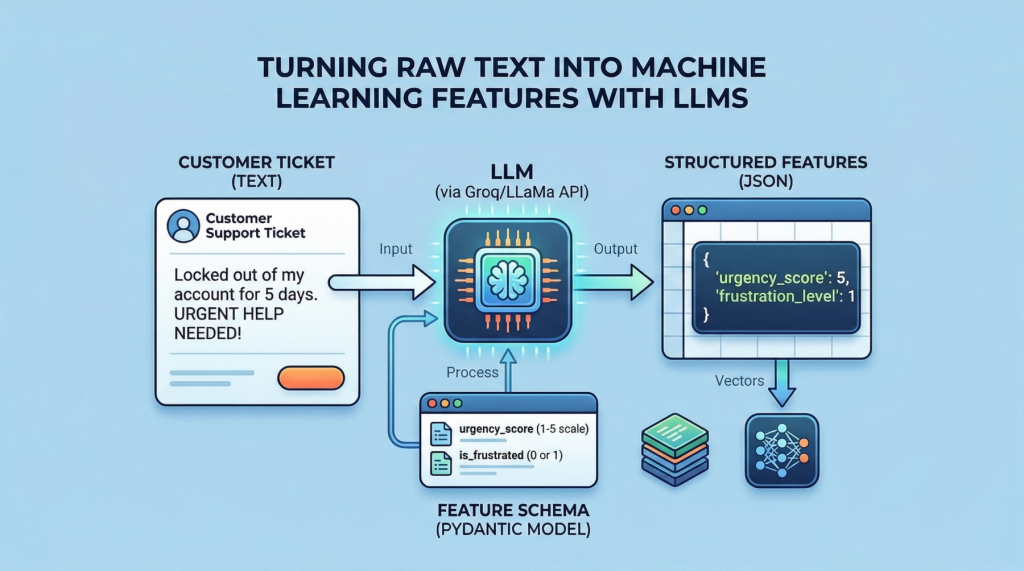

Forget manual text parsing. A single API call to a LLaMA model hosted on Groq turns messy support tickets into structured features that lift a random forest's accuracy from 72% to over 90%. Here's how, and why it's the future of hybrid ML.
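
The "hybrid" part is easy to picture: the LLM's output simply becomes extra columns next to the numeric data a ticket already has. Here is a minimal, dependency-free sketch of that merge step. It assumes the model returns a small dict per ticket. The field names (`category`, `urgency`, `sentiment`, `mentions_refund`), their vocabularies, and the numeric columns are hypothetical and do not come from the original write-up.

```python
from typing import Dict, List, Sequence

# Hypothetical vocabularies, fixed up front so every row gets the same columns.
CATEGORIES: Sequence[str] = ("billing", "technical", "account", "other")
SENTIMENTS: Sequence[str] = ("negative", "neutral", "positive")


def one_hot(value: str, vocab: Sequence[str]) -> List[float]:
    """One-hot encode a categorical string; values outside vocab map to all zeros."""
    return [1.0 if value == v else 0.0 for v in vocab]


def to_feature_vector(numeric: Sequence[float], extracted: Dict[str, object]) -> List[float]:
    """Concatenate existing numeric columns with LLM-extracted fields.

    `extracted` is assumed to look like (hypothetical fields):
    {"category": "billing", "urgency": 4, "sentiment": "negative", "mentions_refund": True}
    """
    row = [float(x) for x in numeric]                          # original tabular features
    row += one_hot(str(extracted["category"]), CATEGORIES)     # 4 columns
    row.append(float(extracted["urgency"]))                    # ordinal 1-5, kept numeric
    row += one_hot(str(extracted["sentiment"]), SENTIMENTS)    # 3 columns
    row.append(1.0 if extracted["mentions_refund"] else 0.0)   # boolean flag
    return row


if __name__ == "__main__":
    # Hypothetical numeric columns: account_age_days, prior_tickets.
    vec = to_feature_vector(
        [420, 3],
        {"category": "billing", "urgency": 4, "sentiment": "negative", "mentions_refund": True},
    )
    print(vec)  # -> [420.0, 3.0, 1.0, 0.0, 0.0, 0.0, 4.0, 1.0, 0.0, 0.0, 1.0]
```

Rows built this way can be stacked into a matrix and passed as `X` to a classifier such as scikit-learn's `RandomForestClassifier`. One-hot encoding is one common choice here; tree ensembles also cope with integer-coded categories, so either would be reasonable.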

⚡ Key Takeaways

- LLaMA on Groq extracts structured features from text at 500+ tokens/sec, slashing preprocessing time.

- On hybrid text-and-numeric ticket data, random forest accuracy rises about 28% in relative terms (72% to over 90%) compared with a baseline without the LLM-extracted features.

- Pydantic schemas + OpenAI-compatible APIs make this plug-and-play for any tabular ML pipeline.
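
To make the schema-plus-OpenAI-compatible-API idea concrete, here is a sketch of the extraction call against Groq's OpenAI-compatible Chat Completions endpoint using JSON mode. It rests on several assumptions. The model ID, prompt, and feature fields are hypothetical. A stdlib `dataclass` with hand-written checks stands in for the Pydantic model so the example has no third-party dependencies. The official `openai` Python client pointed at `base_url="https://api.groq.com/openai/v1"` should work the same way.

```python
import json
import urllib.request
from dataclasses import dataclass
from typing import Any, Dict

# Groq's OpenAI-compatible Chat Completions endpoint.
GROQ_URL = "https://api.groq.com/openai/v1/chat/completions"
# Assumption: swap in whichever LLaMA model ID Groq currently serves.
MODEL = "llama-3.1-8b-instant"

# Hypothetical label sets for the extracted fields.
CATEGORIES = {"billing", "technical", "account", "other"}
SENTIMENTS = {"negative", "neutral", "positive"}

SYSTEM_PROMPT = (
    "Extract features from the support ticket. Reply with a JSON object with keys: "
    '"category" (one of "billing", "technical", "account", "other"), '
    '"urgency" (integer 1-5), '
    '"sentiment" (one of "negative", "neutral", "positive"), '
    '"mentions_refund" (boolean).'
)


@dataclass(frozen=True)
class TicketFeatures:
    """Stand-in for a Pydantic model: typed fields, validated on construction."""

    category: str
    urgency: int
    sentiment: str
    mentions_refund: bool

    def __post_init__(self) -> None:
        if self.category not in CATEGORIES:
            raise ValueError(f"unexpected category: {self.category!r}")
        # bool is a subclass of int, so reject it explicitly.
        if isinstance(self.urgency, bool) or not isinstance(self.urgency, int):
            raise ValueError(f"urgency must be an integer, got {self.urgency!r}")
        if not 1 <= self.urgency <= 5:
            raise ValueError(f"urgency out of range: {self.urgency}")
        if self.sentiment not in SENTIMENTS:
            raise ValueError(f"unexpected sentiment: {self.sentiment!r}")
        if not isinstance(self.mentions_refund, bool):
            raise ValueError(f"mentions_refund must be a boolean, got {self.mentions_refund!r}")


def build_payload(ticket: str) -> Dict[str, Any]:
    """Chat Completions request body; JSON mode asks the model for a JSON object."""
    return {
        "model": MODEL,
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": ticket},
        ],
        "response_format": {"type": "json_object"},
        "temperature": 0,  # favor repeatable extractions
    }


def parse_response(body: Dict[str, Any]) -> TicketFeatures:
    """Pull the message text out of an OpenAI-style response and validate it."""
    content = body["choices"][0]["message"]["content"]
    return TicketFeatures(**json.loads(content))


def extract_features(ticket: str, api_key: str) -> TicketFeatures:
    """POST one ticket to Groq and return validated features (makes a network call)."""
    request = urllib.request.Request(
        GROQ_URL,
        data=json.dumps(build_payload(ticket)).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
            # Explicit User-Agent; some API front ends reject urllib's default one.
            "User-Agent": "ticket-feature-sketch/0.1",
        },
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=30) as response:
        return parse_response(json.load(response))
```

Call it as `extract_features(ticket_text, os.environ["GROQ_API_KEY"])`. Out-of-vocabulary or mistyped values raise `ValueError`, and missing or extra keys raise `TypeError` from the dataclass constructor. A bad extraction can therefore be logged and retried instead of silently becoming a garbage feature.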

Originally reported by Machine Learning Mastery