DenseNet's Wild Web of Connections: Rewiring Deep Learning's Backbone

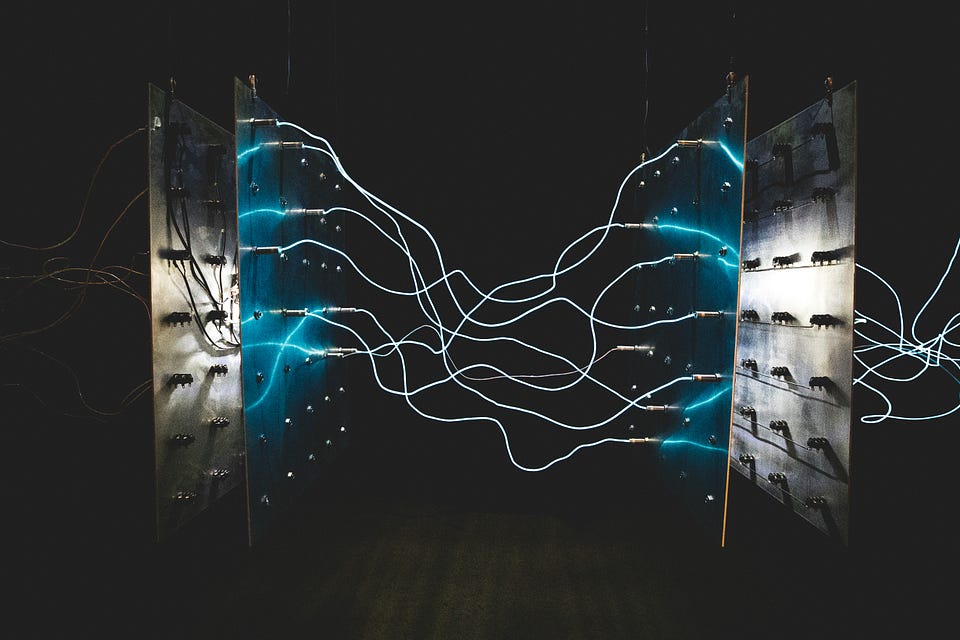

Picture this: gradients vanishing in a 100-layer CNN nightmare. DenseNet doesn't just skip layers. It connects every damn one, wiring each layer in a dense block to every layer after it, cutting parameters while keeping gradients and features flowing.
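
To make that wiring concrete, here is a minimal pure-Python sketch of one dense block. It assumes toy "feature maps" that are plain lists of floats and a hypothetical `toy_layer` that stands in for a real DenseNet layer (which would be BN, ReLU and a convolution). Each layer sees the concatenation of the block input and every earlier layer's output, then contributes `growth_rate` new channels of its own.

```python
from typing import Callable, List

Features = List[float]  # toy stand-in for a stack of channels


def toy_layer(growth_rate: int) -> Callable[[Features], Features]:
    """Hypothetical layer: maps any input width to `growth_rate` new features.

    A real DenseNet layer is BN -> ReLU -> Conv; here each output is just the
    mean of the inputs scaled by (k + 1), which is enough to show the data flow.
    """
    def layer(x: Features) -> Features:
        mean = sum(x) / len(x)
        return [mean * (k + 1) for k in range(growth_rate)]
    return layer


def dense_block(x0: Features, num_layers: int, growth_rate: int) -> Features:
    """Run a toy dense block: layer l receives concat(x0, x1, ..., x_{l-1})."""
    collected: List[Features] = [x0]
    for _ in range(num_layers):
        layer = toy_layer(growth_rate)
        # List concatenation plays the role of channel-wise concatenation.
        concat_input = [v for feats in collected for v in feats]
        collected.append(layer(concat_input))
    # Block output: the input plus every layer's new features, all kept intact.
    return [v for feats in collected for v in feats]


out = dense_block([1.0, 2.0, 3.0, 4.0], num_layers=3, growth_rate=2)
print(len(out))  # 4 input channels + 3 layers * 2 new channels = 10
```

The output width grows linearly as input channels plus `num_layers * growth_rate`, so each layer only has to add a few new channels rather than relearn everything it has already been handed.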

⚡ Key Takeaways

- DenseNet's all-to-all connections raise the link count in a block of L layers from L to L(L+1)/2, easing the vanishing-gradient problem.

- Concatenating feature maps instead of summing them (ResNet's approach) preserves earlier features; parameter savings can reach 4x versus traditional CNNs.

- The design prefigures transformer attention and looks poised for a revival in edge AI.
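
The L(L+1)/2 figure counts direct links inside one dense block: each of the L layers gets a link from the block input and from every earlier layer, whereas a plain chain has just L. The sketch below is illustrative, not DenseNet's actual code. It assumes the block input is node 0, enumerates those links, and contrasts DenseNet-style concatenation with ResNet-style summation on toy vectors. The helper names `dense_links`, `combine_sum` and `combine_concat` are hypothetical.

```python
from typing import Dict, List, Tuple


def dense_links(num_layers: int) -> List[Tuple[int, int]]:
    """Enumerate (source, target) links in a dense block.

    Node 0 is the block input; nodes 1..num_layers are the layers.
    Layer l receives a direct link from every node j < l.
    """
    return [(src, dst) for dst in range(1, num_layers + 1) for src in range(dst)]


def combine_sum(a: List[float], b: List[float]) -> List[float]:
    """ResNet-style merge: element-wise addition, width stays the same."""
    return [x + y for x, y in zip(a, b)]


def combine_concat(a: List[float], b: List[float]) -> List[float]:
    """DenseNet-style merge: concatenation, both inputs survive unchanged."""
    return a + b


# Link counts match L(L+1)/2: 1, 10 and 5050 for L = 1, 4 and 100.
counts: Dict[int, int] = {L: len(dense_links(L)) for L in (1, 4, 100)}
print(counts)  # {1: 1, 4: 10, 100: 5050}

early, late = [1.0, 2.0], [0.5, -2.0]
print(combine_sum(early, late))     # [1.5, 0.0]: early and late values merged
print(combine_concat(early, late))  # [1.0, 2.0, 0.5, -2.0]: early values kept
```

Summation keeps the width fixed but blends earlier features into later ones; concatenation grows the width and leaves every earlier feature map directly readable by later layers.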

Originally reported by Towards Data Science