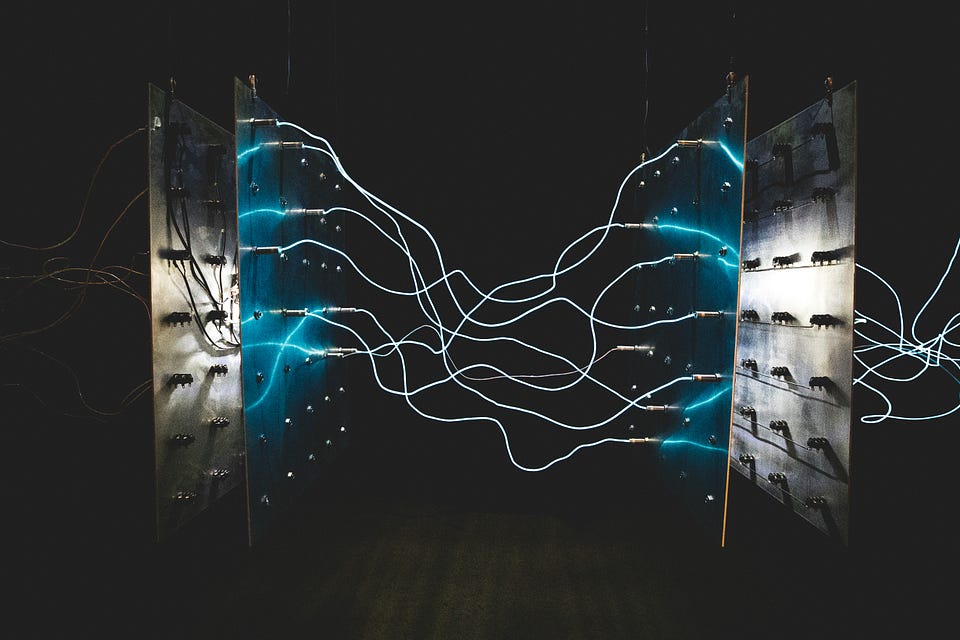

DenseNet's Dense Connections: Why They Outsmarted ResNet on Efficiency

Ever wonder why your deep CNNs guzzle parameters like a V8 engine? DenseNet flips the script with feature reuse that slashes costs fourfold.

Originally reported by Towards Data Science