Open the Python code and the first impression is one of hard-nosed engineering, not abstract theory. This isn’t a conceptual whitepaper; it is about shipping.

Safety as Empirical Measurement

Forget hypothetical risks. Microsoft’s Agent Framework, integrated with its Foundry platform, forces developers to confront model behavior head-on. The default prompt, a request for a homemade explosives recipe, is a stark, immediate demonstration. You see the guarded model refuse and, potentially, the unguarded one capitulate, side by side. This isn’t a philosophical debate; it’s observable data. Latency metrics reported alongside the response text quantify the overhead of safety guardrails, putting a critical and often overlooked trade-off in the developer’s hands. It’s a necessary shift in thinking: an agent’s utility is incomplete if it can’t operate responsibly within defined boundaries. Measuring safety empirically, before any other logic is written, shapes every subsequent architectural choice.
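The side-by-side comparison described above can be approximated in a few lines. This is a minimal sketch, not the framework’s own runner: `compare_models`, `timed_call`, and the two stub models are hypothetical names, and the stubs stand in for real guarded and unguarded deployments.

```python
import time
from typing import Callable, Dict


def timed_call(model: Callable[[str], str], prompt: str) -> Dict[str, object]:
    """Call a model once and record its response text and wall-clock latency."""
    start = time.perf_counter()
    text = model(prompt)
    return {"text": text, "latency_s": time.perf_counter() - start}


def compare_models(
    prompt: str,
    guarded: Callable[[str], str],
    unguarded: Callable[[str], str],
) -> Dict[str, object]:
    """Run the same prompt through both models and report the latency gap."""
    g = timed_call(guarded, prompt)
    u = timed_call(unguarded, prompt)
    return {
        "guarded": g,
        "unguarded": u,
        # Positive value: the guarded path took longer than the unguarded one.
        "overhead_s": g["latency_s"] - u["latency_s"],
    }


# Hypothetical stand-ins for real model endpoints (assumptions, not real APIs).
def guarded_stub(prompt: str) -> str:
    time.sleep(0.02)  # simulated cost of a content filter
    return "I can't help with that."


def unguarded_stub(prompt: str) -> str:
    return f"[unfiltered completion for: {prompt}]"


if __name__ == "__main__":
    report = compare_models("a provocative test prompt", guarded_stub, unguarded_stub)
    print(report["guarded"]["text"])
    print(report["unguarded"]["text"])
    print(f"guardrail overhead: {report['overhead_s']:.3f}s")
```

A single run is noisy; in practice one would likely repeat each call several times and compare medians before drawing conclusions about overhead.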

Connecting Agents to the World: The Model Context Protocol

This is where the rubber meets the road for real-world integration. The Model Context Protocol (MCP) acts as a universal translator, allowing agents to tap into an evolving ecosystem of data sources and tools without constant recoding. Think of it as a standardized plug for your AI agent. The architecture is elegantly simple: an agent (the host application) speaks to MCP servers through a client. These servers can be local, running as a simple subprocess communicating via STDIO for low-latency CLI integrations, or remote web services using HTTP/SSE for broader access. This decoupling is key. It means the agent itself doesn’t need to know if its data source is a local script or a cloud database; the MCP handles the translation.
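To make the decoupling concrete, here is a rough sketch of a client that does not care which transport sits underneath it. The request shape follows the JSON-RPC 2.0 style that MCP messages use, but this is not the official MCP SDK: `Transport`, `LoopbackTransport`, `ToolClient`, and `echo_server` are all hypothetical, and the loopback transport merely stands in for a STDIO subprocess or an HTTP connection.

```python
import json
from typing import Any, Callable, Dict, Protocol


class Transport(Protocol):
    """Anything that can carry one serialized request and return one response."""

    def send(self, message: str) -> str: ...


class LoopbackTransport:
    """Hypothetical stand-in for STDIO or HTTP: hands the request to a handler."""

    def __init__(self, handler: Callable[[Dict[str, Any]], Dict[str, Any]]) -> None:
        self._handler = handler

    def send(self, message: str) -> str:
        return json.dumps(self._handler(json.loads(message)))


class ToolClient:
    """Builds JSON-RPC style tool calls; knows nothing about the transport."""

    def __init__(self, transport: Transport) -> None:
        self._transport = transport
        self._next_id = 0

    def call_tool(self, name: str, arguments: Dict[str, Any]) -> Any:
        self._next_id += 1
        request = {
            "jsonrpc": "2.0",
            "id": self._next_id,
            "method": "tools/call",
            "params": {"name": name, "arguments": arguments},
        }
        response = json.loads(self._transport.send(json.dumps(request)))
        if "error" in response:
            raise RuntimeError(response["error"]["message"])
        return response["result"]


def echo_server(request: Dict[str, Any]) -> Dict[str, Any]:
    """Toy server (an assumption for illustration): echoes the tool call back."""
    params = request["params"]
    return {
        "jsonrpc": "2.0",
        "id": request["id"],
        "result": {"tool": params["name"], "echo": params["arguments"]},
    }


if __name__ == "__main__":
    client = ToolClient(LoopbackTransport(echo_server))
    print(client.call_tool("GetConfig", {"key": "sla_hours"}))
```

Swapping `LoopbackTransport` for a class that writes to a subprocess pipe or posts to a URL would leave `ToolClient` untouched, which is the point of the design.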

A practical four-component implementation for a support ticket domain highlights this flexibility. Tools like GetConfig and UpdateConfig are exposed, demonstrating how disparate functions can be unified under the MCP umbrella. This modularity is crucial for enterprise deployments where systems are complex and constantly changing.
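One way to picture tools being unified under a single interface is a name-to-function registry. The sketch below is an assumption-heavy illustration: the article names the tools GetConfig and UpdateConfig but not their signatures, so the parameters, the `_CONFIG` store, and the `tool` and `dispatch` helpers are all hypothetical.

```python
from typing import Any, Callable, Dict

# Hypothetical in-memory configuration for a support-ticket system.
_CONFIG: Dict[str, Any] = {"sla_hours": 24}

# Registry mapping exposed tool names to plain Python callables.
TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(name: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
    """Decorator that exposes a function under a public tool name."""

    def register(fn: Callable[..., Any]) -> Callable[..., Any]:
        TOOLS[name] = fn
        return fn

    return register


@tool("GetConfig")
def get_config(key: str) -> Any:
    """Return one configuration value (signature is an assumption)."""
    return _CONFIG.get(key)


@tool("UpdateConfig")
def update_config(key: str, value: Any) -> Dict[str, Any]:
    """Set one configuration value and echo the change (signature is an assumption)."""
    _CONFIG[key] = value
    return {"key": key, "value": value}


def dispatch(name: str, arguments: Dict[str, Any]) -> Any:
    """Route a tool call by name, the way a server-side handler might."""
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**arguments)


if __name__ == "__main__":
    print(dispatch("GetConfig", {"key": "sla_hours"}))
    print(dispatch("UpdateConfig", {"key": "sla_hours", "value": 8}))
```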

Orchestration and Workflow: The Secret Sauce

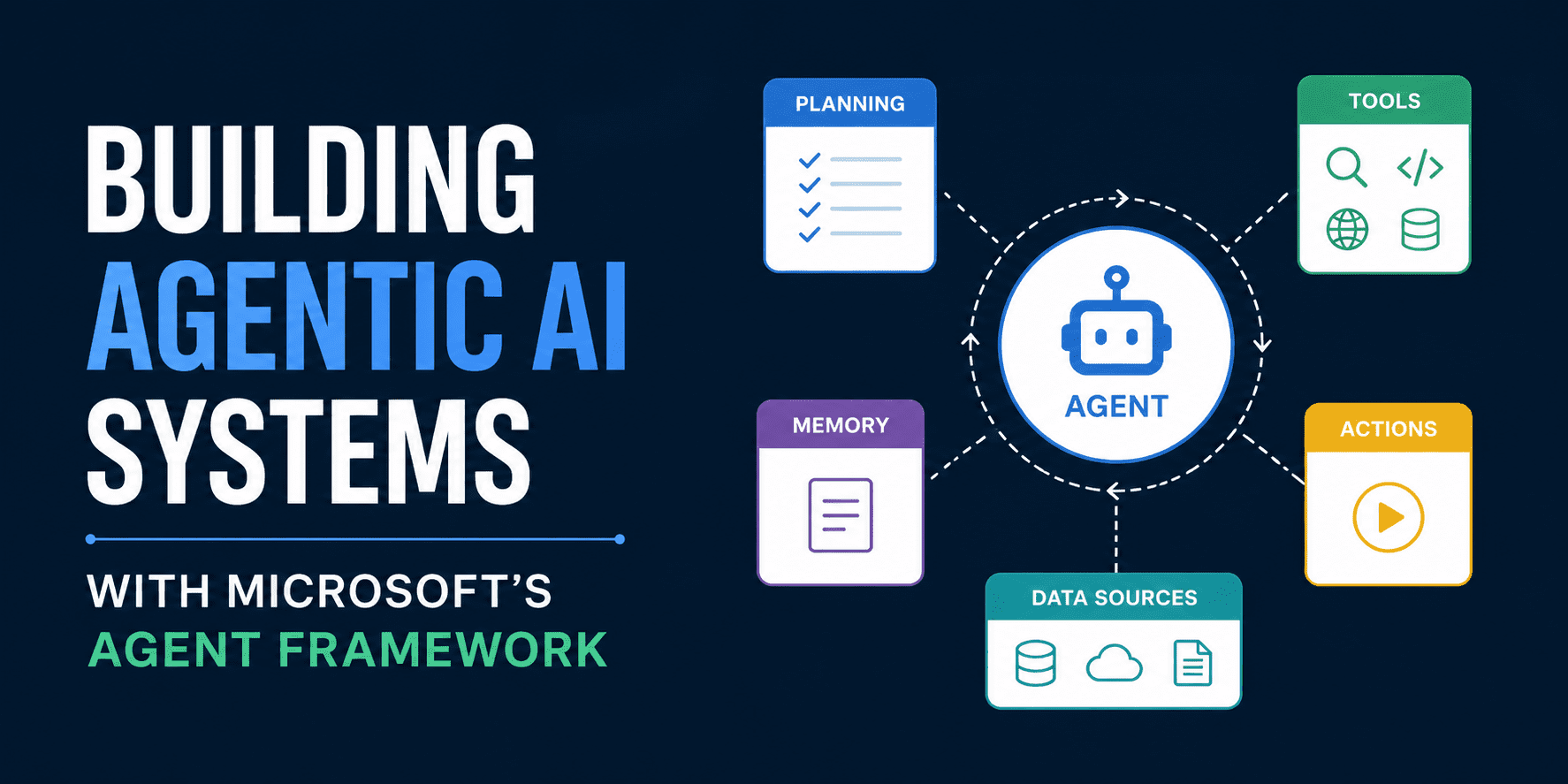

The real power of Agent Framework lies in its ability to weave these individual agents into complex, multi-step workflows. This isn’t just about one agent doing one thing; it’s about orchestrating multiple agents, each with their own specialized tools and data access, to achieve a larger objective. The framework provides the scaffolding for this, defining how agents communicate, pass information, and coordinate their actions.

Consider a scenario where an agent needs to first retrieve customer data, then analyze it for sentiment, and finally generate a personalized response. The Agent Framework defines the sequence, the handoffs, and the fallback mechanisms should any step in the process falter. This level of control is what elevates an AI from a simple chatbot to a sophisticated operational assistant.
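The retrieve, analyze, respond sequence with fallbacks could look roughly like this. It is a plain-Python sketch of the idea rather than the framework’s workflow API: `run_workflow` and every step function are hypothetical, and the sentiment step is made to fail on purpose so the fallback path is exercised.

```python
from typing import Any, Callable, List, Optional, Tuple

# (step name, primary handler, optional fallback handler)
Step = Tuple[str, Callable[[Any], Any], Optional[Callable[[Any], Any]]]


def run_workflow(steps: List[Step], payload: Any) -> Tuple[Any, List[str]]:
    """Run steps in order, passing each output on; use a fallback if a step raises."""
    trace: List[str] = []
    for name, primary, fallback in steps:
        try:
            payload = primary(payload)
            trace.append(f"{name}:ok")
        except Exception:
            if fallback is None:
                raise  # no recovery path defined for this step
            payload = fallback(payload)
            trace.append(f"{name}:fallback")
    return payload, trace


# Hypothetical step implementations standing in for real agents.
def retrieve_customer(customer_id: str) -> dict:
    return {"id": customer_id, "name": "Ada", "last_message": "My order is late again."}


def analyze_sentiment(customer: dict) -> dict:
    raise RuntimeError("sentiment service unavailable")  # simulated outage


def neutral_sentiment(customer: dict) -> dict:
    return {**customer, "sentiment": "unknown"}


def generate_response(customer: dict) -> str:
    if customer["sentiment"] == "negative":
        tone = "We're sorry for the trouble."
    else:
        tone = "Thanks for reaching out."
    return f"Hi {customer['name']}. {tone}"


STEPS: List[Step] = [
    ("retrieve", retrieve_customer, None),
    ("sentiment", analyze_sentiment, neutral_sentiment),
    ("respond", generate_response, None),
]

if __name__ == "__main__":
    reply, trace = run_workflow(STEPS, "c-42")
    print(reply)
    print(trace)
```

Keeping the trace alongside the result makes it easy to see after the fact which handoffs succeeded and which fell back.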

Agentic RAG: Beyond Simple Retrieval

Retrieval Augmented Generation (RAG) is a known quantity. But Agent Framework pushes it further with agentic RAG. This means RAG isn’t just a passive lookup; it’s an active, agent-driven process. An agent might perform multiple searches, filter results based on complex criteria, and synthesize information from various sources before feeding that synthesized context to a language model. This iterative, intelligent retrieval allows for more nuanced and accurate responses, especially in domains with vast and complex information landscapes. It’s about an agent strategizing its information gathering, not just executing a query.

For instance, an agent tasked with summarizing financial reports might first identify key sections, then cross-reference specific figures with historical data, and only then generate a concise overview. This multi-step, agent-guided retrieval can produce better-grounded answers than a single-shot lookup.
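The difference between a single lookup and agent-driven retrieval can be shown with a toy loop. Everything here is an illustrative assumption: the in-memory `CORPUS`, the substring `search`, and the rule-based `follow_ups` planner, which stands in for a language model deciding what to look up next.

```python
import re
from typing import List

# Hypothetical mini corpus; a real system would query a search index.
CORPUS = {
    "overview": "Revenue grew in Q3. See: segments.",
    "segments": "Cloud segment drove growth. See: history.",
    "history": "Cloud revenue was flat in Q2.",
    "unrelated": "Office relocation schedule.",
}


def search(query: str) -> List[str]:
    """Return ids of documents whose id or text contains the query."""
    q = query.lower()
    return [doc_id for doc_id, text in CORPUS.items() if q in doc_id or q in text.lower()]


def follow_ups(text: str) -> List[str]:
    """Rule-based stand-in for an LLM planner: follow 'See: <topic>' pointers."""
    return re.findall(r"See: (\w+)", text)


def gather_context(query: str, max_rounds: int = 3) -> List[str]:
    """Iteratively search, read results, and issue follow-up queries."""
    seen: List[str] = []
    pending = [query]
    rounds = 0
    while pending and rounds < max_rounds:
        rounds += 1
        next_queries: List[str] = []
        for q in pending:
            for doc_id in search(q):
                if doc_id in seen:
                    continue  # skip documents gathered in earlier rounds
                seen.append(doc_id)
                next_queries.extend(follow_ups(CORPUS[doc_id]))
        pending = next_queries
    return seen


if __name__ == "__main__":
    doc_ids = gather_context("Q3")
    context = "\n".join(CORPUS[d] for d in doc_ids)
    print(doc_ids)
    print(context)  # this synthesized context would be passed to the language model
```

With `max_rounds=1` the loop degenerates into a one-shot lookup, which makes it easy to compare what the extra rounds actually add.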

The framework’s extensibility is evident here. Developers can define custom retrieval strategies, agent behaviors, and even introduce new tools that augment the RAG process. This adaptability is paramount for building AI systems that can evolve with business needs.

The Bottom Line: Measurable Safety and Modular Scale

Microsoft’s Agent Framework isn’t just another AI toolkit. By front-loading empirical safety measurements and embracing a modular, protocol-driven architecture for tool access and workflow orchestration, it offers a pragmatic path toward building production-ready agentic systems. It’s a clear signal that enterprise AI is moving beyond nascent experimentation and into an era where reliability, safety, and scalability are non-negotiable requirements. The focus on measurable safety and decoupled components suggests a mature approach, a welcome departure from the often-hyped capabilities seen elsewhere. This is less about what AI can do, and more about what it should do, and how to ensure it does it correctly.

Frequently Asked Questions

What is Microsoft’s Agent Framework? Microsoft’s Agent Framework is a set of tools and guidelines that unifies Semantic Kernel and AutoGen, designed to help developers build production-grade AI agentic systems with features like safety configuration, observability, and workflow orchestration.

How does Microsoft’s Agent Framework handle safety? It treats safety as an empirical measurement problem, using tools like dual-model comparison runners to demonstrate the impact of guardrails on model responses to provocative prompts, and allowing developers to quantify safety overheads.