🔧 AI Hardware

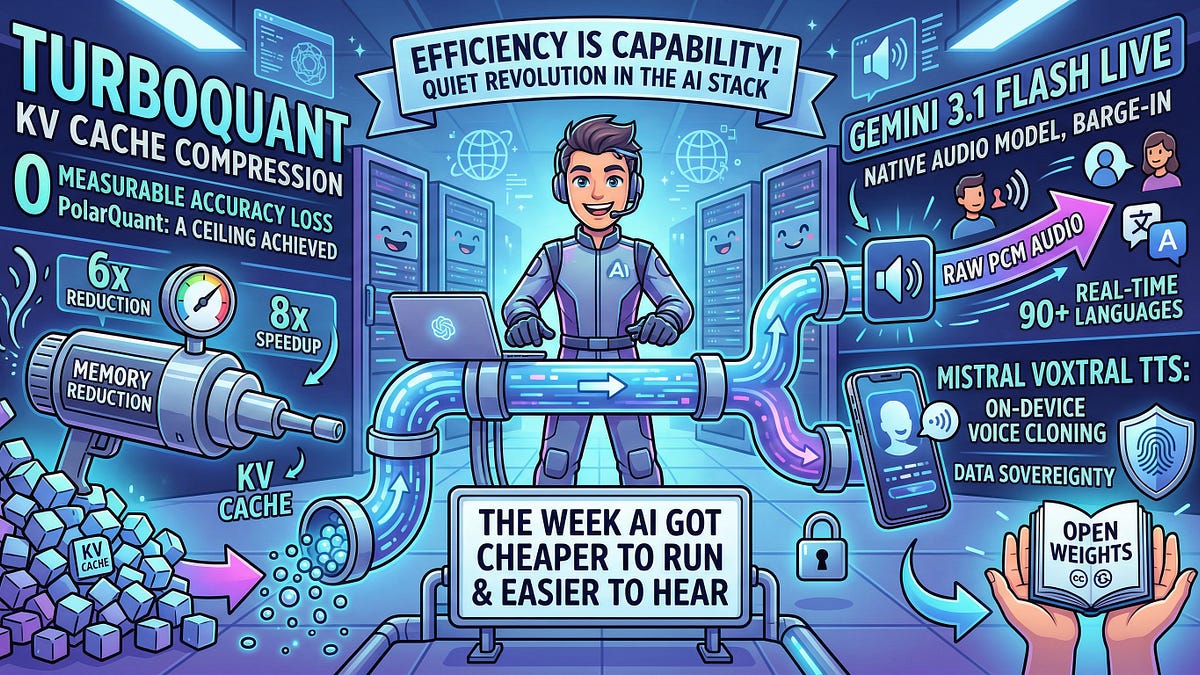

TurboQuant Crushes KV Cache Memory on Apple Silicon

Apple Silicon just got a memory boost that LLMs crave: TurboQuant squeezes the KV cache 5x under MLX, making long-context on-device inference far more practical.

theAIcatchup

Apr 09, 2026

3 min read

⚡ Key Takeaways

- TurboQuant achieves 5x KV cache compression on Apple Silicon via MLX, tackling LLM memory bottlenecks.

- Apple's unified memory architecture amplifies the payoff from quantization compared with discrete GPUs.

- Expect native 1M-token contexts on M-series chips by 2025, revolutionizing on-device AI.
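The 5x figure is easier to judge with the usual KV cache arithmetic: keys and values are stored for every layer, KV head, head dimension and token, so memory is 2 × layers × KV heads × head_dim × tokens × bytes per value. The sketch below plugs in a hypothetical 8B-class model shape (32 layers, 8 KV heads, head_dim 128), not one named in the article, and models generic group-wise 3-bit quantization with an assumed fp16 scale and zero-point per group of 128 values. That scheme only illustrates how a ratio near 5x can arise; it is not TurboQuant's actual algorithm.

```python
# Back-of-envelope KV cache sizing. The model shape is HYPOTHETICAL and the
# group-wise quantization overhead model is a generic ASSUMPTION, not
# TurboQuant's actual scheme.

GIB = 1024 ** 3


def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bits_per_value):
    """Bytes to hold keys AND values (hence the factor of 2) for one sequence."""
    values = 2 * num_layers * num_kv_heads * head_dim * seq_len
    return values * bits_per_value / 8


def effective_bits(bits, group_size, metadata_bits=32):
    """Average bits per value for group-wise quantization.

    Assumes one fp16 scale plus one fp16 zero-point (32 bits total) is stored
    per group of `group_size` values.
    """
    return bits + metadata_bits / group_size


# Hypothetical 8B-class model using grouped-query attention.
CONFIG = dict(num_layers=32, num_kv_heads=8, head_dim=128)

for seq_len in (32_768, 1_000_000):
    fp16 = kv_cache_bytes(**CONFIG, seq_len=seq_len, bits_per_value=16)
    # Apply the 5x ratio the article reports for TurboQuant.
    print(f"{seq_len:>9,} tokens: fp16 {fp16 / GIB:6.1f} GiB, "
          f"5x smaller {fp16 / 5 / GIB:6.1f} GiB")

# A 5x reduction from 16-bit values leaves about 3.2 bits per stored value;
# 3-bit codes plus per-group metadata land close to that budget.
bits = effective_bits(3, 128)
print(f"3-bit, group 128: {bits:.2f} bits/value, {16 / bits:.2f}x vs fp16")
```

For this hypothetical shape each token costs 128 KiB of fp16 KV state, so 1M tokens needs about 122 GiB; a 5x reduction brings that to roughly 24 GiB, which is where unified memory capacity starts to matter. The 3-bit, group-128 case works out to about 4.92x once the per-group metadata is counted.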

