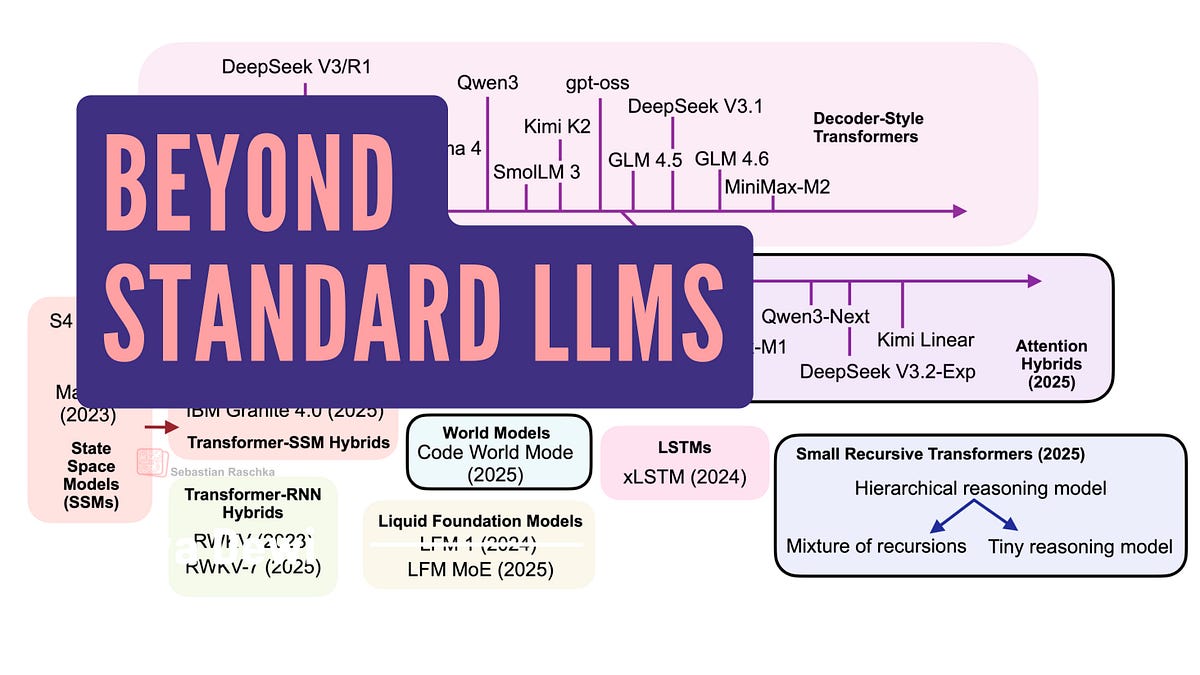

Linear Attention Rebels: The LLM Shakeup Transformers Can't Ignore

Picture your AI zipping through novels like a bullet train instead of crawling through traffic. Linear attention hybrids take aim at the quadratic cost of standard transformer attention.

⚡ Key Takeaways

- Linear attention hybrids cut the quadratic cost of attention in sequence length, which makes million-token contexts more practical.

- Text diffusion and code world models rethink generation for creativity and simulation.

- Small recursive transformers could power edge AI, pointing to a more decentralized future.
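
To make the first takeaway concrete, here is a minimal sketch of why linear attention avoids the quadratic cost. It assumes the kernelized formulation with an `elu(x) + 1` feature map, which is one common variant and not necessarily the one any particular hybrid model uses. The function names and toy inputs are hypothetical. The point is only that reordering the matrix products gives the same output while touching each key/value pair once.

```python
import math

def feature_map(x):
    # elu(x) + 1: a positive feature map (an assumption for this sketch)
    return [v + 1.0 if v > 0 else math.exp(v) for v in x]

def quadratic_form(Q, K, V):
    # Compares every query with every key: O(n^2) in sequence length n.
    d_v = len(V[0])
    out = []
    for q in Q:
        fq = feature_map(q)
        weights = [sum(a * b for a, b in zip(fq, feature_map(k))) for k in K]
        norm = sum(weights)
        out.append([sum(w * v[c] for w, v in zip(weights, V)) / norm
                    for c in range(d_v)])
    return out

def linear_form(Q, K, V):
    # Summarizes all keys/values once into a d_k x d_v state S and a
    # normalizer z, then reuses them for every query: O(n) in sequence length.
    d_k, d_v = len(K[0]), len(V[0])
    S = [[0.0] * d_v for _ in range(d_k)]
    z = [0.0] * d_k
    for k, v in zip(K, V):
        fk = feature_map(k)
        for r in range(d_k):
            z[r] += fk[r]
            for c in range(d_v):
                S[r][c] += fk[r] * v[c]
    out = []
    for q in Q:
        fq = feature_map(q)
        norm = sum(a * b for a, b in zip(fq, z))
        out.append([sum(fq[r] * S[r][c] for r in range(d_k)) / norm
                    for c in range(d_v)])
    return out

# Toy inputs: 3 tokens, key dimension 2, value dimension 2.
Q = [[0.5, -1.0], [1.5, 0.2], [-0.3, 0.8]]
K = [[1.0, 0.0], [-0.5, 2.0], [0.3, -0.7]]
V = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]

slow = quadratic_form(Q, K, V)
fast = linear_form(Q, K, V)
```

Both functions compute the same non-causal attention output. The second keeps a fixed-size state regardless of how many tokens have been seen, which is the property that makes very long contexts cheaper.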

Originally reported by Ahead of AI