AI Agents That Know When to Forget: Deep Agents' Autonomous Context Magic

Picture this: your AI coding assistant doesn't drown in stale conversation history; it prunes its own context. Deep Agents now lets agents decide for themselves when to compress what they carry, much as a person sets aside details they no longer need.

⚡ Key Takeaways

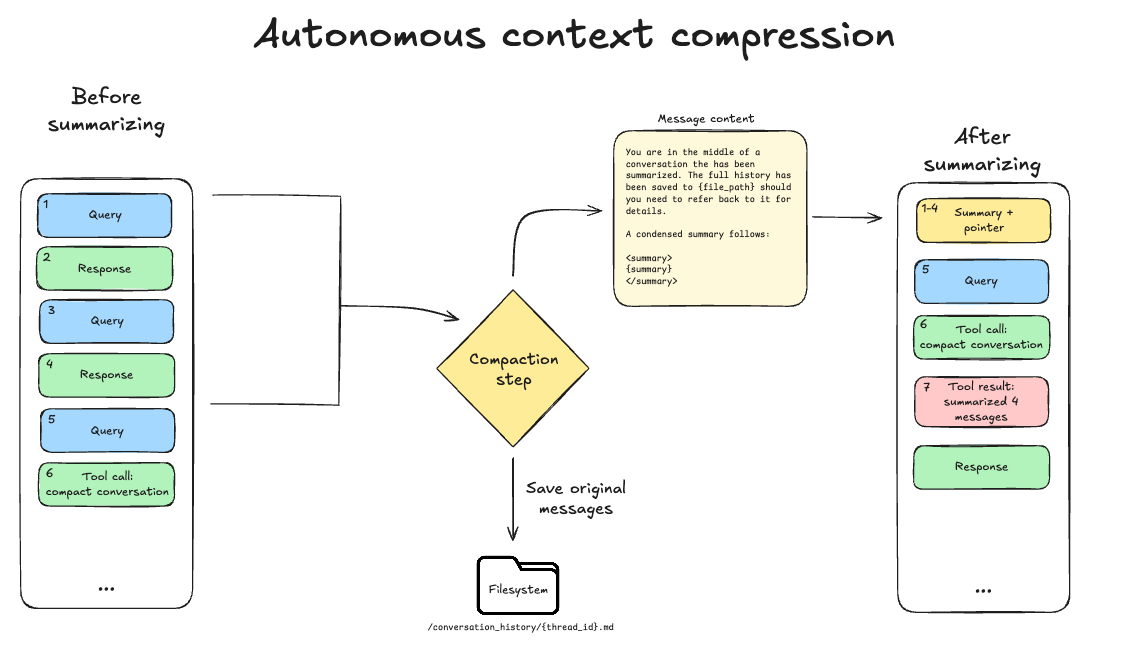

- AI agents can now trigger context compression themselves at opportune moments, avoiding context rot without human intervention.

- Inspired by the 'bitter lesson,' the approach hands control to the model instead of relying on rigid harness rules.

- This unlocks marathon workflows: a bold bet that self-managing agents can keep running indefinitely.
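
To make the idea concrete, here is a minimal sketch of agent-triggered compression. It is not Deep Agents' actual API: `AgentContext`, `run_agent`, `naive_summary`, and the `wants_compression` callback are hypothetical names invented for illustration. The callback stands in for the model deciding to call a compression tool, and the summary is a placeholder string rather than a real model-written summary.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Message:
    role: str
    content: str


@dataclass
class AgentContext:
    messages: List[Message] = field(default_factory=list)

    def compress(self, summarize: Callable[[List[Message]], str], keep_last: int = 1) -> None:
        # Replace everything except the most recent messages with one summary.
        if len(self.messages) <= keep_last:
            return
        old, recent = self.messages[:-keep_last], self.messages[-keep_last:]
        self.messages = [Message("system", summarize(old))] + recent


def naive_summary(messages: List[Message]) -> str:
    # Placeholder: a real agent would ask the model to write this summary.
    return f"Summary of {len(messages)} earlier messages."


def run_agent(
    steps: List[str],
    wants_compression: Callable[[AgentContext], bool],
    context: Optional[AgentContext] = None,
) -> AgentContext:
    if context is None:
        context = AgentContext()
    for step in steps:
        context.messages.append(Message("user", step))
        # Hypothetical: the agent, not a fixed harness threshold, decides when to compress.
        if wants_compression(context):
            context.compress(naive_summary)
    return context
```

In this sketch, a policy such as `lambda ctx: ctx.messages[-1].content.startswith("done")` compresses at a task boundary instead of at a fixed token count, which is the kind of "smart moment" the takeaways describe.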


Originally reported by LangChain Blog