Attention as Gibbs Distribution: Neat Math Trick or Transformer Revelation?

Physicists are invading AI again, swearing attention mechanisms are secretly Gibbs distributions. Proof dropped — but is it profound or just probabilistic poetry?

⚡ Key Takeaways

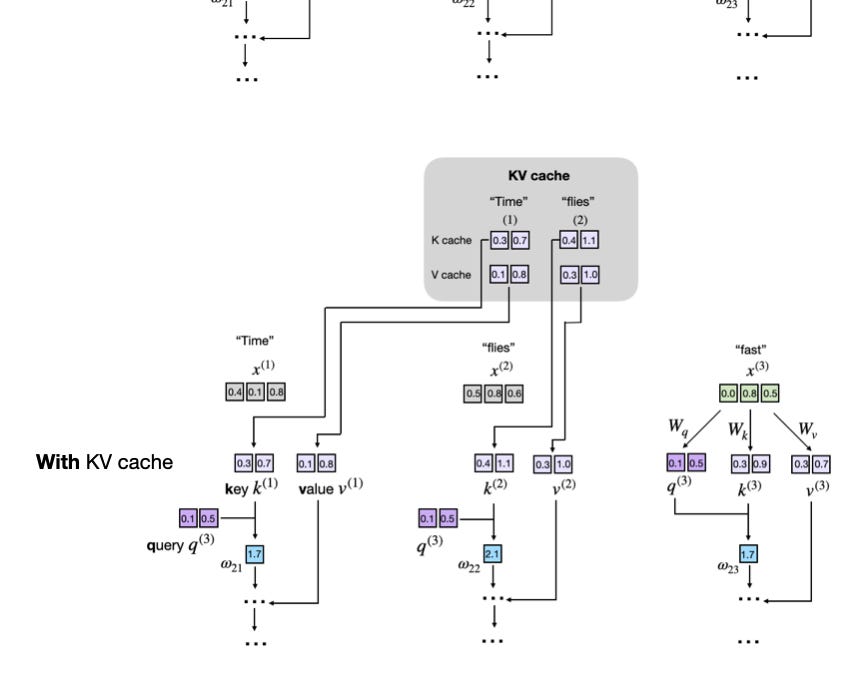

- Softmax attention weights are mathematically identical to a Gibbs (Boltzmann) distribution whose energies are the negated query-key similarity scores.

- This is a rediscovery, not a revolution: it echoes 1980s energy-based models such as Boltzmann machines.

- Hype over substance: elegant theory, zero practical impact on current transformer deployments.
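
The identity in the first takeaway is easy to check numerically. The sketch below is a minimal illustration, not code from the original report: it assumes single-head scaled dot-product attention with no masking, treats each energy as the negated raw query-key dot product, and treats the usual square-root-of-dimension scaling as the temperature. The helper names (`softmax`, `gibbs`, `dot`) and the toy vectors are hypothetical.

```python
import math


def dot(a, b):
    """Dot product of two equal-length lists of floats."""
    return sum(x * y for x, y in zip(a, b))


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def gibbs(energies, temperature):
    """Gibbs distribution p_i = exp(-E_i / T) / Z."""
    # Shifting by the minimum energy avoids overflow and cancels in the ratio.
    e_min = min(energies)
    weights = [math.exp(-(e - e_min) / temperature) for e in energies]
    z = sum(weights)  # partition function (up to the shift above)
    return [w / z for w in weights]


# Toy data, made up for illustration: one query attending over three keys.
query = [1.0, 0.5, -0.3, 2.0]
keys = [
    [0.2, 1.0, 0.0, -0.5],
    [1.5, -0.2, 0.3, 0.8],
    [-1.0, 0.4, 0.9, 0.1],
]
d = len(query)

# Standard attention: softmax of scaled query-key scores.
attention = softmax([dot(query, k) / math.sqrt(d) for k in keys])

# Same numbers read as physics: energy = -(q . k), temperature = sqrt(d).
energies = [-dot(query, k) for k in keys]
boltzmann = gibbs(energies, temperature=math.sqrt(d))

max_gap = max(abs(a - b) for a, b in zip(attention, boltzmann))
print("attention:", attention)
print("gibbs:    ", boltzmann)
print("max abs difference:", max_gap)
```

The two lists agree up to floating-point rounding, which is the whole content of the claim: the key with the lowest energy (highest similarity) gets the most weight, and raising the temperature flattens the distribution.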


Originally reported by Towards AI