Autoresearch: AI's Tentative Toddle Toward Self-Training

Andrej Karpathy lets agents loose on nanochat, and they actually speed things up. Is this a tiny spark of recursion, or fool's gold in the AGI chase?

⚡ Key Takeaways

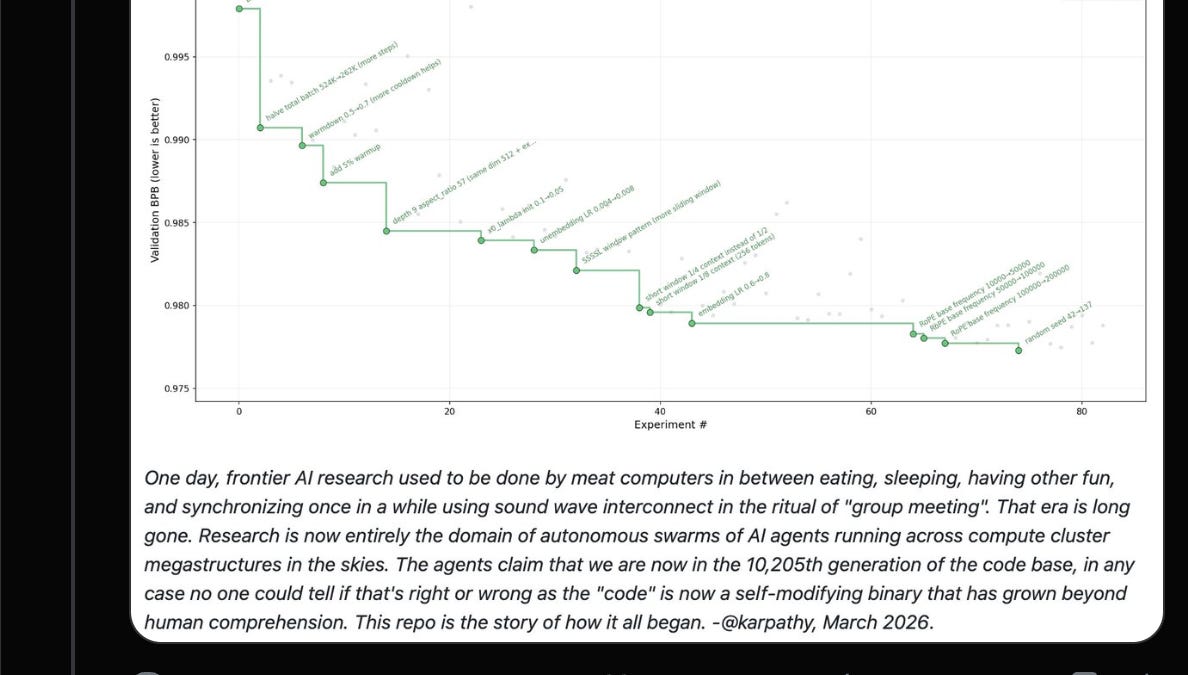

- Karpathy's autoresearch setup yields 11% faster training on small nanochat models through tweaks proposed by agents.

- The bottleneck in self-improving loops is verification, not generation, echoing the AutoML failures of the 2010s.

- Vibe training lets humans hand off bug fixing to agents, but full recursion stalls without a trusted way to judge the results.

Originally reported by Latent Space