AI Model Evaluation and Benchmarking: How to Measure AI Performance

Measuring AI performance requires the right metrics and benchmarks. This guide covers evaluation methodology from basic metrics to comprehensive benchmarking strategies.

⚡ Key Takeaways

- No single metric is sufficient. Effective AI evaluation requires multiple complementary metrics covering accuracy, robustness, fairness, and efficiency to capture different dimensions of model quality.

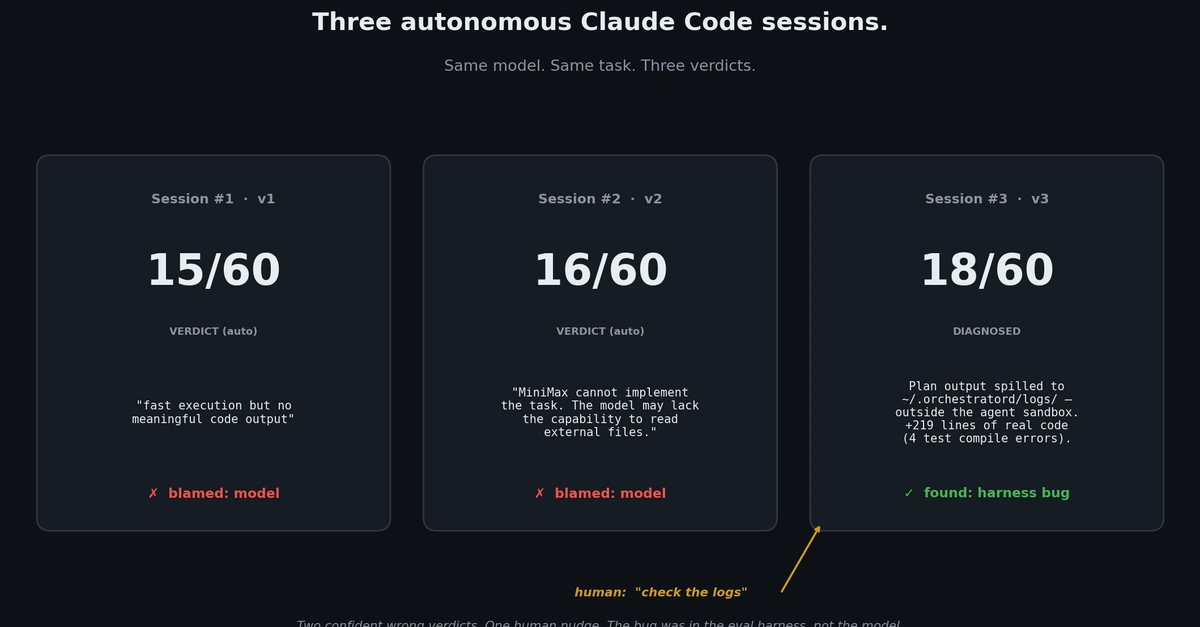

- Benchmarks have real limitations. Popular benchmarks enable standardized comparison but are vulnerable to overfitting, and strong benchmark performance does not guarantee real-world utility.

- Human evaluation remains the gold standard. For open-ended AI tasks, human evaluation and LLM-as-judge approaches capture quality dimensions that conventional automated metrics miss.
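
To make the first takeaway concrete, here is a minimal sketch of why accuracy alone can mislead. It is an illustration only: the function name `classification_metrics`, the labels, and the always-negative "model" are hypothetical and not taken from any particular benchmark.

```python
from typing import Dict, Sequence


def classification_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Compute accuracy, precision, recall and F1 for binary labels (1 = positive)."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("y_true and y_pred must be non-empty and the same length")

    # Count the four cells of the confusion matrix.
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)

    accuracy = (tp + tn) / len(y_true)
    # Fall back to 0.0 when a denominator is zero (no predicted or actual positives).
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)) if (precision + recall) else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Hypothetical imbalanced dataset: 95 negatives, 5 positives.
y_true = [0] * 95 + [1] * 5
# A degenerate "model" that always predicts the majority (negative) class.
y_pred = [0] * 100

metrics = classification_metrics(y_true, y_pred)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

On this toy data the accuracy looks strong while recall and F1 are zero, because the model never finds a single positive case. Reporting the metrics side by side exposes a failure that any one number could hide.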
