45 LLM Architectures Walked Into a Gallery—Most Won't Survive the Hype Cycle

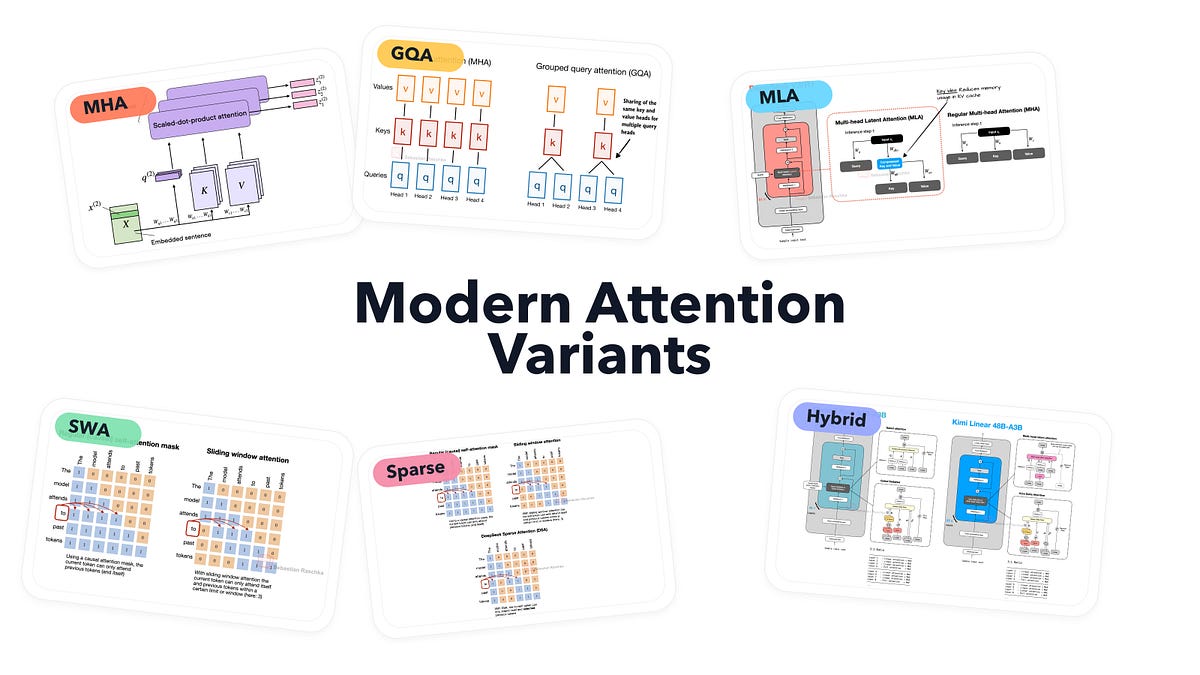

A single gallery crams 45 LLM architectures into visual model cards, from vanilla multi-head attention to exotic sparse hybrids. But after 20 years in this game, I gotta ask: are these tweaks genius or desperate compute bandaids?

⚡ Key Takeaways

- 45 architectures cataloged, but most attention variants are efficiency hacks, not breakthroughs.

- GQA and sparse attention slash memory—great for inference, but hardware giants profit most.

- History repeats: attention tweaks echo 90s kernel hype; expect convergence by 2026.

🧠 What's your take on this?

Cast your vote and see what theAIcatchup readers think

Worth sharing?

Get the best AI stories of the week in your inbox — no noise, no spam.

Originally reported by Ahead of AI