21 Models in a Pipeline: The Grubby Reality Behind Knowledge Graph Quality

Legal text chews up massive LLMs. But cram 21 models into a pipeline? Suddenly, knowledge graph quality soars, and at a fraction of the compute. Here's the cynical breakdown.

⚡ Key Takeaways

- A pipeline of 21 smaller models can outperform a single giant LLM on knowledge graph quality, especially on structured text like legal documents.

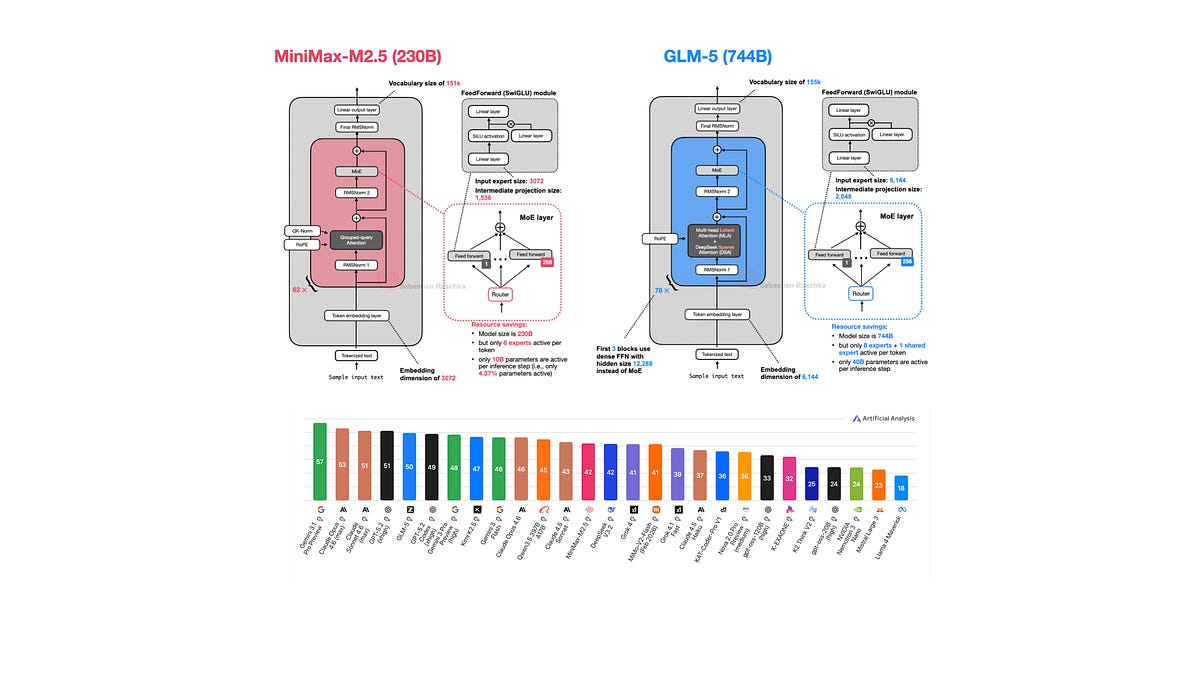

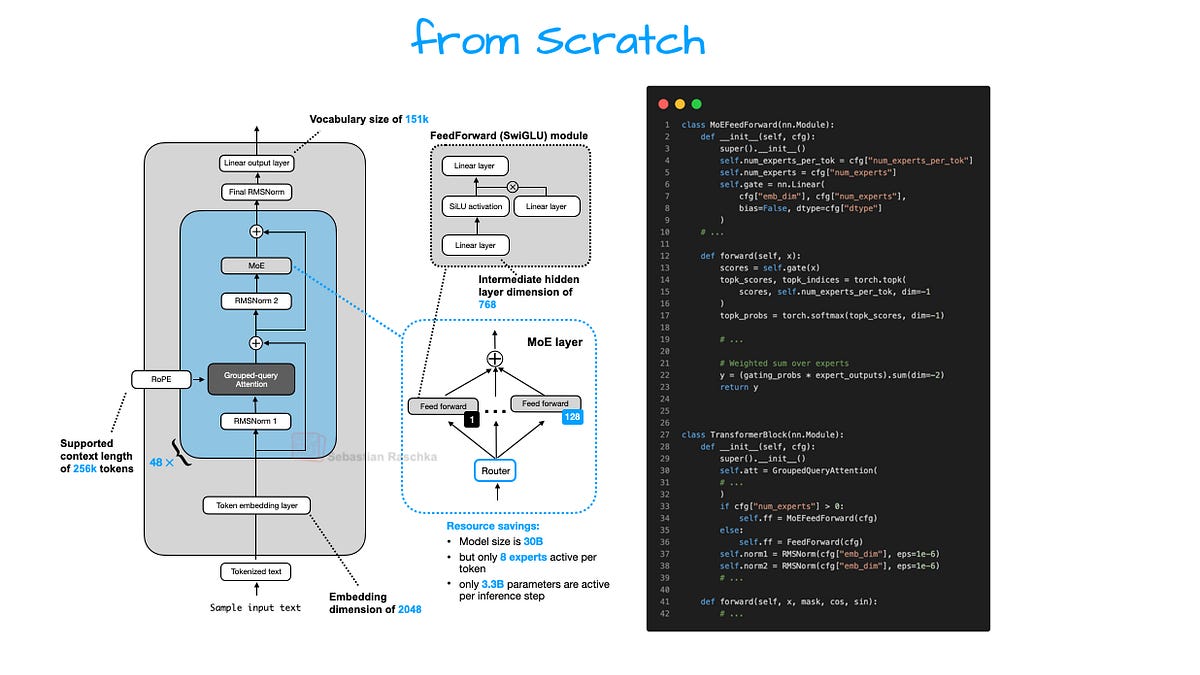

- Mixture-of-experts (MoE) architectures let smaller models punch above their weight by routing each input to a few specialized experts.

- Real winners: graph DB vendors and enterprise toolmakers, not pure AI hype chasers.
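
For readers who want the expert-routing idea in concrete terms, here is a minimal sketch of top-k gating, the generic mechanism behind most MoE layers. It is not the pipeline from the original report: the function names (`route_top_k`, `moe_forward`), the linear gate, and the toy experts are all assumptions made for illustration.

```python
import math
from typing import Callable, List, Sequence, Tuple

# Hypothetical expert type: takes a feature vector, returns a single score.
Expert = Callable[[Sequence[float]], float]


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax over raw gate scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(gate_scores: Sequence[float], k: int) -> List[Tuple[int, float]]:
    """Pick the k highest-scoring experts and renormalize their weights to sum to 1."""
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


def moe_forward(
    x: Sequence[float],
    gate_weights: Sequence[Sequence[float]],
    experts: Sequence[Expert],
    k: int = 2,
) -> float:
    """Run only the k selected experts and blend their outputs by gate weight."""
    # Assumed linear gate: one score per expert (dot product of its weight row with x).
    scores = [sum(w * xi for w, xi in zip(row, x)) for row in gate_weights]
    routed = route_top_k(scores, k)
    # Experts that were not selected are never called, which is where the compute saving comes from.
    return sum(weight * experts[i](x) for i, weight in routed)


# Toy usage with three made-up experts.
toy_experts: List[Expert] = [
    lambda v: sum(v),   # expert 0
    lambda v: max(v),   # expert 1
    lambda v: 0.0,      # expert 2
]
toy_gate = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]
result = moe_forward([1.0, 2.0], toy_gate, toy_experts, k=1)
```

With `k=1` the gate sends the input to a single expert; raising `k` trades extra compute for a blend of several experts' outputs.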

Originally reported by Towards AI